Most businesses already know their customers want fast, accurate answers on messaging apps. The real challenge? Building the system that delivers those answers automatically, around the clock, without sounding like a robot reading a script. That’s where a well-designed AI conversational automation pipeline comes in. It’s the backbone connecting your AI models, data sources, and messaging channels into one coherent system. And with the conversational AI market projected to reach USD 41.39 billion by 2030, this isn’t a niche experiment anymore. It’s becoming standard infrastructure.

Whether you run a dental clinic, a car dealership, or a boutique hotel, the principles are the same. You need a pipeline that understands what customers say, knows where to find the right answer, and delivers it on the channel they prefer. This guide walks you through every layer of that system, from architecture to ongoing monitoring, so you can build something that actually works.

Defining the Architecture of an AI Conversational Pipeline

Think of your pipeline as a series of stations. A message arrives, gets interpreted, routes through logic, pulls relevant data, and sends a response. Each station has a job, and if one fails, the whole experience breaks down. Your architecture determines how fast, accurate, and flexible your system can be.

A solid conversational automation pipeline has three broad layers: understanding (NLU), reasoning (LLMs and logic), and action (integrations and responses). Designing these layers to work together is the single most important decision you’ll make.

Core Components: NLU, LLMs, and Orchestration Layers

Natural Language Understanding (NLU) is your front door. It parses incoming messages, identifies intent, and extracts entities like dates, product names, or account numbers. Without strong NLU, everything downstream gets garbage input.

Large Language Models handle the heavy lifting: generating responses, summarizing documents, and reasoning through ambiguous requests. But they need an orchestration layer to stay on track. That layer manages conversation state, enforces business rules, and decides when to call an API versus when to hand off to a human agent. Think of orchestration as the traffic cop between your AI brain and your business logic.
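To make the traffic-cop idea concrete, here is a minimal sketch of an orchestration step. Everything in it is an assumption for illustration: the keyword-based `understand` function stands in for a real NLU model, and the action names (`call_api`, `generate_with_llm`, `handoff_to_agent`) are made up rather than taken from any particular platform.

```python
from dataclasses import dataclass, field


@dataclass
class ConversationState:
    """Conversation memory kept by the orchestration layer."""
    history: list = field(default_factory=list)


def understand(message: str) -> str:
    """Toy NLU stand-in: map a message to an intent label via keywords."""
    text = message.lower()
    if "agent" in text or "human" in text:
        return "talk_to_human"
    if "order" in text:
        return "order_status"
    return "general_question"


# Hypothetical business rule: these intents need live data, so they go to an API.
API_INTENTS = {"order_status"}


def orchestrate(message: str, state: ConversationState) -> str:
    """Record the turn, then decide which station handles the message next."""
    intent = understand(message)
    state.history.append((message, intent))
    if intent == "talk_to_human":
        return "handoff_to_agent"
    if intent in API_INTENTS:
        return "call_api"
    return "generate_with_llm"
```

The point of the design is that routing rules live in one place, outside the model, so you can change a business rule without retraining anything.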

Selecting the Right Tech Stack for Scalability

Your tech stack should match your growth trajectory. A beauty salon with 200 monthly conversations doesn’t need the same infrastructure as a financial services firm handling 50,000. Start with what you need now, but pick tools that won’t box you in later.

Platforms like Wexio offer no-code visual flow builders that let you design conversation logic with AI cards, conditional branching, and version control. That means you can start simple and layer in complexity as your volume grows. The key question isn’t “what’s the most powerful tool?” It’s “what can my team actually maintain?”

Data Engineering and Knowledge Base Integration

Your AI is only as good as the information it can access. A beautifully designed conversation flow falls flat if it can’t pull the right answer from your knowledge base. Data engineering is the unsexy but critical work of making your information AI-ready.

Implementing Retrieval-Augmented Generation (RAG)

RAG solves a fundamental problem: LLMs don’t know your business. They know the internet. RAG lets you ground AI responses in your own data by retrieving relevant documents before generating a reply.

Here’s the basic flow: a customer asks a question, your system searches a vector database of your documents, retrieves the most relevant chunks, and feeds them to the LLM as context. The model then generates an answer based on your actual policies, pricing, or product specs. This approach dramatically reduces hallucinations and keeps responses accurate.
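The retrieve-then-generate flow can be sketched in a few lines. This is a toy version under loud assumptions: word counts replace a real embedding model, a Python list replaces the vector database, and the final prompt is only built, not sent to any LLM. The sample chunks are invented.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': bag-of-words counts (a real system would use a model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, chunks: list, k: int = 2) -> list:
    """Return the k chunks most similar to the question."""
    q = embed(question)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


def build_prompt(question: str, chunks: list, k: int = 2) -> str:
    """Put the retrieved chunks in front of the question as grounding context."""
    context = "\n".join(f"- {c}" for c in retrieve(question, chunks, k))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


# Invented sample knowledge base.
CHUNKS = [
    "Refunds are issued within 14 days of purchase.",
    "Our clinic is open Monday to Friday, 9am to 5pm.",
    "Test drives can be booked online or by phone.",
]
```

Calling `build_prompt("Can I book a test drive online?", CHUNKS, k=1)` puts the test-drive chunk into the context, which is the grounding step that keeps the model's answer tied to your own documents.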

Structuring Unstructured Data for AI Consumption

Most business knowledge lives in messy formats: PDFs, email threads, Slack messages, and scattered Google Docs. Before RAG can work, you need to clean and chunk that data.

Break documents into logical sections. Add metadata like topic tags, dates, and source labels. Use consistent formatting so your retrieval system can rank results properly. This is grunt work, but it pays off every single day your pipeline runs. Review your knowledge base quarterly to remove outdated information. Stale data is worse than no data.
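Here is one rough way the chunk-and-tag step might look. The `Chunk` fields and the 500-character limit are assumptions chosen for illustration. The hard split on long paragraphs is deliberately crude, and a production pipeline would split on sentence or section boundaries instead.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    text: str
    source: str   # where the text came from, e.g. a file name
    topic: str    # topic tag used for filtering at retrieval time
    updated: str  # ISO date, handy for the quarterly stale-data review


def chunk_document(raw: str, source: str, topic: str, updated: str,
                   max_chars: int = 500) -> list:
    """Split raw text on blank lines, normalize whitespace, attach metadata."""
    chunks = []
    for para in raw.split("\n\n"):
        para = " ".join(para.split())  # collapse line breaks and extra spaces
        if not para:
            continue
        # Crude safety net: cut very long paragraphs into fixed-size pieces.
        for start in range(0, len(para), max_chars):
            chunks.append(Chunk(para[start:start + max_chars], source, topic, updated))
    return chunks
```

Because every chunk carries its source and date, removing outdated material later becomes a simple filter rather than a manual hunt.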

Designing Intent Recognition and Dialogue Flow

Intent recognition is where your pipeline decides what the customer actually wants. Get this wrong, and you’ll send people down frustrating dead ends. Get it right, and conversations feel surprisingly natural.

Mapping User Journeys and Decision Trees

Start by listing your top 10 to 15 customer intents. For a car dealership, that might include booking a test drive, checking service availability, and asking about financing. For a healthcare clinic, it’s appointment scheduling, insurance questions, and prescription refills.

Map each intent to a conversation flow. What information do you need to collect? What’s the ideal outcome? Where does the conversation branch? Visual flow builders with industry-specific templates give you a canvas for this exact work. You drag, drop, and connect nodes instead of writing code.
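If you prefer to think in data rather than diagrams, the same mapping can be written as a small table of intents, the information each one needs, and the outcome. The intent names and slots below are hypothetical examples for a car dealership, not a schema from any specific flow builder.

```python
# Hypothetical flow definitions: what to collect and what "done" means.
FLOWS = {
    "book_test_drive": {
        "required": ["model", "date", "phone"],
        "outcome": "test drive booked",
    },
    "service_availability": {
        "required": ["date"],
        "outcome": "service slot offered",
    },
}


def next_step(intent: str, collected: dict) -> str:
    """Return the next node in the flow given what the user has provided so far."""
    flow = FLOWS.get(intent)
    if flow is None:
        return "fallback"  # unknown intent: branch to the fallback flow
    for slot in flow["required"]:
        if slot not in collected:
            return f"ask:{slot}"  # branch: still missing information
    return f"done:{flow['outcome']}"
```

For example, `next_step("book_test_drive", {"model": "hatchback"})` returns `"ask:date"`, which is the same branch you would draw as a node on the canvas.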

Handling Edge Cases and Fallback Mechanisms

No AI handles every message perfectly. Your pipeline needs clear fallback rules for when things go sideways. Set specific triggers for human handoff: negative sentiment detection, two or more failed intent matches, or an explicit request to speak with a person.

Don’t just say “I didn’t understand.” Give users options. Offer a menu of common topics or ask a clarifying question. The goal is to keep the conversation moving, not to pretend your AI is smarter than it is. Treat chat transcripts as free user research and review them regularly. They’ll reveal logic gaps and unexpected questions faster than any analytics dashboard.
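The handoff triggers and the menu-style fallback might be sketched like this. The sentiment score is assumed to come from some upstream model on a scale of -1 to 1, and the -0.5 threshold and the keyword list are illustrative guesses you would tune against your own transcripts.

```python
import re

# Phrases treated as an explicit request for a person (illustrative list).
HANDOFF_PATTERN = re.compile(
    r"\b(?:human|agent|real person|representative)\b", re.IGNORECASE
)


def handoff_reason(message: str, failed_matches: int, sentiment: float):
    """Return why the conversation should go to a human, or None to continue."""
    if HANDOFF_PATTERN.search(message):
        return "explicit_request"
    if failed_matches >= 2:
        return "repeated_failed_matches"
    if sentiment < -0.5:  # assumed threshold on a -1..1 sentiment score
        return "negative_sentiment"
    return None


def fallback_reply(topics: list) -> str:
    """Offer a numbered menu instead of a bare 'I didn't understand'."""
    options = "\n".join(f"{i}. {t}" for i, t in enumerate(topics, 1))
    return "I want to make sure I get this right. Which of these is closest?\n" + options
```

Returning a reason instead of a plain true or false gives your human agents context when the conversation lands in their queue.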

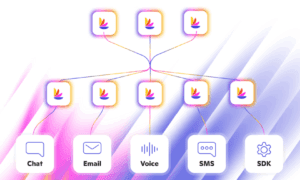

Developing API and System Integrations

A chatbot that can’t do anything is just a fancy FAQ page. Real value comes from connecting your pipeline to the systems that run your business. That means APIs, webhooks, and data flowing in both directions.

Connecting to CRM and ERP Systems

Your conversational pipeline should read and write to your CRM. When a customer asks about their order status, the bot queries your system in real time. When a new lead provides their email, it creates a contact record automatically.
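As a rough illustration of reading and writing in the same conversation, the sketch below uses an in-memory class in place of a real CRM. `InMemoryCRM`, its methods, and the sample order are all hypothetical. A real integration would call your CRM vendor's API behind the same two operations.

```python
class InMemoryCRM:
    """Hypothetical stand-in for a real CRM client."""

    def __init__(self):
        self.orders = {"A1001": "shipped"}  # invented sample data
        self.contacts = {}

    def get_order_status(self, order_id: str):
        """Read path: look up an order, or None if it does not exist."""
        return self.orders.get(order_id)

    def upsert_contact(self, email: str, **fields) -> dict:
        """Write path: create the contact if new, otherwise update it."""
        key = email.lower()
        record = self.contacts.setdefault(key, {"email": key})
        record.update(fields)
        return record


def answer_order_question(crm: InMemoryCRM, order_id: str) -> str:
    """Turn a CRM lookup into a customer-facing reply."""
    status = crm.get_order_status(order_id)
    if status is None:
        return f"I couldn't find order {order_id}. Could you double-check the number?"
    return f"Order {order_id} is currently: {status}."
```

Using an upsert keyed on the lowercased email keeps the bot from creating a duplicate contact every time the same lead writes in.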

This two-way sync eliminates double data entry and keeps your team informed. Industry research shows that well-implemented chatbots can reduce customer service costs by 40-60% for enterprises, and a big chunk of those savings comes from eliminating manual data transfer between systems. Make sure your tools connect to your existing CRM and marketing stack so data flows without friction.

Implementing Webhooks for Real-Time Action Execution

Webhooks let external events trigger actions in your pipeline. A payment confirmation fires a webhook, and your bot sends a receipt on WhatsApp. A new appointment booking triggers a confirmation message on Telegram.
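A webhook receiver usually boils down to a lookup table from event type to action. The sketch below assumes a JSON body with `type` and `data` fields and uses invented event names. `send_message` is a placeholder that returns what would be sent instead of calling a real WhatsApp or Telegram API.

```python
import json


def send_message(channel: str, to: str, text: str) -> dict:
    """Placeholder sender: returns the outgoing message instead of sending it."""
    return {"channel": channel, "to": to, "text": text}


def on_payment_confirmed(data: dict) -> dict:
    return send_message(
        "whatsapp", data["phone"],
        f"Payment of {data['amount']} received. Receipt: {data['receipt_id']}",
    )


def on_appointment_booked(data: dict) -> dict:
    return send_message(
        "telegram", data["chat_id"],
        f"Your appointment is confirmed for {data['time']}.",
    )


# Hypothetical event names mapped to their handlers.
HANDLERS = {
    "payment.confirmed": on_payment_confirmed,
    "appointment.booked": on_appointment_booked,
}


def handle_webhook(body: str):
    """Parse the webhook body and run the matching handler, if any."""
    event = json.loads(body)
    handler = HANDLERS.get(event.get("type"))
    if handler is None:
        return None  # unknown event types are ignored rather than crashing
    return handler(event["data"])
```

Adding a new automation then means adding one function and one dictionary entry, without touching the receiving code.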

This real-time responsiveness is what separates a basic chatbot from a true automation pipeline. Reports on the rise of conversational commerce suggest that AI-driven processing can reduce resolution time by up to 75%, turning some processes that used to take weeks into a matter of days. That speed comes from systems talking to each other instantly, not waiting for a human to copy-paste information between tabs.

Security, Privacy, and Compliance Standards

Conversational data is sensitive. People share names, phone numbers, health concerns, and financial details in chat. Your pipeline must protect that information at every stage, from ingestion to storage to analysis.

A staggering 71% of business and technology professionals say their organizations have invested in bots for customer experience support. With that adoption comes serious responsibility. You need encryption in transit (TLS 1.3 minimum) and at rest (AES-256). You need GDPR compliance if you serve EU customers, and SOC 2 readiness if you’re in B2B SaaS. Choose platforms that run on EU-hosted infrastructure with end-to-end encryption if your industry has strict data residency requirements.
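On the in-transit side, here is a small sketch of how a Python service could refuse anything older than TLS 1.3 when it calls out to other systems. It uses the standard library `ssl` module and covers outbound client connections only. Encryption at rest and server-side TLS settings depend on your database and hosting setup and are not shown.

```python
import ssl

# Start from the library's secure defaults (certificate and hostname checks on).
context = ssl.create_default_context()

# Reject any connection that cannot negotiate at least TLS 1.3.
context.minimum_version = ssl.TLSVersion.TLSv1_3
```

You would then pass `context` to whatever client opens the connection, for example `urllib.request.urlopen(url, context=context)`.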

Anonymizing PII in Conversational Logs

Every conversation log is a potential liability if it contains unmasked personally identifiable information. Build PII detection into your pipeline from day one. Automatically redact or mask names, emails, phone numbers, and account IDs before logs hit your analytics database.

Use regex patterns for structured PII like phone numbers and credit card formats. For unstructured PII like names mentioned mid-sentence, NER (Named Entity Recognition) models do the heavy lifting. Store anonymized logs separately from raw data, and set retention policies that match your compliance requirements. This isn’t optional: it’s table stakes.
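For the structured half of that work, a regex pass might look like the sketch below. The patterns are simplified assumptions, not validated detectors: the phone pattern will also catch other long digit runs, and none of this handles names, which still need an NER model.

```python
import re

# Order matters: card numbers are masked before the looser phone pattern runs.
PATTERNS = [
    ("EMAIL", re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")),
    ("CARD", re.compile(r"\b\d(?:[ -]?\d){12,15}\b")),    # 13 to 16 digits
    ("PHONE", re.compile(r"\+?\d[\d\s().-]{7,}\d")),      # rough, format-agnostic
]


def redact(text: str) -> str:
    """Replace each structured PII match with a bracketed label."""
    for label, pattern in PATTERNS:
        text = pattern.sub(f"[{label}]", text)
    return text
```

Run `redact` on each message before the log reaches your analytics database, so the unmasked text stays in the raw store with its own retention policy.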

Continuous Monitoring and Optimization Strategies

Launching your pipeline is step one. Keeping it sharp is the real work. Conversations evolve, customer expectations shift, and your AI will drift if you don’t actively monitor it. The industry is moving toward conversational AI that is predictive, not just reactive, and your monitoring strategy should reflect that forward-looking approach.

Establishing KPIs for Conversational Success

Track these metrics from the start:

- Containment rate: Percentage of conversations resolved without human handoff
- Intent recognition accuracy: How often your NLU correctly identifies what users want
- Average resolution time: From first message to issue resolved
- Customer satisfaction (CSAT): Post-conversation ratings or sentiment analysis
- Fallback rate: How often the bot fails to match an intent

Use median values instead of just averages for resolution time and satisfaction scores. Outliers can skew your data and hide real problems.
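A few of these metrics can be computed from conversation records in a handful of lines. The record fields and the sample numbers below are invented for illustration. The sample includes one slow outlier so you can see how far the mean and median drift apart.

```python
from statistics import mean, median

# Invented sample: three quick conversations and one 95-minute outlier.
conversations = [
    {"handed_off": False, "fallback": False, "resolution_min": 3},
    {"handed_off": False, "fallback": False, "resolution_min": 4},
    {"handed_off": True, "fallback": True, "resolution_min": 95},
    {"handed_off": False, "fallback": False, "resolution_min": 5},
]


def kpis(convs: list) -> dict:
    """Compute containment, fallback rate, and resolution time (mean and median)."""
    n = len(convs)
    times = [c["resolution_min"] for c in convs]
    return {
        "containment_rate": sum(not c["handed_off"] for c in convs) / n,
        "fallback_rate": sum(c["fallback"] for c in convs) / n,
        "mean_resolution_min": mean(times),
        "median_resolution_min": median(times),
    }
```

With this sample the mean resolution time is 26.75 minutes while the median is 4.5, so a single bad conversation makes the average look nearly six times worse than the typical experience.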

Iterative Fine-Tuning Based on User Feedback Loops

Set a weekly cadence for reviewing conversation transcripts. Look for patterns: where do users drop off? What questions stump the bot? Which flows have the highest handoff rates?

Feed these insights back into your pipeline. Update intent training data, refine your RAG knowledge base, and adjust dialogue flows. The businesses that win will be the ones that treat their pipeline as a living system, not a one-time build.
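One simple way to turn a weekly transcript review into a priority list is to rank flows by handoff rate. The transcript fields here (`flow`, `handed_off`) are assumed names, and the sketch ignores sample size, so treat a high rate on a flow with very few conversations with caution.

```python
from collections import Counter


def handoff_rate_by_flow(transcripts: list) -> list:
    """Return (flow, handoff rate) pairs, highest rate first."""
    totals, handoffs = Counter(), Counter()
    for t in transcripts:
        totals[t["flow"]] += 1
        handoffs[t["flow"]] += int(t["handed_off"])
    rates = [(flow, handoffs[flow] / totals[flow]) for flow in totals]
    return sorted(rates, key=lambda pair: pair[1], reverse=True)
```

The flow at the top of the list is usually the best candidate for new training examples or a reworked dialogue path that week.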

Building Your Pipeline: The Path Forward

An AI conversational automation pipeline isn’t a single product you buy off the shelf. It’s a system you design, connect, and continuously improve. The architecture, data layer, intent logic, integrations, security, and monitoring all need to work together.

Start small. Pick your highest-volume customer interaction, automate it well, and expand from there. Wexio’s free tier with 100 operations per month and no credit card required gives you a low-risk starting point to test your first flow.

The businesses that build these systems now won’t just cut costs. They’ll create faster, more consistent customer experiences across every messaging channel. And that’s a competitive advantage that compounds over time.