Enterprise digital infrastructure today runs largely across distributed environments, where a significant portion of traffic originates from APIs, background services, and automated processes. Under these conditions, systems operate against a continuous, high-volume traffic baseline.

Security and reliability issues in this context rarely emerge without warning: observable shifts in traffic behavior typically appear well before any system impact.

Every request that arrives at an application endpoint reflects something about the client that sent it, such as its timing, its origin, and its behavior relative to others. Predictive traffic intelligence is built on the idea that these signals, observed consistently at the point of ingress, can surface early indicators of risk, abuse, or instability long before any downstream system registers a problem.

Why Traffic Behavior Precedes System Impact

Most security and observability tools begin extracting useful data only after a request has already entered the application stack. By then, resources are being consumed, and the window for early intervention has narrowed. Logs and traces are valuable for post-incident analysis, but they describe a system already under stress.

At the traffic layer, however, connection patterns, request pacing, and geographic distribution often begin to diverge from their baselines before application runtimes feel any pressure. That earlier signal is where predictive intelligence can extract the most value.
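To make the idea of a traffic baseline concrete, the sketch below tracks a single signal (for example, requests per second for one endpoint) with an exponentially weighted moving mean and variance. It flags samples whose z-score exceeds a threshold. This is a generic illustration, not Trafficmind's detection logic. The class name `EwmaBaseline` and the default `alpha`, `threshold`, `warmup`, and `min_std` values are assumptions chosen for readability.

```python
import math
from dataclasses import dataclass


@dataclass
class EwmaBaseline:
    """Exponentially weighted baseline for one traffic signal (e.g. requests/sec)."""

    alpha: float = 0.1      # smoothing factor: higher values adapt faster
    threshold: float = 3.0  # |z| above this counts as a divergence
    warmup: int = 10        # samples to learn from before flagging anything
    min_std: float = 1.0    # floor so a very steady signal doesn't divide by ~0
    mean: float = 0.0
    var: float = 0.0
    count: int = 0

    def update(self, value: float) -> bool:
        """Score `value` against the current baseline, then fold it in.

        Returns True when `value` diverges from the baseline by more than
        `threshold` standard deviations (after the warm-up period).
        """
        self.count += 1
        if self.count == 1:
            self.mean = value
            return False
        std = max(math.sqrt(self.var), self.min_std)
        z = (value - self.mean) / std
        divergent = self.count > self.warmup and abs(z) > self.threshold
        # Incremental EWMA update of mean and variance.
        diff = value - self.mean
        incr = self.alpha * diff
        self.mean += incr
        self.var = (1 - self.alpha) * (self.var + diff * incr)
        return divergent


baseline = EwmaBaseline()
for rps in [100, 102, 98, 101, 99, 100, 103, 97, 100, 101, 99, 100]:
    baseline.update(rps)          # steady traffic: nothing flagged
spike_detected = baseline.update(400)
```

A real deployment would keep one baseline per signal and dimension (endpoint, region, ASN, and so on). It would usually also account for daily and weekly seasonality, which a single EWMA does not. It might also skip folding flagged samples into the baseline, so that an ongoing attack does not become the new normal.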

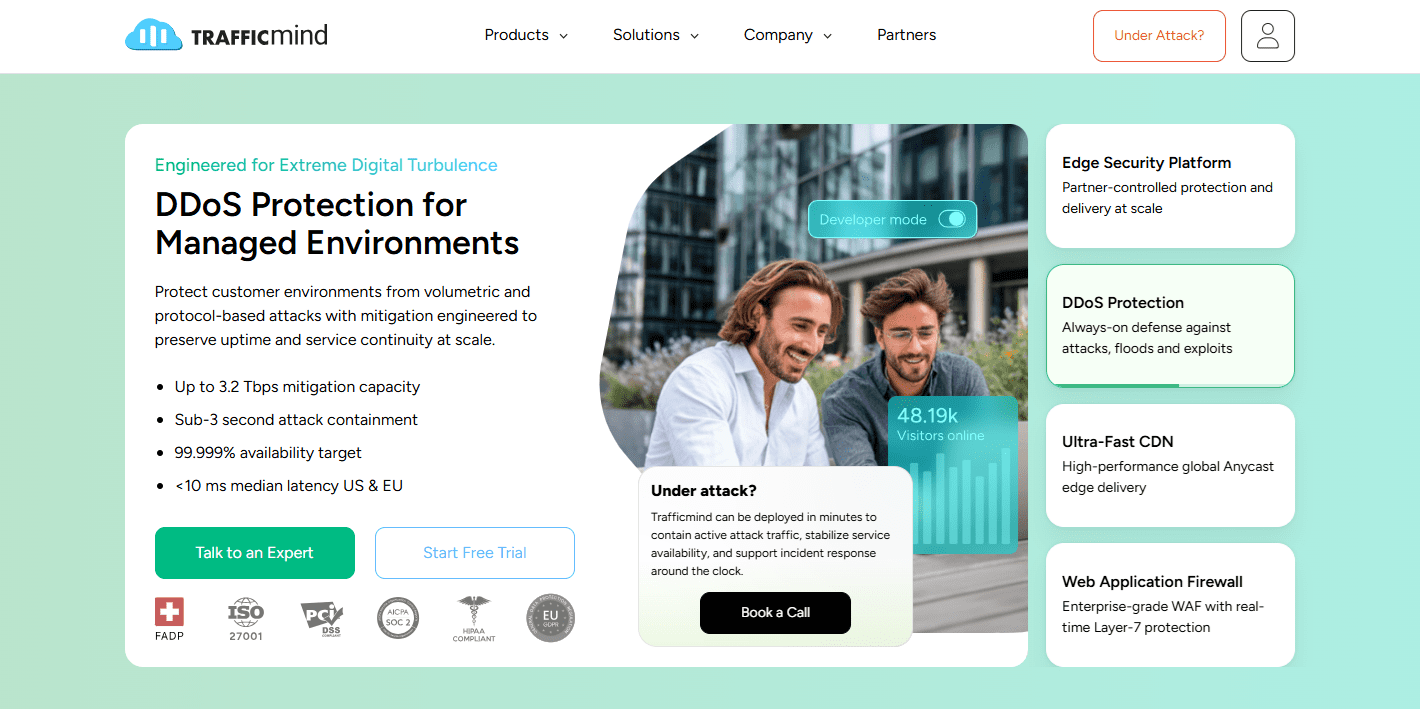

Trafficmind as an Ingress-Centric Security Platform

Trafficmind is built around the principle that traffic should be analyzed and controlled before requests are admitted into the application stack. The edge is not treated as a simple filter. Instead, it operates as a purpose-built, enterprise-grade security network that evaluates traffic before it reaches the kernel, the operating system, or the application layer.

Detection and enforcement happen in-line and in real time, with no client-side challenges and no decisions deferred to centralized scrubbing centers.

Behavioral and Statistical Detection

Trafficmind approaches detection through a combination of Layer 7 HTTP behavior analysis, statistical profiling, and machine learning.

Signatures and static rules are inherently reactive: they identify known patterns but miss attacks that don’t match a prior definition. Rather than relying solely on these mechanisms, the Trafficmind platform builds behavioral models that describe normal traffic patterns for each protected application.

Key elements include:

- Machine learning models that detect anomalies, profile traffic, and classify abuse

- Telemetry ingestion and aggregation built to handle high request volumes

- Real-time and retrospective analysis, enabling both live detection and historical investigation

Application-layer attacks often look syntactically legitimate, so they are frequently detectable only through behavioral signals. That is precisely what Trafficmind’s statistical detection approach is designed to surface, making it particularly well-suited to API-heavy and fintech environments.
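Request pacing is one example of a behavioral factor. Scripted clients often send requests at near-constant intervals, while human-driven traffic is irregular. The hypothetical `PacingProfiler` below measures the coefficient of variation (standard deviation divided by mean) of each client's inter-request gaps. It flags clients whose pacing is unusually regular. This is an assumed, single-feature illustration. Production behavioral models would combine many features rather than rely on one threshold, and the `window`, `min_samples`, and `cv_threshold` defaults are arbitrary.

```python
import statistics
from collections import defaultdict, deque


class PacingProfiler:
    """Hypothetical per-client pacing check: machine-regular gaps look automated."""

    def __init__(self, window: int = 20, min_samples: int = 10, cv_threshold: float = 0.1):
        self.min_samples = min_samples      # gaps needed before judging a client
        self.cv_threshold = cv_threshold    # below this, pacing is "too regular"
        # Keep the last window + 1 timestamps, i.e. the last `window` gaps.
        self._times = defaultdict(lambda: deque(maxlen=window + 1))

    def observe(self, client_id: str, timestamp: float) -> None:
        self._times[client_id].append(timestamp)

    def is_suspicious(self, client_id: str) -> bool:
        ts = list(self._times.get(client_id, ()))
        gaps = [b - a for a, b in zip(ts, ts[1:])]
        if len(gaps) < self.min_samples:
            return False                    # not enough evidence yet
        mean = statistics.fmean(gaps)
        if mean <= 0:
            return True                     # all requests arrived at the same instant
        cv = statistics.pstdev(gaps) / mean
        return cv < self.cv_threshold


profiler = PacingProfiler()
for i in range(15):
    profiler.observe("client-a", i * 0.5)   # one request every 500 ms, exactly
```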

Inline Enforcement with Predictable Outcomes

A detection model is only as useful as the enforcement layer behind it, and Trafficmind treats the two as distinct functions, each operating at the layer best suited to its purpose. The aim is enforcement behavior that stays predictable regardless of traffic conditions.

That separation is reflected in how enforcement is structured:

- Layer 4 volumetric attacks are intercepted at the network interface before the application or operating system is ever involved. Packet headers provide the sole basis for filtering decisions

- Layer 7 application attacks are handled deterministically, with every enforcement decision driven by statistical classification rather than manual rule matching

- Packet handling stays scoped to header inspection throughout, keeping processing overhead minimal and avoiding unnecessary depth of inspection
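A simple per-source SYN budget illustrates the header-only principle. The decision uses only the IPv4 source address, the protocol number, and the TCP flags byte, and never reads the payload. The sketch below is hypothetical and written in Python for readability. Filters that run at the network interface are typically implemented in C against driver-level hooks such as Linux XDP, or in kernel-bypass frameworks such as DPDK. The names, the fixed one-second window, and the 100-SYN default are assumptions, not Trafficmind's configuration.

```python
import socket
from collections import defaultdict

SYN, ACK = 0x02, 0x10  # TCP flag bits (flags byte sits at offset 13 of the TCP header)


def build_tcp_packet(src_ip: str, flags: int) -> bytes:
    """Build a minimal IPv4 + TCP header pair (no payload) for exercising the filter."""
    ip = bytearray(20)
    ip[0] = 0x45                          # version 4, IHL 5 (20-byte header)
    ip[9] = 6                             # protocol 6 = TCP
    ip[12:16] = socket.inet_aton(src_ip)  # source address
    tcp = bytearray(20)
    tcp[13] = flags
    return bytes(ip + tcp)


class SynBudgetFilter:
    """Hypothetical per-source SYN limiter that reads packet headers only."""

    def __init__(self, max_syn_per_sec: int = 100):
        self.max_syn = max_syn_per_sec
        # src -> [window_start, syn_count]; -inf forces a fresh window on first use
        self._windows = defaultdict(lambda: [float("-inf"), 0])

    def allow(self, packet: bytes, now: float) -> bool:
        if len(packet) < 20:
            return False                  # truncated IPv4 header: drop
        if packet[0] >> 4 != 4:
            return True                   # only IPv4 is handled in this sketch
        ihl = (packet[0] & 0x0F) * 4
        if packet[9] != 6:
            return True                   # non-TCP traffic is out of scope here
        if len(packet) < ihl + 14:
            return False                  # truncated TCP header: drop
        flags = packet[ihl + 13]
        if not (flags & SYN) or (flags & ACK):
            return True                   # only bare SYNs count against the budget
        src = socket.inet_ntoa(packet[12:16])
        window = self._windows[src]
        if now - window[0] >= 1.0:        # start a new one-second window
            window[0], window[1] = now, 0
        window[1] += 1
        return window[1] <= self.max_syn
```

A production filter would also have to deal with IPv6, IP options, and fragmentation, all of which this sketch skips.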

Because filtering happens before traffic reaches the kernel and user space, mitigation activity places little or no load on the operating system and does not compete with the application for resources. Legitimate traffic passes through without encountering challenges, CAPTCHAs, or any other form of client-side friction, and its path to the application is unaffected regardless of what mitigation is running in parallel.

Since mitigation and delivery don’t compete, the operational outcome is a system that performs consistently under load.

Web Application Firewall and Application Context

Generic firewall rules create a familiar problem: they block too much or too little, because they have no awareness of the application they are protecting. Trafficmind’s custom Web Application Firewall (WAF) is designed around application and API context, with enforcement shaped by business logic rather than fixed patterns.

This translates into three practical capabilities:

- WAF rules that reflect how the application actually behaves, not just how attacks are generically defined

- DDoS mitigation logic calibrated to application-specific traffic patterns

- Continuous rule tuning as the application evolves

This results in tighter enforcement with a lower false positive rate.
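To illustrate the difference between generic patterns and application context, the sketch below expresses WAF rules as a positive model of each endpoint. The model records which methods the endpoint accepts, which parameters it takes, what shape their values have, and how large a request body should be. Anything outside that model is rejected with a reason. The endpoints, patterns, and limits are hypothetical examples for an imagined payments API, not Trafficmind rule syntax.

```python
import re
from dataclasses import dataclass, field


@dataclass
class EndpointPolicy:
    methods: set[str]                                            # methods this endpoint actually uses
    params: dict[str, re.Pattern] = field(default_factory=dict)  # name -> allowed value shape
    max_body_bytes: int = 4096


# Hypothetical policies, derived from how an example payments API is really called.
POLICIES = {
    "/api/v1/transfers": EndpointPolicy(
        methods={"POST"},
        params={"amount": re.compile(r"\d{1,9}(\.\d{2})?"), "currency": re.compile(r"[A-Z]{3}")},
        max_body_bytes=2048,
    ),
    "/api/v1/accounts": EndpointPolicy(methods={"GET"}, params={"page": re.compile(r"\d{1,4}")}),
}


def evaluate(path: str, method: str, params: dict[str, str], body_len: int) -> tuple[bool, str]:
    """Return (allowed, reason) by checking the request against its endpoint's model."""
    policy = POLICIES.get(path)
    if policy is None:
        return False, "unknown endpoint"
    if method not in policy.methods:
        return False, f"method {method} not used by this endpoint"
    if body_len > policy.max_body_bytes:
        return False, "body exceeds endpoint limit"
    for name, value in params.items():
        pattern = policy.params.get(name)
        if pattern is None:
            return False, f"unexpected parameter {name!r}"
        if not pattern.fullmatch(value):
            return False, f"parameter {name!r} has unexpected shape"
    return True, "ok"
```

Because the model describes legitimate use rather than known attacks, an injection attempt such as `amount=1 OR 1=1` is rejected for not looking like an amount. No signature for that particular payload is needed.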

Real-Time Telemetry with Deterministic Log Access

Trafficmind retains detailed traffic data at scale, in a form that supports direct analysis without the heavy aggregation that strips away context and makes forensic work difficult.

Several capabilities support this:

- Real-time telemetry streams for live visibility into traffic behavior

- Historical queries over retained data, enabling retrospective investigation

- Fully customizable sampling logic, configurable per field, signal, or time window

- Full log export as customer-owned datasets
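Per-field sampling can be sketched as a set of rules that map a field value to a sample rate. A deterministic hash of the request ID then decides whether an event is kept, so the same event always gets the same decision across replicas and reprocessing runs. The `SamplingConfig` structure, the `request_id` field, and the highest-rate-wins policy are assumptions made for this illustration. Time-window rules could be expressed the same way over a derived field such as the hour of day.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class SamplingConfig:
    default_rate: float = 0.01
    # (field, value) -> sample rate; when several rules match, the highest rate wins
    rules: dict[tuple[str, str], float] = field(default_factory=dict)


def keep(event: dict, config: SamplingConfig) -> bool:
    """Decide deterministically whether to retain `event` under `config`."""
    rate = config.default_rate
    for (name, value), r in config.rules.items():
        if name in event and str(event[name]) == value:
            rate = max(rate, r)
    if rate >= 1.0:
        return True
    if rate <= 0.0:
        return False
    # Map the request ID to a stable point in [0, 1); the same ID always lands in the same spot.
    digest = hashlib.sha256(str(event["request_id"]).encode()).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return bucket < rate


# Example: keep every blocked request and every 500 response, a quarter of
# login traffic, and 1% of everything else.
EXAMPLE = SamplingConfig(
    default_rate=0.01,
    rules={("action", "block"): 1.0, ("status", "500"): 1.0, ("path", "/login"): 0.25},
)
```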

Customers are not dependent on pre-built dashboards or filtered views; every recorded event can be queried directly. This simplifies forensic analysis, regulatory compliance work, and internal ML training.

Trafficmind operates under the Swiss Federal Act on Data Protection (FADP), which applies strict privacy safeguards to all retained data. For regulated financial institutions, this deterministic logging and full data ownership approach simplifies audit readiness and regulatory reporting.

Network Design Optimized for Security Under Load

Trafficmind’s network is built to keep security enforcement stable and predictable at scale, without CDN latency becoming a limiting factor. Its key design characteristics include:

- Latency under 10 ms across major regions in North America and Europe

- Capacity planned around enterprise production workloads

- Controlled, commercial-only traffic composition

This keeps mitigation, inspection, and delivery from competing for the same resources.

Business Model as a Technical Constraint

Trafficmind operates as a fully commercial, enterprise-focused service, with capacity planned and priced exclusively for paid usage. There are no cross-subsidies, nor are there experience-degrading controls used to manage load. From an architectural standpoint, this translates directly into deterministic performance and consistent enforcement policies.

Implications for Technology, Fintech, and Finance

The relevance of predictive traffic intelligence varies by industry, but its value is most pronounced where availability and data integrity carry regulatory weight.

For instance, in technology and SaaS platforms, early detection of abusive or unstable traffic patterns reduces operational risk.

For fintech and financial services, this means:

- Early detection of credential abuse and enumeration attacks

- Stable API performance during periods of anomalous traffic conditions

- Alignment with privacy, compliance, and data minimization requirements
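Enumeration attacks often show up as a single source trying many distinct usernames in a short period. The hypothetical detector below keeps a sliding window of failed login attempts per source and flags a source once the number of distinct usernames in the window exceeds a limit. The window length and limit are assumed values, and timestamps are assumed to arrive in non-decreasing order. Credential stuffing is frequently spread across many IP addresses, so a real system would also aggregate by other keys such as network, ASN, or client fingerprint.

```python
from collections import defaultdict, deque


class EnumerationDetector:
    """Hypothetical detector: too many distinct usernames per source in a sliding window."""

    def __init__(self, window_secs: float = 300.0, max_distinct_users: int = 20):
        self.window = window_secs
        self.max_distinct = max_distinct_users
        self._attempts = defaultdict(deque)  # source -> deque of (timestamp, username)

    def record_failure(self, source: str, username: str, ts: float) -> bool:
        """Record a failed login; return True if `source` now looks like enumeration."""
        attempts = self._attempts[source]
        attempts.append((ts, username))
        # Evict attempts that have fallen out of the window.
        while attempts and ts - attempts[0][0] > self.window:
            attempts.popleft()
        distinct = {user for _, user in attempts}
        return len(distinct) > self.max_distinct
```

The same user failing repeatedly (a forgotten password) never trips this check. One source cycling through twenty-plus usernames in a few seconds does.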

Because the approach relies on behavioral statistics rather than packet contents, it remains effective against encrypted traffic, where payload inspection is not possible, and it avoids the data-handling concerns that complicate deep inspection in regulated environments.
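As a concrete example of this point, useful behavioral features can be computed from packet metadata alone (sizes, directions, and timing) without touching payload bytes. The `PacketMeta` type and the feature set below are an assumed, minimal example of metadata-only profiling. They do not describe Trafficmind's feature pipeline.

```python
import statistics
from dataclasses import dataclass


@dataclass(frozen=True)
class PacketMeta:
    ts: float       # arrival time in seconds
    size: int       # bytes on the wire (headers plus encrypted payload)
    outbound: bool  # True for client -> server


def flow_features(packets: list[PacketMeta]) -> dict[str, float]:
    """Summarize an encrypted flow using only metadata, never payload bytes."""
    if len(packets) < 2:
        raise ValueError("need at least two packets")
    pkts = sorted(packets, key=lambda p: p.ts)
    gaps = [b.ts - a.ts for a, b in zip(pkts, pkts[1:])]
    sizes = [p.size for p in pkts]
    up = sum(p.size for p in pkts if p.outbound)
    down = sum(p.size for p in pkts if not p.outbound)
    duration = pkts[-1].ts - pkts[0].ts
    return {
        "packets": float(len(pkts)),
        "duration_s": duration,
        "mean_size": statistics.fmean(sizes),
        "size_stdev": statistics.pstdev(sizes),
        "mean_gap_s": statistics.fmean(gaps),
        "up_down_ratio": up / down if down else float("inf"),
        "packets_per_s": len(pkts) / duration if duration > 0 else float("inf"),
    }
```

Features like these can feed the same baseline and anomaly logic used for plaintext signals, because none of them depend on decrypting the traffic.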

Closing Perspective

Predictive traffic intelligence shifts the security posture from reactive to anticipatory. Rather than waiting for systems to signal distress, it treats observable changes in traffic as the first line of evidence.

In banking, payments, and capital markets infrastructure, where availability and explainability carry regulatory weight, that earlier visibility becomes a foundational control.