On February 27, 2026, PointFive released DeepWaste™ AI, an agentless optimization module designed to continuously improve efficiency across LLM services, GPU infrastructure, and AI data platforms. The launch is aimed at production AI teams that are discovering an uncomfortable truth: as systems scale, inefficiency becomes systemic, not localized.

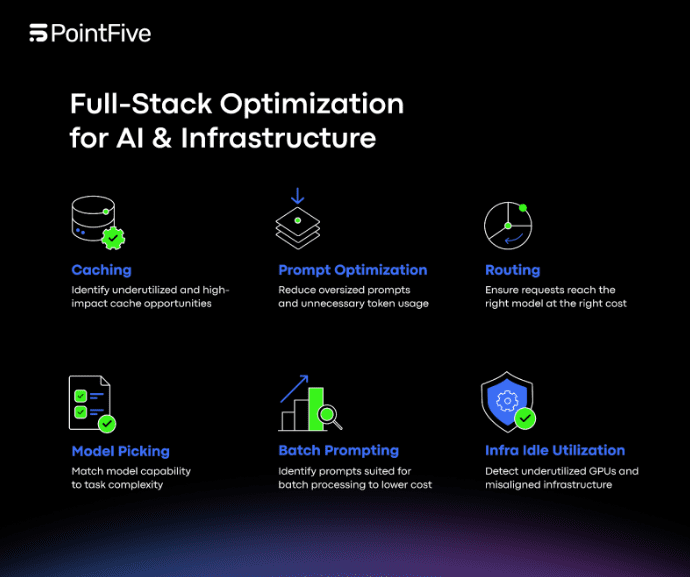

PointFive argues that production AI cost and performance depend on more than any single infrastructure decision. Model selection, token consumption, routing logic, caching behavior, GPU utilization, retry patterns, and data platform orchestration all contribute. And because these layers interact, optimizing one piece in isolation often fails to address the real drivers of waste.

Where DeepWaste AI Fits in the Stack

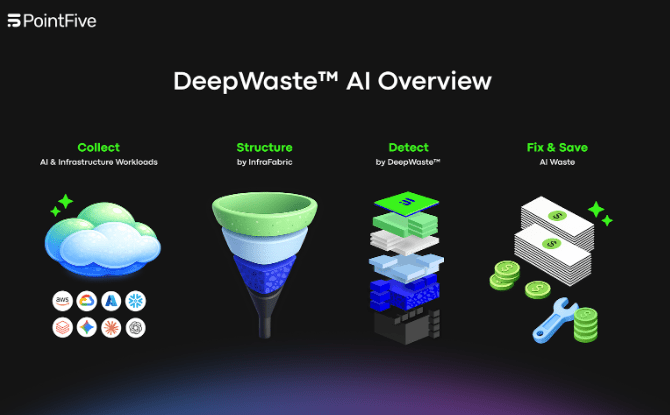

DeepWaste AI is positioned as an optimization layer that sits across the AI execution stack. Instead of requiring teams to instrument workloads or deploy agents, PointFive says the module connects agentlessly to the sources that describe AI behavior: cloud APIs, LLM service metrics, GPU telemetry, and billing systems.

The promise is operational: fewer moving parts to deploy, less risk of performance impact from instrumentation, and faster time-to-visibility, particularly for teams that already have complex pipelines and governance requirements.

Multi-Cloud and Direct API Connectivity

DeepWaste AI provides native connectivity across:

- AWS (Bedrock, SageMaker, and managed AI services)

- Azure (Azure OpenAI, Azure ML, Cognitive Services)

- GCP (Vertex AI and AI services)

- OpenAI and Anthropic direct APIs

This matters for platform teams because production AI rarely stays neatly within one boundary. Some teams consume managed services for speed and compliance; others use direct APIs for flexibility; many do both. Consistent optimization requires seeing the signals across these environments, not just within a single console.
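To make the cross-provider point concrete, here is a minimal sketch of normalizing token usage from two direct APIs into one record shape. It is illustrative only and does not describe how DeepWaste AI works internally; the `Usage` type and `normalize` function are hypothetical names. The source field names are the ones the OpenAI Chat Completions and Anthropic Messages APIs report in their `usage` objects.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Usage:
    """Hypothetical common record for token usage, regardless of provider."""
    provider: str
    input_tokens: int
    output_tokens: int


# provider -> (input key, output key) in that provider's `usage` payload
_FIELDS = {
    "openai": ("prompt_tokens", "completion_tokens"),
    "anthropic": ("input_tokens", "output_tokens"),
}


def normalize(provider: str, usage: dict) -> Usage:
    """Translate a provider-specific usage dict into the common Usage record."""
    try:
        in_key, out_key = _FIELDS[provider]
    except KeyError:
        raise ValueError(f"unsupported provider: {provider}") from None
    return Usage(provider, int(usage[in_key]), int(usage[out_key]))


records = [
    normalize("openai", {"prompt_tokens": 120, "completion_tokens": 40}),
    normalize("anthropic", {"input_tokens": 300, "output_tokens": 75}),
]
total_input = sum(r.input_tokens for r in records)
```

Once every source is reduced to the same shape, totals and comparisons no longer depend on which console or API the traffic went through.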

GPU Efficiency as a First-Class Target

PointFive highlights GPU optimization as a core component, not an add-on. DeepWaste AI continuously identifies underutilized or idle GPUs, instance-type mismatches, OS and driver misconfigurations, and hardware-to-workload misalignment. These are platform-level issues that can persist unnoticed when teams focus only on application behavior or billing summaries.

In production settings, GPU fleets can be overprovisioned to meet peak demand, then left unchanged as usage patterns evolve. Instance types may not match the workload profile. Driver or OS misconfigurations can limit throughput. And hardware can be misaligned with the tasks it serves. DeepWaste AI is intended to flag those patterns as operational leakage that affects both cost and performance.
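As a rough illustration of what flagging idle or underutilized GPUs can look like, the sketch below averages utilization samples per GPU and applies two thresholds. This is not PointFive's detection logic: the `GpuSample` type, the `flag_underutilized` function, and the 5% and 30% thresholds are all assumptions chosen for the example, and the samples are assumed to have been collected already.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class GpuSample:
    gpu_id: str
    utilization_pct: float  # 0-100, one reading from a telemetry source


def flag_underutilized(samples, idle_below=5.0, low_below=30.0):
    """Return {gpu_id: label} for GPUs whose average utilization is low.

    Thresholds are illustrative assumptions, not vendor guidance.
    """
    by_gpu = {}
    for s in samples:
        by_gpu.setdefault(s.gpu_id, []).append(s.utilization_pct)

    findings = {}
    for gpu_id, values in by_gpu.items():
        avg = mean(values)
        if avg < idle_below:
            findings[gpu_id] = "idle"
        elif avg < low_below:
            findings[gpu_id] = "underutilized"
    return findings


samples = [
    GpuSample("gpu-a", 0.0), GpuSample("gpu-a", 2.0),
    GpuSample("gpu-b", 20.0), GpuSample("gpu-b", 25.0),
    GpuSample("gpu-c", 90.0), GpuSample("gpu-c", 85.0),
]
flags = flag_underutilized(samples)
```

A real check would also need a time window long enough to avoid flagging GPUs that are only briefly quiet between peaks.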

Data Platforms: Extending Beyond Inference

DeepWaste AI also extends optimization across AI data platforms through native support for Snowflake and Databricks, providing coverage from data ingestion through inference. PointFive frames this as necessary for “full-stack” optimization: upstream orchestration and data processing decisions can determine how often inference happens, how work is batched, and how workloads behave under load.

For platform teams, the implication is that optimizing AI is not only about the model endpoint but also about the pipelines that feed it.

Privacy-Preserving Defaults and Optional Depth

DeepWaste AI is designed to run by default using metadata, billing signals, performance metrics, and resource configuration data, without requiring access to raw inference logs. PointFive positions this as privacy-preserving and aligned with organizations that want to minimize data access requirements.

At the same time, the module supports optional inference-level analysis for organizations that choose to go deeper, enabling evaluation of prompt architecture and orchestration logic. Customers control the depth of analysis, which can help align the product’s operation with internal policy and governance constraints.

Four Layers of Detection With Actionable Outputs

DeepWaste AI structures and enriches every invocation with task classification, routing context, cost attribution, and infrastructure alignment signals. It detects inefficiency across four layers: model/routing intelligence; token/prompt economics; caching/reuse optimization; and infrastructure/operational leakage. Examples include model-task mismatch and downgrade opportunities; prompt bloat and context window overprovisioning; duplicate inference and cache miss inefficiencies; and retry-driven cost inflation and latency outliers.
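Two of these examples, duplicate inference and retry-driven cost inflation, are simple enough to sketch. The code below is a hypothetical illustration rather than the product's method: the `Invocation` record, its `prompt_hash` and `attempt` fields, and both functions are assumptions. It treats a repeated (model, prompt hash) pair as a call a cache could have served, and counts retries separately so the same spend is not attributed twice.

```python
from dataclasses import dataclass


@dataclass
class Invocation:
    model: str
    prompt_hash: str   # assumed: a stable hash of the prompt, not the raw text
    cost_usd: float
    attempt: int = 1   # 1 = first try, >1 = retry of the same request


def duplicate_cost(invocations):
    """Sum the cost of first-attempt calls whose (model, prompt_hash) was seen before."""
    seen = set()
    wasted = 0.0
    for inv in invocations:
        if inv.attempt > 1:
            continue  # retries are accounted for in retry_cost
        key = (inv.model, inv.prompt_hash)
        if key in seen:
            wasted += inv.cost_usd
        else:
            seen.add(key)
    return wasted


def retry_cost(invocations):
    """Sum the cost of every call that was a retry."""
    return sum(inv.cost_usd for inv in invocations if inv.attempt > 1)


log = [
    Invocation("model-x", "h1", 0.5),
    Invocation("model-x", "h1", 0.5),             # exact repeat
    Invocation("model-x", "h2", 0.25),
    Invocation("model-x", "h2", 0.25, attempt=2), # retry
]
dup = duplicate_cost(log)
ret = retry_cost(log)
```

Exact-match hashing only catches identical requests; near-duplicate prompts would need a different technique.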

Each finding includes a quantified savings estimate and implementation guidance, prioritized by financial impact and mapped directly to engineering and FinOps workflows. PointFive’s goal is to move teams from detection to remediation with measurable, trackable results over time.
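Prioritizing by financial impact amounts to ranking findings by their savings estimate. The sketch below shows one way that could look; the `Finding` fields, the sample findings, and the dollar figures are invented for illustration and are not taken from PointFive.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    title: str
    layer: str                  # one of the four detection layers
    monthly_savings_usd: float  # hypothetical quantified estimate
    guidance: str               # hypothetical implementation guidance


def prioritize(findings):
    """Order findings from largest to smallest estimated savings."""
    return sorted(findings, key=lambda f: f.monthly_savings_usd, reverse=True)


# Sample data is made up for the example.
findings = [
    Finding("Prompt bloat in summarizer", "token/prompt economics", 1200.0,
            "Trim unused context from the system prompt"),
    Finding("Idle GPU node pool", "infrastructure/operational leakage", 8400.0,
            "Scale the pool down outside peak hours"),
    Finding("Repeated identical requests", "caching/reuse optimization", 3100.0,
            "Cache responses for identical requests"),
]

ranked = prioritize(findings)
total_savings = sum(f.monthly_savings_usd for f in ranked)
```

Keeping the layer and guidance on each record is what lets a ranked list be handed to both engineering and FinOps without further translation.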

The Operational Shift in AI Workloads

“AI workloads introduce a new category of operational complexity,” said Alon Arvatz, CEO of PointFive. “DeepWaste AI gives organizations the intelligence required to scale AI efficiently, across models, infrastructure, and data platforms, without sacrificing control.”

DeepWaste AI is now available to PointFive customers.