Navigating the Rise of Shadow AI in the Workplace

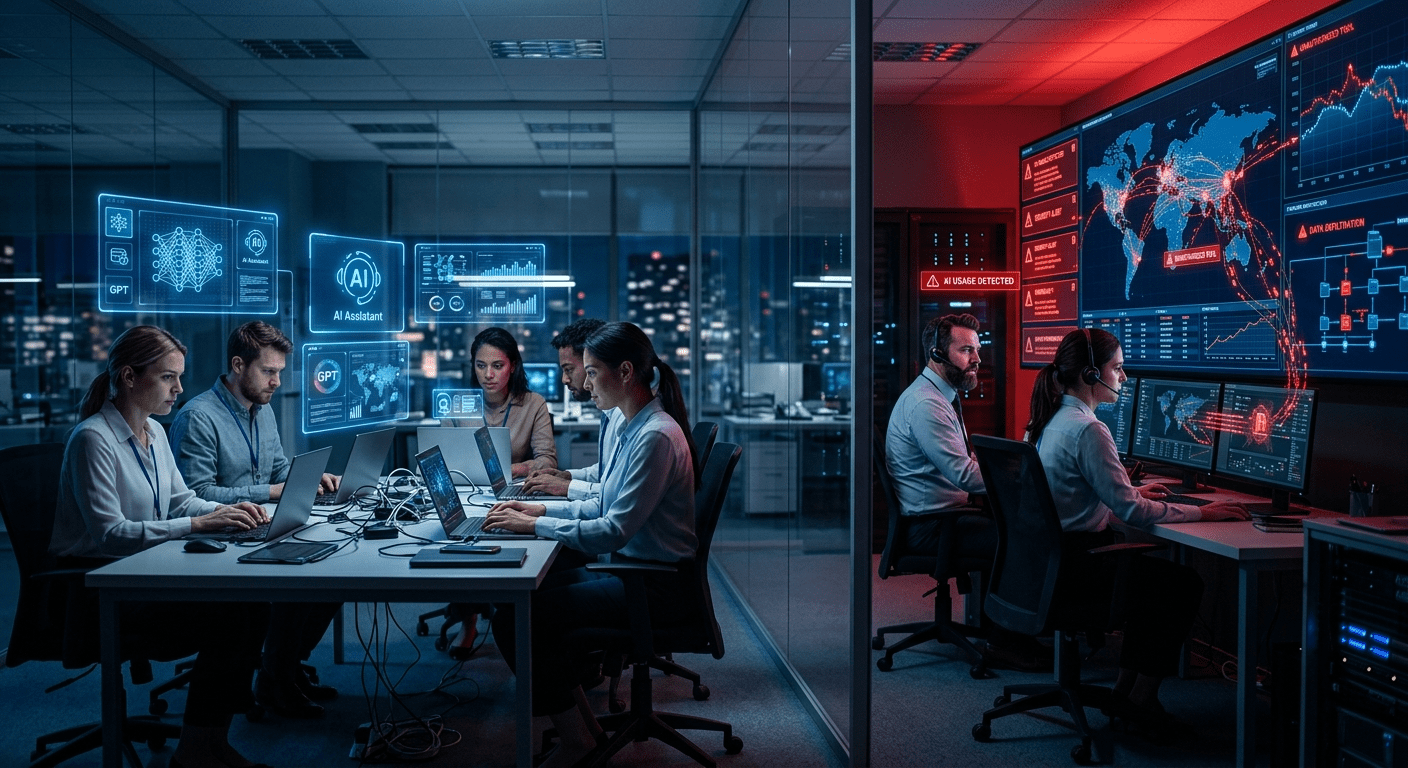

Artificial intelligence tools have become ubiquitous within businesses, accelerating workflows and enabling innovation across departments. Across industries, AI-driven applications are transforming how teams collaborate, analyze data, and deliver value. However, alongside sanctioned AI deployments, an increasing number of employees are adopting unauthorized AI applications, often referred to as shadow AI, to accomplish their tasks. This surreptitious use of AI tools poses a unique challenge for IT leaders and executives: how to regulate these technologies without stifling the very innovation they enable.

Shadow AI refers to AI-powered software and platforms that employees use without formal approval or oversight from their organization’s IT or security teams. These can range from generative AI chatbots and code assistants to automated data analysis tools. A Gartner study projected that by 2024 at least 30% of large enterprises would experience shadow AI incidents, which can lead to data leaks, compliance violations, and operational risks. The proliferation of shadow AI is fueled by the ease of access to AI tools, many of which require minimal setup and offer immediate productivity gains. Employees often turn to these solutions to bypass cumbersome approval processes or to address gaps in official workflows.

Addressing this balancing act demands a nuanced approach. Organizations cannot simply ban all unapproved AI tools without risking employee dissatisfaction and reduced agility. Instead, they must implement strategies that incorporate shadow AI into their governance frameworks. Companies like HelpMePCS are pioneering solutions that help firms manage AI adoption by providing visibility into employee usage patterns and integrating compliance checks seamlessly. These solutions enable organizations to stay ahead of potential risks while supporting innovation at the grassroots level.

Understanding the Risks and Opportunities of Employee AI Tools

The unauthorized use of AI tools can expose companies to various risks. Data security is paramount; when employees input sensitive corporate information into third-party AI platforms, they may inadvertently expose confidential data. According to IBM’s Cost of a Data Breach Report 2023, the average cost of a data breach is $4.45 million, underscoring the financial stakes organizations face if shadow AI leads to leaks. Beyond direct financial losses, data breaches can damage brand reputation and customer trust, often with long-lasting effects.

Moreover, shadow AI can create compliance headaches. Many industries operate under strict regulatory frameworks such as GDPR, HIPAA, or SOX. Using AI tools that have not undergone proper vetting may result in noncompliance, fines, or reputational damage. For example, if an employee uses an unapproved AI tool to process personal data without adequate safeguards, the organization may face severe penalties. At the same time, the agility and innovation these AI tools provide can significantly boost productivity and competitive advantage. A McKinsey survey found that 63% of early AI adopters reported substantial improvements in business outcomes, highlighting the upside of AI empowerment.

A key to resolving this paradox lies in fostering informed autonomy. IT departments, often seen as gatekeepers, should transition toward being enablers, providing employees with approved AI toolkits and clear guidelines on usage. This approach aligns with insights from T3 MSP, which emphasizes proactive managed service solutions that blend security with user empowerment. This shift not only mitigates risks but also encourages responsible innovation and collaboration between technical teams and end users.

Crafting a Balanced AI Governance Framework

To effectively regulate employee AI tool usage, organizations should develop a comprehensive governance framework that incorporates the following core components:

- Visibility and Monitoring: Implement tools that identify which AI applications are being used across the enterprise. Employing AI usage analytics enables IT to detect shadow tools and assess their risk profiles without intrusive policing. This visibility helps organizations understand usage patterns and identify potential vulnerabilities early.

- Risk Assessment: Evaluate the security, privacy, and compliance implications of employee AI tools. Prioritize mitigation strategies based on potential impact. Risk assessments should be continuous, adapting to new AI technologies and evolving threat landscapes.

- Policy Development: Create clear policies that define acceptable AI tool usage, data handling procedures, and reporting mechanisms for unapproved applications. Policies should be communicated clearly and updated regularly to keep pace with technological advances.

- Education and Training: Offer ongoing employee training programs to raise awareness about the risks and benefits of AI tools, emphasizing responsible usage. Training empowers employees to make informed decisions and fosters a culture of accountability.

- Integration with IT and Security Teams: Facilitate collaboration between IT, security, legal, and business units to ensure AI governance aligns with organizational objectives. Cross-functional teams can better balance innovation goals with risk management.
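
The visibility and risk assessment components above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production monitoring tool: the log format (one `user url` pair per line), the domain names, the approved list, and the 1-to-5 risk scores are all hypothetical. It counts requests per tool rather than per person, in keeping with the goal of detection without intrusive policing.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical catalog of known AI services: domain -> (category, risk score 1-5).
# Real deployments would maintain this list from their own vetting process.
AI_TOOL_CATALOG = {
    "chat.example-ai.com": ("generative chatbot", 4),
    "code.example-assist.dev": ("code assistant", 3),
    "insights.example-analytics.io": ("data analysis", 5),
}

# Hypothetical set of tools already approved by IT.
APPROVED = {"code.example-assist.dev"}


def find_shadow_ai(log_lines):
    """Count requests to cataloged AI domains that are not on the approved list."""
    hits = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) < 2:
            continue  # skip malformed lines
        host = urlparse(parts[1]).hostname
        if host in AI_TOOL_CATALOG and host not in APPROVED:
            hits[host] += 1  # aggregate per tool; the user field is ignored
    return hits


def prioritize(hits):
    """Rank unapproved tools by (risk score x request count), highest first."""
    ranked = []
    for host, count in hits.items():
        category, risk = AI_TOOL_CATALOG[host]
        ranked.append((host, category, risk * count))
    return sorted(ranked, key=lambda row: row[2], reverse=True)


if __name__ == "__main__":
    sample_logs = [
        "alice https://chat.example-ai.com/v1/chat",
        "bob https://chat.example-ai.com/v1/chat",
        "carol https://insights.example-analytics.io/upload",
        "dave https://code.example-assist.dev/complete",
    ]
    for host, category, score in prioritize(find_shadow_ai(sample_logs)):
        print(f"{host} ({category}): priority {score}")
```

Multiplying a static risk score by request volume is only one possible way to prioritize. An organization might instead weight by data sensitivity or regulatory exposure, as its own risk assessment dictates.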

These measures contribute to a sustainable balance where innovation thrives under a managed risk environment. A 2023 Deloitte survey indicates that 73% of organizations with mature AI governance frameworks report improved innovation outcomes alongside reduced security incidents. This data highlights that effective governance does not hinder progress but rather supports it.

Encouraging a Culture of Responsible Innovation

Regulation need not be synonymous with restriction. Cultivating a culture that embraces responsible AI innovation can transform shadow AI from a threat into an asset. Leadership plays a vital role in setting the tone by encouraging experimentation within defined guardrails and recognizing teams that leverage AI effectively and securely. When employees feel trusted and supported, they are more likely to adhere to policies and contribute ideas for improvement.

Organizations should also consider establishing AI innovation labs or centers of excellence where vetted tools can be tested and scaled. This controlled environment allows for rapid iteration while maintaining compliance oversight. Through constructive dialogue and feedback loops between end-users and governance teams, policies can evolve in step with technological advancements. Such initiatives foster collaboration and reduce the impulse for covert AI adoption.

Furthermore, communicating openly about AI risks and governance initiatives makes employees less likely to turn to unsanctioned tools out of frustration or for lack of alternatives. Regular updates, open forums, and accessible resources help demystify AI governance and build trust across the organization.

The Role of Technology Partners in Managing Shadow AI

Technology partners and managed service providers (MSPs) are increasingly critical in helping organizations navigate the shadow AI landscape. Expert MSPs bring specialized knowledge in AI security, compliance, and user behavior analytics, offering tailored solutions that adapt as the AI ecosystem evolves. They can assist in deploying monitoring tools, conducting risk assessments, and delivering employee training programs.

Innovative solutions from such partners offer pathways for companies to stay ahead of shadow AI risks while empowering their workforce. By combining technical controls, policy frameworks, and a culture of responsible innovation, businesses can harness the full potential of AI without compromising security or compliance. These partnerships allow organizations to scale their AI governance efforts efficiently, freeing internal teams to focus on strategic initiatives.

Looking Ahead: The Future of AI Tool Management

As AI technologies continue to advance, the shadow AI phenomenon will likely intensify. Emerging trends such as generative AI, natural language processing, and AI-powered automation present both new opportunities and challenges for enterprises. The organizations that succeed will be those that proactively embrace adaptive governance frameworks, grounded in trust and continuous improvement.

The rapid pace of AI innovation means governance frameworks must be agile, incorporating feedback and data-driven insights to remain effective. Additionally, as AI becomes embedded in more business processes, transparency and explainability will be crucial to maintain regulatory compliance and user confidence.

Industry collaboration and standard-setting bodies will also play a role in shaping best practices for AI governance. Sharing knowledge and experiences can help organizations avoid common pitfalls and accelerate the development of robust frameworks.

In conclusion, the shadow AI crisis is not an insurmountable problem but a call to action. Effective regulation of employee tool usage requires a shift from reactive enforcement to proactive enablement, balancing risk with opportunity to fuel sustainable innovation in the AI era. By embracing transparency, fostering responsible use, and leveraging expert partnerships, organizations can turn shadow AI from a covert challenge into a catalyst for growth.