By 2026, AI agents will be embedded inside business apps and capable of completing real tasks when they operate with the right permissions and controls. Gartner’s forecast signals a sharp shift: 40% of enterprise applications are expected to include task-specific AI agents by the end of 2026, up from less than 5% in 2025.

This momentum is already reshaping leadership priorities. The focus is moving toward whether agents can take safe action across CRM, ERP, ticketing, and finance workflows without introducing operational risk. The advantage will go to teams that ship bounded agents with clear scope, least-privilege access, approvals for high-impact actions, and audit-ready logging, so automation scales without eroding trust.

What an “AI agent” actually is (and isn’t) in enterprise apps?

In enterprise apps, an AI agent is a system that can plan steps, use approved tools, and complete actions inside business software.

It can pull context from trusted sources, decide the next best step, and then create a ticket, update a CRM record, trigger a workflow, or draft an outbound message, often with a human approval checkpoint for higher-impact actions.

In production, the agent functions like a governed workflow operator. It has an assigned identity, follows defined policies, uses only approved tools, and records each decision and tool action in an audit trail. That focus on control is also shaping how platforms evolve.

Microsoft, for example, has highlighted multi-agent orchestration and management controls as part of scaling agents safely across an organisation.

The 5 agent use cases leaders are implementing first

These early wins share a pattern: clear triggers, bounded actions, and controlled write-back into core systems (so the agent does work, not just talk).

- IT service + employee support (ticket deflection + resolution)

Employee asks for help in Teams/Slack → Action: agent gathers context, proposes fix, creates/updates ticket only when needed → Systems: ITSM + knowledge base → Guardrail: approvals for privileged actions, full audit trail. Salesforce is explicitly positioning specialized agents for IT service workflows as part of its “agentic enterprise” framing.

- Customer support operations (faster case handling)

New case arrives → Action: summarise history, draft reply, recommend next step, update CRM fields → Systems: CRM + helpdesk → Guardrail: human approval before sending/closing.

- Sales and RevOps (account research → next-best actions)

Upcoming meeting / pipeline change → Action: pull account signals, generate call plan, log outcomes, schedule follow-ups → Systems: CRM + email/calendar → Guardrail: write-back limited to specific fields; auto-actions only for low-risk steps.

- Finance operations (exceptions + reconciliations)

Invoice mismatch / unusual expense → Action: collect evidence, classify exception, route for approval, prepare audit notes → Systems: ERP + document store → Guardrail: no payments/credits without explicit approval.

- Procurement + vendor risk (intake automation)

New vendor request → Action: check required docs, flag gaps, route to legal/security, create tasks → Systems: procurement + GRC/ticketing → Guardrail: policy checks + traceability for every decision.

The common thread: the agent’s “autonomy” is scoped to a job, not the whole business.

The “production pattern” that separates pilots from real deployment

Most agent pilots feel impressed because they talk. Production agents succeed because they can act safely, and that requires a predictable pattern teams can operate, audit, and scale.

A practical production blueprint looks like this:

- Orchestrator layer (the “brain + rules”): routes requests, enforces policies (what the agent is allowed to do), and decides when to ask for human approval.

- Tool layer (the “hands”): approved actions only, create/update tickets, update CRM fields, trigger workflows, generate documents. Keep tools narrow (single-purpose) so failures are contained.

- Context layer (the “memory”): trusted enterprise data (KB, docs, customer history) with permission-aware retrieval, no broad data dumping.

- Identity + access (the “badge”): the agent should have least-privilege access and clear ownership. Microsoft’s Entra Agent ID concept reflects this direction, assigning an identity to agents so security teams get visibility and control.

- Observability + evaluation (the “black box recorder”): logs, traces, tool calls, human overrides, and quality checks so you can diagnose issues and improve.

This is also why platforms are emphasizing multi-agent orchestration with human oversight: teams need agent “workgroups,” but still require clear control points.

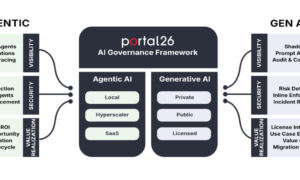

Risk is the real 2026 bottleneck (security, data, and “action safety”)

Agents introduce a different risk profile than chatbots because they don’t just respond, they can call tools and change systems.

That’s why most “agent in production” failures aren’t model-quality problems; they’re control problems: the agent writes to the wrong record, escalates a workflow incorrectly, leaks sensitive context, or gets manipulated into unsafe actions.

The highest-impact risks usually cluster into five areas:

- Prompt injection → unsafe tool use (the agent follows malicious instructions hidden in content)

- Over-permissioned access (agent can see or update more than it should)

- Data leakage (sensitive data pulled into prompts/logs or exposed in outputs)

- Untraceable decisions (“why did it do that?” with no audit trail)

- Action errors at scale (small mistakes replicated across thousands of records)

OWASP’s Top 10 for LLM applications puts prompt injection at the top for a reason, it’s the most common way LLM apps get steered into unintended behavior.

To operationalize safety, NIST’s Generative AI Profile (AI RMF companion) emphasizes structured risk management and controls that enable trustworthy deployment, not just “good prompts,” but governance, monitoring, and evaluation practices that hold up in real operations.

Practical guardrails that work: least-privilege identity, approval gates for high-impact actions, tool allowlists, logging/tracing of every tool call, and staged rollout with rollback.

Build vs buy: the decision framework leaders actually use

Most teams won’t “build an agent” in isolation, they’ll decide whether to buy a platform capability (Copilot/Agentforce-style) or build a bespoke agent workflow that fits their systems, data, and risk posture.

Buy is usually right when:

- the workflow is common (support triage, meeting prep, simple case summarisation)

- you can accept vendor constraints on tooling and observability

- time-to-value matters more than deep customisation

Build is usually right when:

- the agent must do deep integration and governed write-back across CRM/ERP/ITSM

- you’re in a regulated or high-risk environment

- you need fine-grained controls such as approval policies, evaluation harnesses, audit logs, and custom tool boundaries

When deep integration and audit-ready controls are required, teams often rely on production-grade AI development to design the agent workflow, tool boundaries, and monitoring needed for safe deployment.

- your workflow is a competitive differentiator (proprietary steps and data logic)

A simple “non-negotiables” checklist before choosing:

- Identity + least-privilege access (who/what is the agent allowed to be?)

- Tool boundaries (exactly what can it read/write, and where?)

- Evaluation + monitoring (how do you detect drift, failures, or hallucinated actions?)

- Human-in-the-loop design (where are approvals mandatory?)

- Post-go-live ownership (who maintains tools, policies, and prompts?)

A 90-day “Agent to Production” plan (the actionable close)

If you want a real agent in production, not a clever demo, treat it like a product launch with safety gates.

Weeks 1–2: Pick one workflow and define “done.”

Choose a single job with clear boundaries (e.g., ticket triage + draft response + suggested next step).

Set 3–5 success metrics: cycle time, deflection rate, SLA impact, error rate, and human override rate.

Weeks 3–5: Connect tools with least privilege.

Create narrow tools (one action per tool), define what the agent can read/write, and enforce permission boundaries. Establish logging from day one (inputs, tool calls, outputs, approvals).

Weeks 6–8: Build evaluation + failure handling.

Create an evaluation harness using real scenarios: edge cases, adversarial prompts, missing data, conflicting instructions. Add “safe failure” behavior: ask clarifying questions, escalate, or stop.

Weeks 9–12: Staged rollout + operational ownership.

Start with a small user group, monitor quality and action safety, and implement rollback. Assign a clear owner for tool updates, policy changes, and ongoing evaluation, because agents degrade when nobody owns them.

This is the practical response to the 2026 shift: Gartner expects agents to move into mainstream enterprise apps rapidly, so the advantage goes to teams that can ship controlled, auditable agents, not just impressive outputs.