For more than a decade, cloud innovation was synonymous with boundless scale. Companies expanded clusters, doubled compute, and built ever-larger distributed systems under the belief that growth alone would carry them forward. Today, that mindset is being rewritten. As global enterprises confront unprecedented data volumes, escalating cloud costs, and the rise of generative AI workloads, the industry is shifting toward a new standard: intelligent, cost-aware, reliability-first engineering.

This evolution is visible across every major cloud provider, from hyperscalers to enterprise collaboration platforms. What defines leadership in cloud computing today is not how much capacity an organization has, but how intelligently that capacity is architected. It is a moment shaped by a new generation of engineers who are redefining how distributed systems should function under real-world pressure.

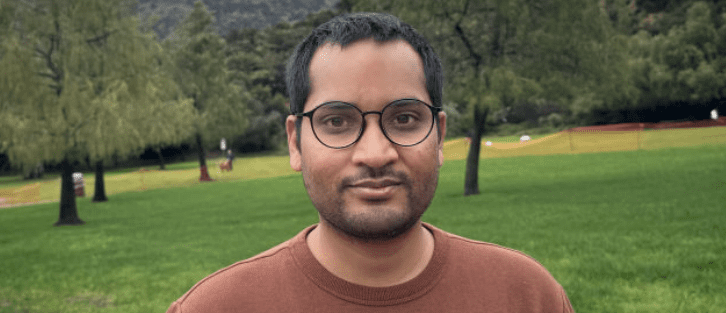

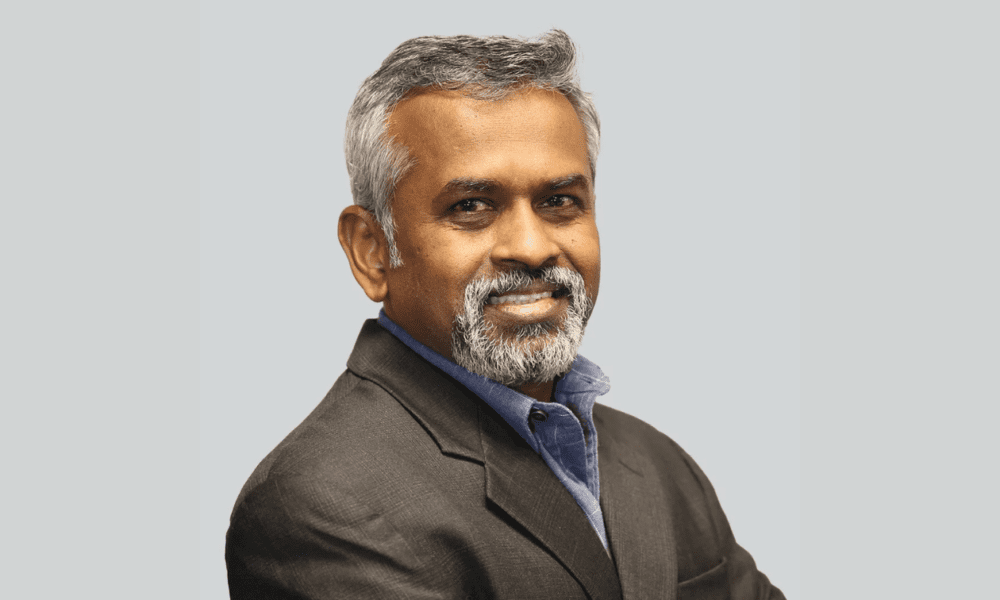

One of those engineers is Rishabh Gemawat, an IEEE Senior Member and an experienced developer at Amazon Web Services whose career spans more than fifteen years across AWS, Microsoft, Epic Systems, and TCS. His work reflects a broader industry transformation, one in which reliability, cost control, and security modernization are not afterthoughts but the foundation of modern cloud infrastructure.

“The biggest misconception,” Gemawat says, “is that cloud systems fail because of missing compute. In reality, they fail because of missing architecture. Scale without design is just wasted money and operational risk.”

His perspective mirrors a sentiment increasingly shared by cloud architects worldwide.

When Every Inefficiency Becomes Expensive

As organizations push toward AI-ready infrastructures, the cost of inefficiency has become impossible to ignore. It is no longer sustainable for global platforms to process redundant events, duplicate ingestion workflows, or leave identity synchronization running on legacy polling mechanisms that take hours—or even days—to complete.

Across the industry, teams are rethinking workflows with one guiding principle: every millisecond, shard, or storage write must justify its existence.

Engineers like Gemawat have spent years confronting this challenge firsthand. At AWS CloudTrail, he conducted deep architectural reviews of ingestion pipelines that silently consumed millions in compute without improving product accuracy. His redesign eliminated unnecessary event processing paths and prevented billions of redundant database writes each month.

“What we’re learning as an industry,” Gemawat explains, “is that efficiency isn’t an optimization strategy. It is the new architecture. When you remove waste at scale, you don’t just save money—you improve reliability.”

This philosophy is quickly becoming standard across hyperscale platforms.
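To make the idea of removing redundant writes concrete, here is a minimal Python sketch of deduplication ahead of a storage layer. It is an illustration only and does not describe CloudTrail's actual pipeline; the `Event` type, the `event_id` field, and the in-memory `store` dictionary are all hypothetical stand-ins.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Iterator, Set


@dataclass(frozen=True)
class Event:
    event_id: str  # hypothetical unique identifier carried by each event
    payload: str


def drop_redundant(events: Iterable[Event]) -> Iterator[Event]:
    """Yield each event only the first time its event_id is seen."""
    seen: Set[str] = set()
    for event in events:
        if event.event_id in seen:
            continue  # redundant event: skip the downstream write entirely
        seen.add(event.event_id)
        yield event


def ingest(events: Iterable[Event], store: Dict[str, str]) -> int:
    """Write deduplicated events to `store` and return the number of writes."""
    writes = 0
    for event in drop_redundant(events):
        store[event.event_id] = event.payload  # stand-in for a database write
        writes += 1
    return writes
```

In a real system the `seen` set would need bounded memory (for example, a time-windowed cache), but the principle is the same: an event that changes nothing should never reach the database.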

When Identity Becomes the New Reliability Layer

Another defining trend is the race toward real-time identity propagation. As enterprises adopt zero-trust security models and AI-driven automation, any delay in user or access synchronization becomes a direct operational threat. A system that takes hours to update user state is no longer merely inefficient—it breaks workflows, harms compliance, and exposes platforms to unnecessary risk.

This was a major industry challenge during the explosive scaling period of digital collaboration platforms. Legacy pipelines updated user and group information through slow, batch-based models. The industry has since moved decisively toward event-driven identity systems capable of achieving sub-minute propagation.

During his years as a backend engineer on Microsoft Teams, which now serves more than 300 million users, Gemawat, who is also an IEEE Computer Society member, helped redesign core identity synchronization pipelines, moving them from 24-hour latency toward real-time updates. Event-driven architecture, distributed caching, and optimized data-access strategies replaced outdated pull-based sync logic.

“In large distributed ecosystems, identity is the glue,” he says. “If identity breaks, nothing else works—security, messaging, permissions, analytics. You have to treat identity like a first-class reliability system.”

Enterprises across the globe have now adopted similar architectures, recognizing identity propagation as a critical reliability metric.
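The contrast between pull-based sync and event-driven propagation can be sketched in a few lines of Python. This is a simplified, in-process illustration under stated assumptions, not the design used by any particular platform; `IdentityEventBus`, `IdentityCache`, and the event names `user.updated` and `user.removed` are hypothetical.

```python
from collections import defaultdict
from typing import Callable, Dict, List, Optional

Handler = Callable[[str, dict], None]


class IdentityEventBus:
    """Pushes identity changes to subscribers as soon as they happen."""

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Handler) -> None:
        self._handlers[event_type].append(handler)

    def publish(self, event_type: str, user_id: str, attrs: dict) -> None:
        for handler in self._handlers[event_type]:
            handler(user_id, attrs)


class IdentityCache:
    """Holds the latest known user state; updated by events, not by polling."""

    def __init__(self) -> None:
        self._users: Dict[str, dict] = {}

    def on_user_updated(self, user_id: str, attrs: dict) -> None:
        # Merge changed attributes into whatever is already cached.
        self._users[user_id] = {**self._users.get(user_id, {}), **attrs}

    def on_user_removed(self, user_id: str, attrs: dict) -> None:
        self._users.pop(user_id, None)

    def get(self, user_id: str) -> Optional[dict]:
        return self._users.get(user_id)
```

A consumer wires the cache to the bus once with `bus.subscribe("user.updated", cache.on_user_updated)`. After that, every published change is visible to readers immediately instead of waiting for the next batch cycle.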

Security Modernization Becomes a Requirement, Not an Option

The shift toward secure, short-lived, and scoped service-to-service credentials is also transforming the engineering landscape. The old model of long-lived secrets, implicit service trust, and static keys is no longer viable for zero-trust environments. Companies are replacing them with OAuth2 frameworks, automated secret rotation, granular service boundaries, and standardized authentication libraries.

Between 2019 and 2021, Gemawat contributed to one of the industry’s largest service-to-service security upgrades within Microsoft Teams. The initiative replaced static secrets with dynamic identity tokens across hundreds of APIs and microservices. The shift reduced credential-related incidents, streamlined compliance, and generated meaningful cost savings by eliminating redundant verification patterns. The effort mirrors a global movement toward secure-by-default designs in distributed architectures.

“There is no such thing as a harmless credential,” Gemawat notes. “If a system trusts too broadly, that is a systemic flaw. Modern cloud environments demand narrow trust and constant verification.”

This perspective now shapes how enterprises build new AI and cloud-native systems.
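The difference between a static secret and a short-lived, scoped credential can be shown with a small Python sketch built on the standard library. It is deliberately simplified and is not an OAuth2 implementation or the mechanism used at any specific company; the token format, the `issue_token` and `verify_token` helpers, and the scope strings are assumptions made for illustration.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional


def issue_token(key: bytes, service: str, scope: str,
                ttl_seconds: int, now: Optional[float] = None) -> str:
    """Issue a signed token naming one service, one scope, and an expiry."""
    issued = time.time() if now is None else now
    claims = {"sub": service, "scope": scope, "exp": issued + ttl_seconds}
    body = base64.urlsafe_b64encode(json.dumps(claims, sort_keys=True).encode())
    sig = hmac.new(key, body, hashlib.sha256).hexdigest()
    return f"{body.decode()}.{sig}"


def verify_token(key: bytes, token: str, required_scope: str,
                 now: Optional[float] = None) -> bool:
    """Accept only an untampered, unexpired token with the exact scope."""
    current = time.time() if now is None else now
    try:
        body, sig = token.rsplit(".", 1)
    except ValueError:
        return False  # malformed token: no signature separator
    expected = hmac.new(key, body.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(sig.encode(), expected.encode()):
        return False  # signature mismatch: wrong key or altered claims
    claims = json.loads(base64.urlsafe_b64decode(body))
    return claims["scope"] == required_scope and current < claims["exp"]
```

Even in this toy form, the token is useless outside its scope and stops working after `ttl_seconds`, which is the "narrow trust and constant verification" property a long-lived shared secret cannot provide.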

Cloud Engineering Meets Formal Theory

An emerging trend is the convergence of academic research with production engineering. As cloud scale pushes systems to their theoretical limits, distributed platforms increasingly rely on ideas from fields such as graph theory, synchronization models, and even chemical reaction networks.

Gemawat explored timing behaviors in reaction networks through research published at Caltech. Though far from everyday software engineering, the work helped him develop intuition about synchronization, cascading delays, and distributed timing, concepts that quietly underpin high-scale cloud architectures.

“Large systems behave a lot like scientific ecosystems,” he explains. “To design them properly, you need to understand propagation, timing, and stability. Those principles are universal across disciplines.”

This blending of theory and practice is becoming common as cloud infrastructure supports increasingly complex AI workloads.

The future of enterprise infrastructure is clear: systems must be intelligent by design, cost-efficient by default, secure by architecture, and observable at every layer. Batch-driven workflows will continue to disappear, replaced by streaming-first, event-driven, real-time platforms.

Engineers like Rishabh Gemawat embody this movement, not because they exist at its center, but because their work reflects the architecture-first mindset reshaping the industry. In parallel with his industry work, he also serves as an editorial board member and peer reviewer at SARC Journal of Economic Intelligence Technology and Journal of Multidisciplinary, engaging directly with emerging research in distributed systems and applied computing.

“In the past, we celebrated the size of our systems,” he says. “Today, we celebrate how precisely they work. The future of cloud computing isn’t bigger, it’s smarter.”

As AI becomes a core part of every enterprise workflow, the hidden work of reliability engineers and distributed systems architects will be what keeps the entire digital economy running. It is an evolution driven not by hype, but by the quiet discipline of engineers who understand that the strongest infrastructure is the one users never notice.