AI no longer feels experimental; it feels routine. Employees paste emails into AI assistants for tone refinement, analysts upload reports for summarization, and HR teams process resumes for skill matching. While none of these actions seems extraordinary, that is precisely the issue: when AI becomes embedded in everyday workflows, exposure risks also become normalized. The danger does not come from dramatic system failures. It comes from small, repeated decisions made without a structured framework.

Sensitive information rarely leaks through one major event. It leaks through convenience. This is why organizations must rethink how daily AI usage intersects with document control.

The Illusion of Harmless Uploads

Most AI interactions begin with a simple step: sharing information. Because the file feels routine, the content internal, and the task minor, these daily interactions rarely trigger a privacy alarm. Teams tend to treat the documents involved as ordinary operational files rather than strategic assets.

However, everyday documents often contain hidden layers of sensitivity:

- Embedded personal data in resumes

- Confidential clauses in contracts

- Internal pricing notes in proposals

- Unreleased product metrics in reports

- Annotated financial projections

From a governance perspective, every document shared with AI expands the organization’s exposure footprint. The problem is not malicious behavior. It is unstructured usage.

Why Everyday Use Is More Dangerous Than Occasional Use

Organizations tend to focus on high-risk scenarios: proprietary code, trade secrets, and merger documents.

However, routine AI usage poses a broader threat because it happens continuously. Each upload may seem insignificant. In aggregate, they create patterns of exposure.

Without a structured privacy-first AI workflow, companies lose visibility into:

- What types of documents are processed

- Who is uploading them

- Whether sensitive sections are removed

- How compliance obligations are maintained

The risk grows quietly over time, rooted in a simple reality: AI does not distinguish between safe and unsafe inputs—users must.
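The four visibility gaps listed above can be narrowed with even a very small upload log. The following is a minimal sketch, not a description of any particular product: the `UploadRecord` fields and the `record_upload` and `unredacted_counts` helpers are hypothetical names chosen for illustration, and it assumes the organization can route uploads through a single function before they reach an AI tool.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class UploadRecord:
    """One entry per document sent to an AI tool (hypothetical schema)."""
    user: str            # who uploaded the document
    doc_type: str        # what type of document it was
    redacted: bool       # whether sensitive sections were removed first
    content_sha256: str  # fingerprint only; the content itself is not stored
    logged_at: str       # UTC timestamp in ISO 8601 format


def record_upload(log, user, doc_type, content, redacted):
    """Append a record describing the upload and return it."""
    record = UploadRecord(
        user=user,
        doc_type=doc_type,
        redacted=redacted,
        content_sha256=hashlib.sha256(content).hexdigest(),
        logged_at=datetime.now(timezone.utc).isoformat(),
    )
    log.append(record)
    return record


def unredacted_counts(log):
    """Count uploads per document type that skipped redaction."""
    counts = {}
    for record in log:
        if not record.redacted:
            counts[record.doc_type] = counts.get(record.doc_type, 0) + 1
    return counts
```

Storing a hash rather than the document keeps the log itself from becoming another copy of the sensitive content, while still showing who uploaded what and whether it was prepared first.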

The Missing Layer in Modern Workflows

Most AI adoption strategies focus on tool selection. Few address preparation.

Before AI processes a document, there should be an intentional review stage, known as pre-AI processing. It is not a technical function but a governance checkpoint. The tool used for this stage matters as well: unlike many free tools with ambiguous data policies, KDAN PDF ensures professional-grade security through GDPR compliance and ISO certifications, providing a trusted environment for your sensitive information.

Pre-AI processing involves:

- Identifying sensitive content

- Redacting personal data

- Removing confidential pages

- Isolating sections suitable for processing

- Preserving compliance requirements

Without this formalized layer, organizations are left with individual judgment—an unreliable strategy at scale. By formalizing it, AI usage moves from a potential liability to a structured, defensible workflow.

Everyday Roles, Everyday Risks

To understand how ordinary usage creates exposure, consider the following professional scenarios.

1. HR Teams Screening Applicants

An HR specialist uploads 200 resumes into an AI tool for skill categorization.

Resumes contain:

- Home addresses

- Phone numbers

- Identification details

- Employment history

While categorization improves speed, sensitive information travels with every file. A more mature approach introduces automated redaction before AI processing. Using tools such as KDAN PDF, teams can remove repetitive personal identifiers in bulk, allowing the AI to analyze qualifications without exposing identities.

This protects applicants and reduces regulatory risk.
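To make the idea of bulk identifier removal concrete, here is a minimal sketch that works on resume text that has already been extracted. It does not use or represent KDAN PDF's redaction feature. The patterns and the `redact_identifiers` name are assumptions made for illustration, they cover only email addresses and phone-like numbers, and real resumes would need broader rules and human review.

```python
import re

# Deliberately simple patterns (assumptions, not an exhaustive PII detector).
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def _mask_phone(match):
    """Mask only candidates with at least 9 digits, so that date ranges
    such as '2015 - 2020' in an employment history are left alone."""
    digits = sum(ch.isdigit() for ch in match.group())
    return "[PHONE]" if digits >= 9 else match.group()


def redact_identifiers(text):
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    text = PHONE_RE.sub(_mask_phone, text)
    return text


resume = "Contact: jane.doe@example.com, +1 (555) 010-9999. Skills: Python, SQL"
print(redact_identifiers(resume))
# Contact: [EMAIL], [PHONE]. Skills: Python, SQL
```

The qualifications survive for the AI to categorize, while the contact details are replaced before the text leaves the organization.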

2. Sales Teams Refining Proposals

A sales manager uploads a client proposal to improve tone and clarity. The document includes internal pricing structures and margin calculations in the appendix. If uploaded in full, the AI receives data beyond what is necessary.

Pre-AI processing allows the sales team to remove internal calculation pages before refinement. Page-level control ensures that automation works only with what is strategically appropriate.

The goal is not limitation. It is selective exposure.
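Selective exposure at the page level can be sketched in a few lines. The example below assumes the proposal has already been split into per-page text. The `pages_for_ai` function and the marker phrases are hypothetical, and in practice the team would decide which pages are internal rather than rely on keywords alone.

```python
def pages_for_ai(pages, internal_markers=("internal only", "margin calculation")):
    """Return (page_number, text) pairs for pages with no internal marker.

    `pages` is a list of page texts in document order; page numbers are 1-based.
    """
    kept = []
    for number, text in enumerate(pages, start=1):
        lowered = text.lower()
        if any(marker in lowered for marker in internal_markers):
            continue  # internal page: keep it out of the AI input
        kept.append((number, text))
    return kept


proposal = [
    "Proposal overview for the client",
    "Scope and timeline",
    "Appendix: INTERNAL ONLY margin calculation",
]
print([number for number, _ in pages_for_ai(proposal)])
# [1, 2]
```

Keeping the original page numbers alongside the text makes it easy to record which pages were withheld and to merge the refined pages back into the full document afterward.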

3. Researchers Preparing Industry Reports

A researcher shares a draft white paper with AI for structural improvement. The document contains confidential interview transcripts in later sections. Without review, those transcripts become part of the input.

Through page editing within KDAN PDF, the researcher can isolate sensitive interviews before processing. The AI assists with formatting and clarity while protected data remains internal.

4. Finance Departments Conducting Analysis

Finance professionals frequently rely on AI to restructure reports. Yet internal notes, forward-looking projections, and strategic adjustments are often embedded within those documents. Removing internal-only commentary before processing protects competitive positioning.

Across these diverse scenarios, the principle remains the same: pre-AI processing transforms AI from a blind processor into a controlled assistant, ensuring that automation serves the professional’s goals without overstepping their boundaries.

Structured Safeguards vs. Reactive Fixes

The difference between mature and immature AI adoption can be summarized in one distinction: structure versus reaction.

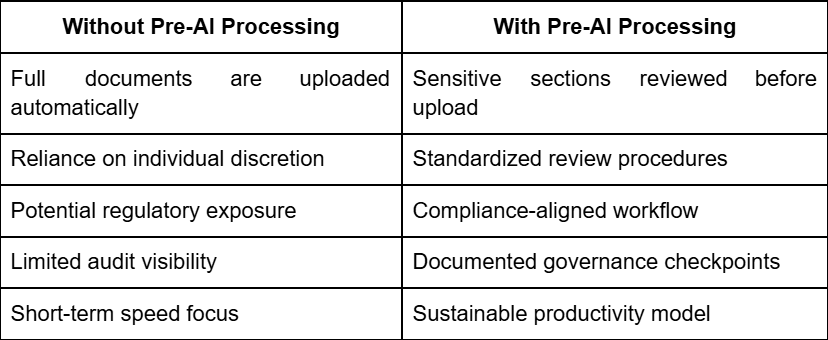

Below is a strategic comparison:

This table does not represent technical complexity but reflects organizational discipline.

Why Compliance Is Now Operational, Not Legal

Compliance is no longer just a department you visit; it is a discipline embedded in how workflows are designed. When AI tools are used daily, regulatory alignment cannot be an afterthought. Data privacy laws increasingly hold organizations accountable not only for breaches, but for negligence in handling sensitive information.

Embedding tools like KDAN PDF into document workflows enables operational compliance. Automated redaction, page isolation, and structured file control help ensure that data governance is proactive rather than reactive. The result is not just protection from penalties. It is institutional credibility.

The Psychological Shift Required

The true evolution of AI productivity lies in a simple mental shift—moving from asking ‘can AI assist me?’ to ‘how much should I reveal?’

This distinction redefines the relationship between user and tool—transforming AI from an unmonitored processor into a collaborator operating within defined boundaries.

Building a Privacy-First AI Workflow for Daily Use

A privacy-first AI workflow is not reserved for high-security industries. It is relevant for any team interacting with documents. It includes:

- Clear guidelines on document preparation

- Defined redaction standards

- Page-level control before sharing

- Repeatable review processes

- Centralized document management tools

KDAN PDF supports this approach by serving as the structured layer between document creation and AI interaction. It ensures that everyday tasks—editing, summarizing, formatting—occur within boundaries defined by the organization.

The Long-Term Value of Discipline

Beyond mere security, controlled workflows provide a framework for long-term resilience—protecting brand reputation, preserving client trust, and ensuring regulatory compliance. When every document is prepared with intentionality, governance becomes a byproduct of daily work rather than a reactive hurdle. This disciplined approach to AI does not just reduce exposure; it creates a lasting competitive advantage.

A Forward-Looking Perspective

As AI becomes inextricably linked to our daily work—drafting our emails, reviewing our contracts, and assisting our most critical decisions—the question is no longer about adoption, but about structure.

Everyday use does not have to mean everyday exposure. By implementing a structured document control layer like KDAN PDF, organizations can transform their pre-upload pause into a strategic checkpoint. Whether it’s redacting sensitive details or removing confidential pages, this intentional preparation ensures that while AI productivity remains powerful, it also remains profoundly responsible.

Designing this gatekeeper habit may be the most valuable investment your organization makes in the AI era.