Scroll through your timeline right now, and you’ll see someone hyping up a new model with “unmatched parameters” or “insane results.”

But let’s be honest. If you have business KPIs to hit or code to ship, you don’t care about a model’s theoretical ceiling. In actual production, there’s only one metric that matters: the waste rate, meaning the share of generations you end up throwing away.

How many broken iterations did you have to generate just to get one usable image for a tweet or a viable clip for a promo video? Rolling the dice ten times to get one decent outcome means you’re playing with a toy. A real tool gives you control.
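To put a number on that, here is a minimal sketch of how the waste rate could be computed. The function names and the "discarded divided by total" definition are my own assumptions for illustration, not a metric any platform reports.

```python
def waste_rate(total_generations: int, usable_outputs: int) -> float:
    """Share of generations that were thrown away (0.0 = none, 1.0 = all).

    Hypothetical helper: the definition (discarded / total) is an assumption.
    """
    if total_generations <= 0:
        raise ValueError("total_generations must be positive")
    if not 0 <= usable_outputs <= total_generations:
        raise ValueError("usable_outputs must be between 0 and total_generations")
    return (total_generations - usable_outputs) / total_generations


def attempts_per_usable(total_generations: int, usable_outputs: int) -> float:
    """Average number of generations spent per usable output."""
    if usable_outputs <= 0:
        raise ValueError("need at least one usable output")
    return total_generations / usable_outputs


# The "ten rolls for one decent outcome" case from the paragraph above.
dice_roll = waste_rate(total_generations=10, usable_outputs=1)
print(f"waste rate: {dice_roll:.0%}")
print(f"attempts per usable output: {attempts_per_usable(10, 1):.1f}")
```

Tracking this per project makes it easy to see whether a change of model or prompt style actually reduces the number of discarded generations.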

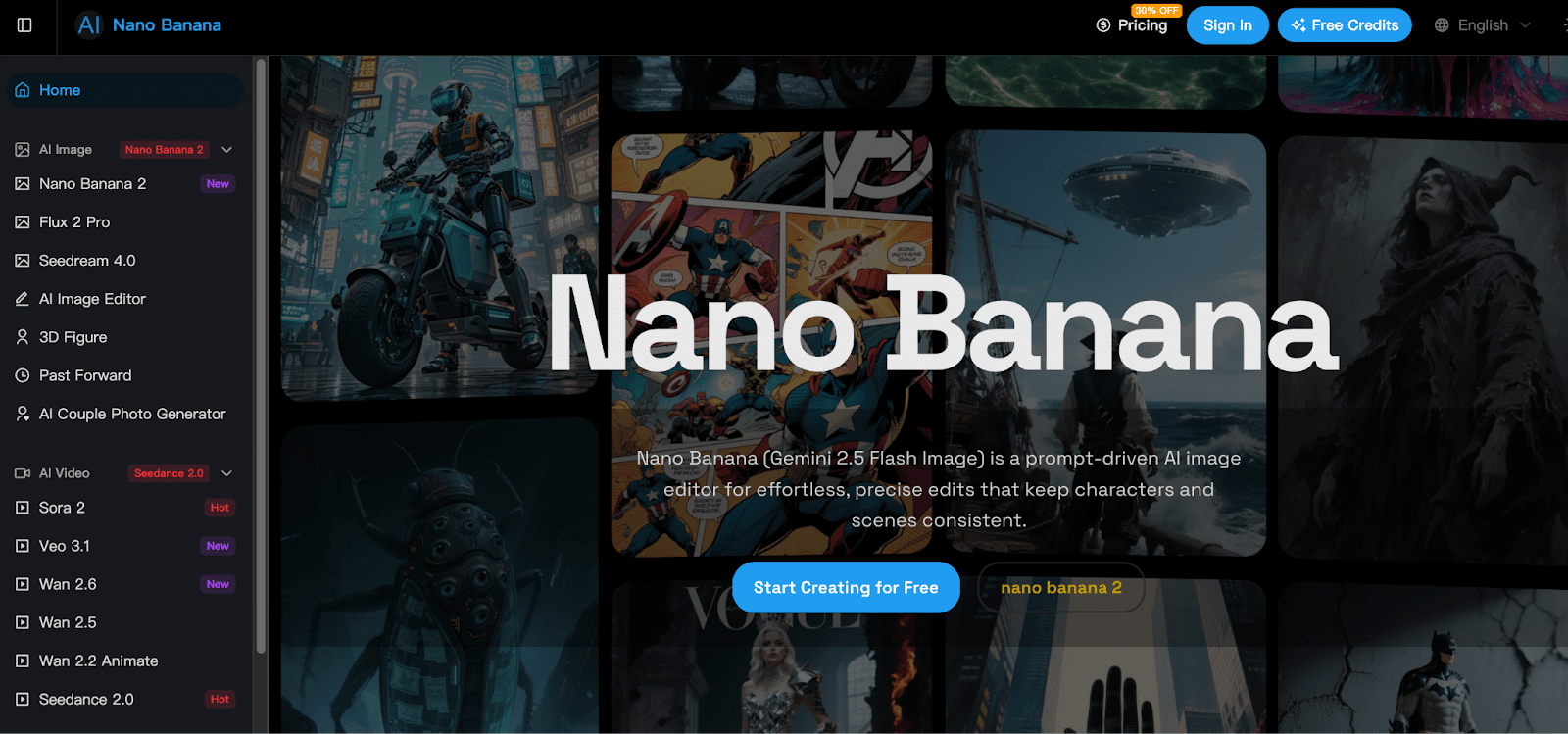

That’s exactly why, after burning time on countless single-model websites, I finally consolidated my workflow into one AI image and video generation platform. Let’s skip the vanity metrics and talk about how a real pipeline actually works here.

The Breakpoint: Moving Past the Blind Box

You know the headache: you dial in a perfect image in Model A, try to tweak it in Model B, and it’s a complete disaster. Every model speaks a slightly different language.

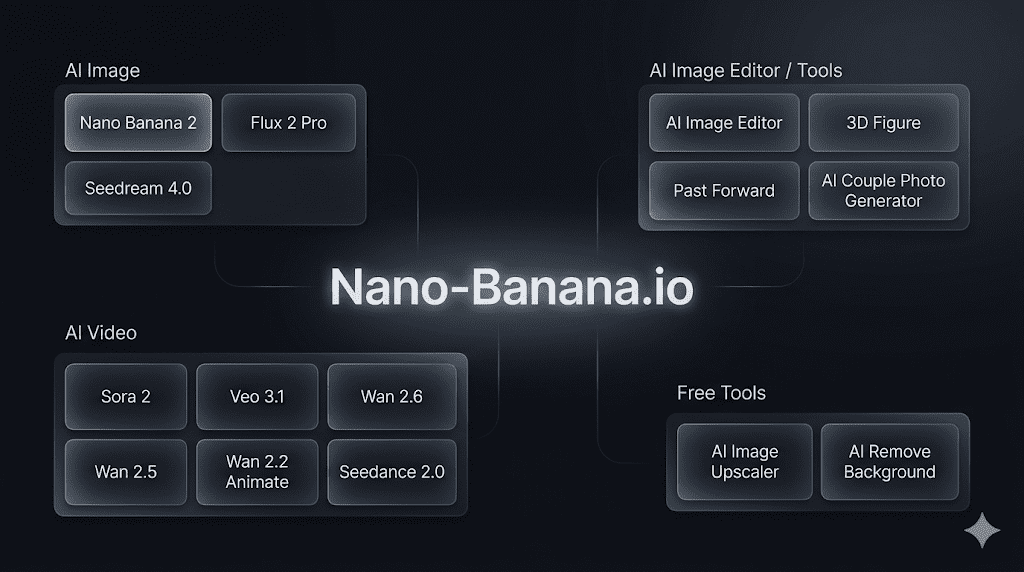

The smartest move this platform made was not forcing you into a single generator. Instead, it serves up an entire ecosystem. Besides the engines I’ll dive into below, the backend hooks into a broad library of other video and image generation models. It’s a one-stop setup that saves you the mental tax of hopping between seven different tabs.

This ecosystem rests on three core pieces.

Control Dictates Productivity

The Engine: Nano Banana 2

The first thing you notice about Nano Banana 2 is that it understands plain English.

Throw a complex business prompt at an older AI, and it usually fixates on the loudest keyword while ignoring your layout entirely. But in real product scenarios, foreground proportions and lighting angles matter a lot more than “epic cinematic lighting.” Nano Banana 2 excels at parsing the structure of what you wrote, not just the keywords. You don’t need to stack mystic spells to get what you want, and the straight-out-of-the-box usability is frighteningly high.

The Native Protocol: banana prompts

If you live inside generation tools, prompt-testing is the biggest time thief. The real heavyweight weapon here is banana prompts.

This native prompting system isn’t about memorizing vocabulary lists—it helps you structure your intent. This means even if you call up different image models within this platform, sticking to this native logic keeps the output from going off the rails. You no longer have to learn a completely new dialect just because you switched rendering engines.
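As a rough illustration of what “structuring your intent” could look like, here is a minimal sketch. The `PromptSpec` class and its fields are entirely hypothetical. This is not the banana prompts format, only one way to keep subject, composition, and lighting as separate, explicit decisions before they become a prompt string.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptSpec:
    """Hypothetical container for prompt intent (not a platform API)."""
    subject: str
    composition: str
    lighting: str
    style: str = ""

    def render(self) -> str:
        # Join the non-empty fields into plain sentences, in a fixed order,
        # so the same intent always produces the same prompt text.
        parts = [self.subject, self.composition, self.lighting, self.style]
        cleaned = [p.strip().rstrip(".") for p in parts if p.strip()]
        return ". ".join(cleaned) + "."


spec = PromptSpec(
    subject="A matte black water bottle on a white desk",
    composition="The bottle fills the left third of the frame",
    lighting="Soft key light from the upper right",
)
prompt = spec.render()
print(prompt)
```

The point of the design is that layout and lighting each get their own slot, so they can’t be drowned out by a pile of style keywords.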

Static to Motion: Seedance 2.0 and Veo 3.1

Static images aren’t enough anymore. Right before launch, you usually need a couple of micro-videos for a demo.

The standard workflow is painful: download the image, open three other video generation sites, upload it, and pray the physics don’t distort. Within this ecosystem, that friction is gone. The system seamlessly integrates top-tier video engines like Seedance 2.0 and Veo 3.1.

You take your precisely prompted base image and send it straight into video generation. Veo 3.1 handles fluid dynamics and lighting consistency in motion like a veteran. The entire process stays inside one interface. Zero exporting, zero importing, and no quality lost to repeated downloads and re-uploads.

The Hunter’s Verdict: Who Is This For?

If you just want to generate a couple of anime wallpapers for your avatar, this system is overkill. There are plenty of lightweight toys out there for that.

But if you lead a visual team, or you’re a founder responsible for your own go-to-market materials, and you need a single place to handle both static images and high-quality short-form video while pushing your waste rate to the minimum, then this AI image and video generation platform is worth an hour of your time.

An uncontrollable tool isn’t a tool at all. In business, your biggest cost is always time.