Businesses are investing heavily in AI, but many encounter the same obstacles: models that work fine in isolation but fail to scale in the real world, pipelines that cannot be reliably reproduced, and infrastructure never built for governed, production-ready systems. This creates a growing divide between AI experimentation and actual impact, often causing even the most promising projects to stall. Industry research confirms that scaling AI remains among the biggest barriers to value realization: according to Gartner, many CIOs struggle to translate early AI promise into outcomes at scale, balancing technological readiness with organizational needs.

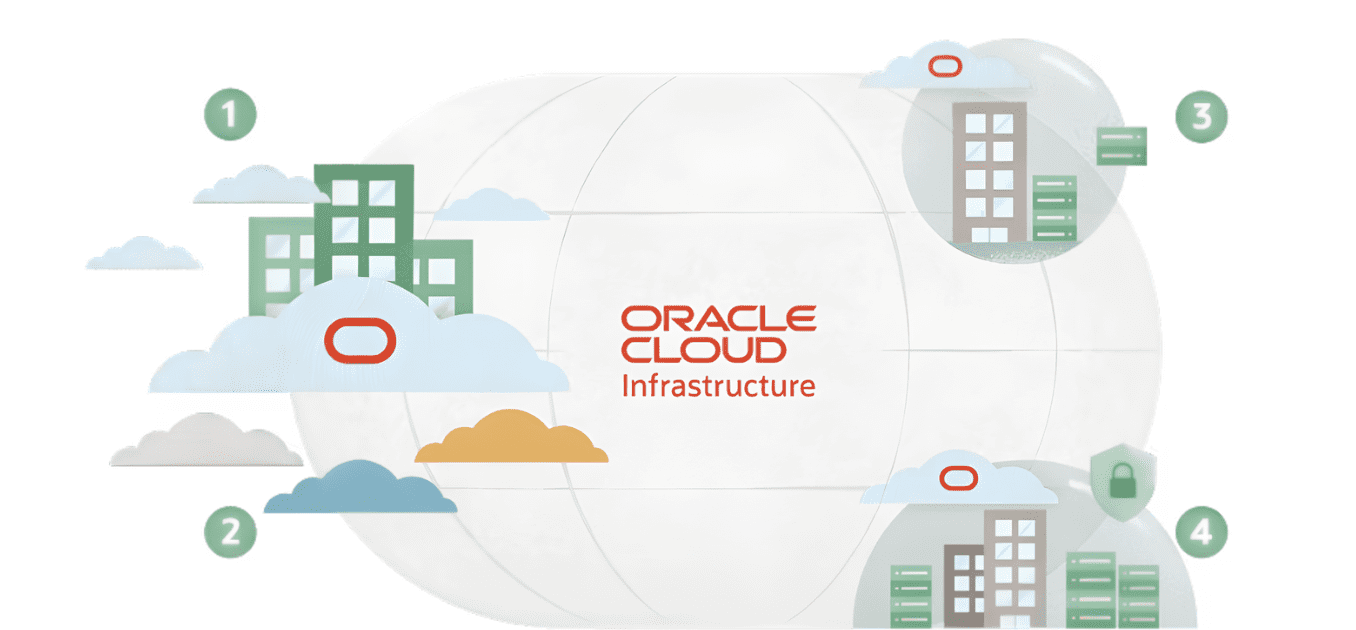

With its launch on the Oracle Cloud Marketplace, Valohai presents a much more practical way forward. Valohai is an enterprise MLOps platform designed to automate and version every phase of the machine-learning lifecycle, from data to experiments to deployment. This ensures that teams can easily reproduce their work and scale it without improvising their infrastructure.

When combined with the performance and auditable compliance of Oracle Cloud Infrastructure (OCI), the platform delivers a system that allows organizations to run AI with the reliability and version-controlled processes needed for enterprise scale. For instance, precision oncology firm Onc.AI has already used this combination to double pipeline speed and cut training costs by about half. The key takeaway: achieving true AI scale requires disciplined infrastructure.

The Infrastructure Gap That’s Slowing Enterprise AI

While many organizations acknowledge the need for better AI infrastructure, few truly grasp how deeply the issues are rooted in their current systems. The challenge is seldom a single bottleneck. Instead, it stems from the cumulative burden of disconnected tools, inconsistent processes, and environments not designed for repeatable, governed machine learning.

This explains why AI prototypes succeed but production systems often struggle. Studies show that the lack of reproducibility and governance in machine learning workflows is a fundamental barrier to trust and reliability in real-world deployments. These problems go beyond accuracy metrics and into engineering, version control, and traceability of workflows. A platform approach that enforces reproducibility, from data versioning to experiment logging, is not just desirable; it’s essential.

A model that performs well by itself frequently breaks down when teams try to replicate the results across different departments, regions, or tightly regulated data environments. Engineering focus shifts from innovation to mere maintenance. GPU spending increases without proportional results. Compliance demands multiply faster than the systems intended to manage them.

The result is predictable: ambitious AI initiatives falter, not because the underlying ideas are flawed, but because the operational foundation supporting them is too fragile for real-world scale.

Why the Valohai-Oracle Cloud Combination Matters Now

The Valohai-Oracle Cloud partnership directly addresses the operational friction that has held back enterprise AI. Oracle Cloud Infrastructure offers the necessary performance, regulatory readiness, and distributed architecture for sensitive, high-scale workloads. Valohai, in turn, provides the automation and reproducibility that unify these capabilities into a cohesive MLOps system. Together, they solve three core enterprise problems: inefficient GPU usage, brittle pipelines, and data confined within heavily regulated environments. They do so by offering a durable, full-lifecycle infrastructure for developing, validating, and deploying AI.

Onc.AI: A Case Study in Scaling Precision Oncology

This combined solution is highly effective in fields where accuracy, security, and compliance are paramount. Take Onc.AI, a U.S. precision oncology firm. It develops imaging biomarkers that help clinicians and researchers understand how cancer patients react to treatment. This work involves processing thousands of longitudinal CT scans under strict regulatory conditions, a workload that rapidly exceeded the capacity of the company’s former infrastructure as it moved toward clinical deployment.

After the company migrated to Valohai on Oracle Cloud Infrastructure, the change was immediate. Biomarker pipelines ran twice as fast on OCI’s A10 GPUs, training costs dropped by about half, and processes that previously needed manual supervision became fully reproducible and audit-ready. What started as a delicate, research-focused setup transformed into a governed, production-ready system capable of supporting clinical trials and regulatory submissions.

Most importantly, the team could now focus its energy on advancing its science rather than maintaining infrastructure. Faster development accelerated validation cycles, lower costs allowed for more experimentation, and stronger governance provided confidence in moving toward real-world deployment. These are meaningful advantages in a sector where both time and accuracy directly impact patient health.

The Future of Enterprise AI

The Valohai-Oracle Cloud partnership signals a definite shift in enterprise AI: success increasingly depends less on isolated experimentation and more on infrastructure that operationalizes and governs AI across the business. According to Bain & Company, as AI workloads expand, enterprises must embed AI into foundational systems that support multiple use cases and maintain alignment with broader enterprise requirements.

Valohai’s availability on the Oracle Cloud Marketplace, supported by the results from Onc.AI, demonstrates that version-controlled, reproducible systems can deliver faster development, lower costs, and the auditable compliance necessary for modern deployments.

As organizations transition from exploring AI to demanding real accountability, the winners will be those who view AI as operational infrastructure, not just a series of isolated experiments. The road ahead is built upon platforms designed for scale, compliance, and enduring reliability.