The Problem: AI Video Tools Still Break When You Need Them Most

AI video tools have reached a point where demos look impressive, but real usage still feels unreliable.

You can generate a visually appealing clip in seconds, but the moment you try to build something structured, such as a product video, a multi-shot sequence, or even a consistent character, the limitations become obvious. Faces subtly change. Motion doesn’t carry across scenes. Edits require starting over instead of building forward.

This gap between “what it shows” and “what it actually delivers” is why many creators still treat AI video as an experiment, not a system.

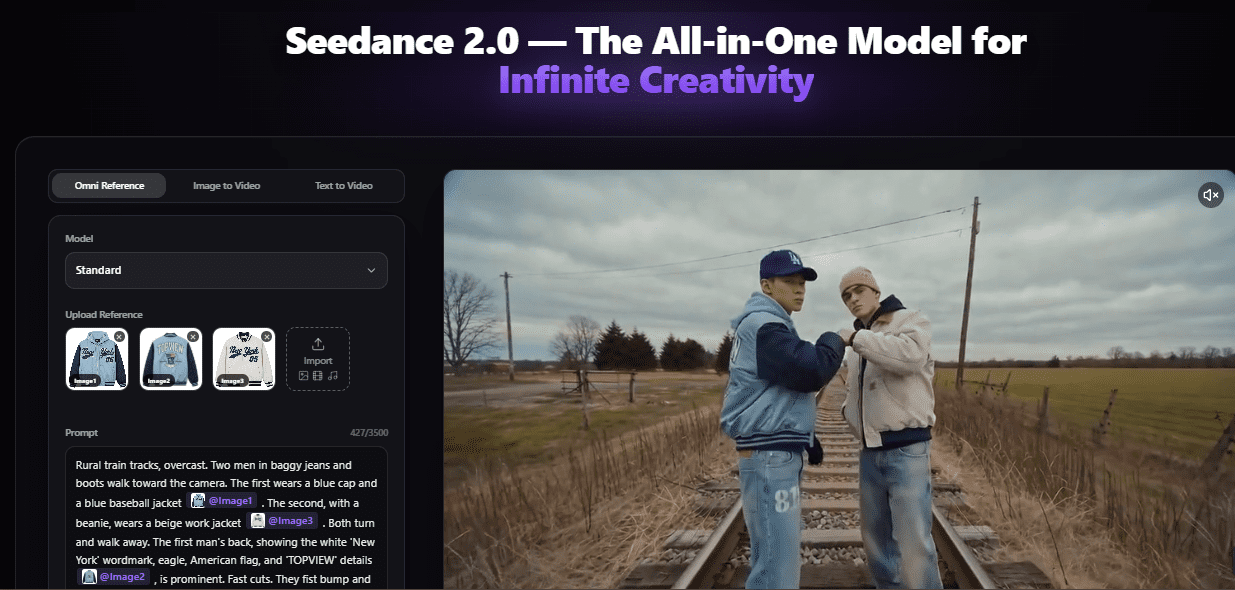

So when Seedance 2.0 positions itself as a multi-modal video model capable of combining images, video, audio, and text, the claim sounds familiar. Almost every new model promises the same direction.

The difference only becomes clear when you stop looking at outputs and start looking at control.

The Investigation: What Seedance 2.0 Is Actually Trying to Solve

The biggest shift in Seedance 2.0 is not just better visuals. It’s how inputs are handled.

Instead of relying on a single prompt, the model allows multiple forms of input to act as references. An image can define identity. A video can guide motion. Audio can influence timing. Text becomes instruction rather than the entire foundation.

This changes the generation process from “describe and hope” to something closer to “guide and refine.”
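To make the "guide and refine" idea concrete, here is a minimal sketch of how such a request could be modeled. It is not the Seedance 2.0 API: the `Role`, `Reference`, and `GenerationRequest` names, the file paths, and the `unguided` helper are all hypothetical and exist only to illustrate the roles described above.

```python
from dataclasses import dataclass, field
from enum import Enum


class Role(Enum):
    """What a reference contributes (hypothetical names, not a real API)."""
    IDENTITY = "identity"  # an image that fixes who or what appears
    MOTION = "motion"      # a video that guides movement or camera behavior
    TIMING = "timing"      # audio that influences pacing


@dataclass(frozen=True)
class Reference:
    role: Role
    path: str  # hypothetical local file path


@dataclass
class GenerationRequest:
    instruction: str  # text acts as instruction, not the entire foundation
    references: list[Reference] = field(default_factory=list)

    def roles(self) -> set[Role]:
        """Return the roles covered by the attached references."""
        return {ref.role for ref in self.references}

    def unguided(self) -> set[Role]:
        """Return roles with no reference, i.e. what is still left to the model."""
        return set(Role) - self.roles()


request = GenerationRequest(
    instruction="Slow push-in on the presenter holding the product",
    references=[
        Reference(Role.IDENTITY, "presenter.png"),
        Reference(Role.MOTION, "camera_move.mp4"),
    ],
)
print(sorted(role.value for role in request.unguided()))  # ['timing']
```

In this sketch, anything without a reference is still "describe and hope"; each reference you attach moves one aspect of the result into "guide and refine".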

From what’s visible across implementations and examples, consistency has improved. Characters hold their appearance more reliably across frames. Styles don’t drift as aggressively. Scene transitions feel more intentional instead of stitched together.

Motion replication is another area where the model stands out. Instead of guessing movement, it can follow reference choreography or camera behavior. That makes it more usable for actual content creation (ads, storytelling, social clips), where motion matters as much as visuals.

Then there’s iteration. Most tools generate isolated outputs. Seedance 2.0 leans toward extending and modifying existing clips, which is a small but important shift. It reduces the need to restart from scratch every time something isn’t right.
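The difference between regenerating and building forward can be sketched as a small data model. This is an illustration under assumptions, not how Seedance 2.0 stores or edits clips: the `Shot` and `Clip` types and their `extend` and `revise` methods are hypothetical names.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Shot:
    prompt: str
    seconds: float
    version: int = 1  # bumped each time this shot is regenerated


@dataclass(frozen=True)
class Clip:
    shots: tuple[Shot, ...] = ()

    def extend(self, shot: Shot) -> "Clip":
        """Append a new shot; existing shots are kept as they are."""
        return Clip(self.shots + (shot,))

    def revise(self, index: int, prompt: str) -> "Clip":
        """Regenerate one shot (non-negative index), leaving the rest untouched."""
        old = self.shots[index]
        new = replace(old, prompt=prompt, version=old.version + 1)
        return Clip(self.shots[:index] + (new,) + self.shots[index + 1:])

    def duration(self) -> float:
        """Total length of the clip in seconds."""
        return sum(shot.seconds for shot in self.shots)


clip = Clip().extend(Shot("Product on table", 4.0)).extend(Shot("Hand picks it up", 3.0))
clip = clip.revise(1, "Hand picks it up, closer framing")
print([shot.version for shot in clip.shots], clip.duration())  # [1, 2] 7.0
```

Only the revised shot gets a new version; the first shot is carried over unchanged, which is the practical meaning of not restarting from scratch.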

These improvements don’t make it perfect. But they move it closer to something usable.

Where the Reality Still Falls Short

Even with these advancements, it’s not a frictionless system.

Control is better, but not precise. You can guide outputs, but you’re still negotiating with the model. Complex scenes still require multiple iterations. And high-quality generation comes with tradeoffs in time and compute.

But the bigger issue isn’t even technical.

It’s accessibility.

Most advanced AI models exist in environments where access is limited, fragmented, or inconsistent. You might be able to test them, but not build with them regularly.

That creates a disconnect.

People know what the model can do. But they don’t actually integrate it into their workflow.

The Overlooked Layer: Why Access Changes the Value of the Model

This is where the conversation around Seedance 2.0 becomes more practical.

Because a model’s capability only matters if it can be used consistently.

When access is limited, AI video remains a novelty. When access is continuous, it becomes a tool.

That shift is starting to happen as Seedance 2.0 becomes available through platforms designed for real usage rather than isolated demos. One example is how the model is being implemented within environments like Topview AI.

The difference here is not about features. It’s about usability.

Instead of interacting with the model occasionally, you can work with it as part of a system: testing outputs, refining scenes, and iterating without interruption. That consistency is what most AI video workflows have been missing.

It becomes even more relevant when you look at how access is structured. With Topview’s Business Annual plan, access to the model is not limited to short-term usage or restricted trials. The plan provides 365 days of uninterrupted, unlimited access to the Seedance 2.0 AI video model, which is a very different proposition from how most advanced AI tools are typically offered.

That matters more than it sounds.

Because once access is consistent, the way people use the tool changes completely. Instead of testing outputs occasionally, creators can iterate daily, refine ideas faster, and actually build repeatable workflows around them.

Instead of asking “what can this tool do,” the focus shifts to “how can this fit into what I already do.”

The Insight: Seedance 2.0 Is Less About Generation, More About Direction

The common way to evaluate AI video tools is by output quality.

But that misses the bigger shift happening here.

Seedance 2.0 is not just improving generation; it’s shifting the creator’s role from describing a result to directing it.

You’re no longer relying entirely on prompts. You’re combining references, guiding motion, and shaping outputs in a more controlled way. It’s still not perfect, but it’s closer to how actual video production works.

And when that level of control is paired with consistent access, the role of AI video starts to change.

It stops being something you try occasionally and becomes something you can depend on.

Final Perspective

Seedance 2.0 doesn’t eliminate the core challenges of AI video, but it reduces enough of them to make the technology more usable.

The improvements in consistency, motion control, and multi-modal input are meaningful, but they’re only part of the story.

The real shift happens when those capabilities are accessible in a way that supports continuous creation.

Because in the end, the value of AI tools is not defined by what they can do once.

It’s defined by how reliably you can use them over time.

FAQs

What is Seedance 2.0?

Seedance 2.0 is a multi-modal AI video generation model that combines text, images, video, and audio inputs to create more controlled and consistent video outputs.

Is Seedance 2.0 better than other AI video tools?

It offers stronger control and consistency than many tools, but it still requires iteration and isn’t fully precise in complex scenes.

Can Seedance 2.0 be used for professional content?

Yes, especially for ads, social media, and pre-visualization, although traditional workflows are still relevant for high-end production.

Where can you access Seedance 2.0?

It is available through platforms that integrate the model into usable workflows, such as https://www.topview.ai/seedance-2