The term “traffic bot” has become a topic of heated debate and ethical scrutiny. Traffic bots are software programs designed to simulate human interactions with websites, and they have both legitimate and malicious applications.

On the legitimate front, [traffic bots](https://codecondo.com/what-is-a-traffic-bot/) play a pivotal role in load testing and analytics. Load testing involves subjecting a website to simulated high levels of traffic to evaluate its performance under stress, ensuring it can handle peak usage without faltering. Analytics bots, on the other hand, help organizations glean insights into user behavior, enabling data-driven decisions to enhance user experiences.
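To make the load-testing idea concrete, here is a minimal sketch in Python. It is not a production tool. The names `run_load_test`, `send_request`, and `fake_endpoint` are made up for illustration, and the "site" is a stand-in function that just sleeps briefly. In a real test, `send_request` would issue an HTTP request against a site you own or have permission to test.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from statistics import mean


def run_load_test(send_request, total_requests=100, concurrency=10):
    """Call send_request() total_requests times using `concurrency` worker threads.

    Returns a small report with the failure count and latency figures.
    """
    def timed_call(_):
        start = time.perf_counter()
        try:
            send_request()
            ok = True
        except Exception:
            # Any exception raised by the request counts as a failed request.
            ok = False
        return ok, time.perf_counter() - start

    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        results = list(pool.map(timed_call, range(total_requests)))

    latencies = sorted(elapsed for _, elapsed in results)
    failures = sum(1 for ok, _ in results if not ok)
    # Rough 95th percentile: the value below which about 95% of samples fall.
    p95_index = max(0, int(len(latencies) * 0.95) - 1)
    return {
        "requests": total_requests,
        "failures": failures,
        "mean_latency_s": mean(latencies),
        "p95_latency_s": latencies[p95_index],
    }


def fake_endpoint():
    """Hypothetical stand-in for a real HTTP call; it only sleeps for 1 ms."""
    time.sleep(0.001)


if __name__ == "__main__":
    print(run_load_test(fake_endpoint, total_requests=50, concurrency=5))
```

Raising `concurrency` while watching the failure count and the tail latency is the basic loop of a stress test: the point at which either figure degrades sharply suggests where the site starts to struggle.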

However, the ethical gray area emerges when traffic bots are exploited for nefarious purposes. Ad fraud, a significant concern, involves bots clicking on advertisements to generate revenue for the operators, siphoning funds from advertising budgets and eroding the integrity of digital marketing channels. Additionally, these bots can be weaponized in Distributed Denial of Service (DDoS) attacks, disrupting online services by inundating websites with fake traffic.

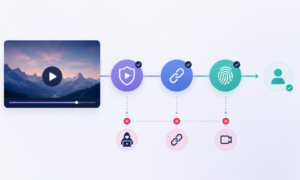

The ongoing battle between those developing sophisticated traffic bots for malicious intent and cybersecurity professionals working to detect and prevent them highlights the challenges faced by the digital ecosystem. Detection methods, ranging from heuristic algorithms to machine learning, aim to distinguish genuine user interactions from bot-generated traffic. Websites employ security measures like CAPTCHAs and IP filtering to thwart malicious activities.
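As a rough illustration of the heuristic end of that spectrum, the sketch below flags a request when its user agent contains an obvious automation marker or when one IP address exceeds a request budget inside a sliding time window. The class name, thresholds, and marker list are all assumptions chosen for the example. Real detection systems combine many more signals, and sophisticated bots evade checks this simple.

```python
from collections import defaultdict, deque

# Illustrative substrings only; a real deployment would maintain a curated list.
SUSPICIOUS_AGENT_MARKERS = ("bot", "crawler", "spider", "headless")


class HeuristicBotDetector:
    """Toy rule-based detector: user-agent check plus per-IP rate limit."""

    def __init__(self, max_requests=20, window_seconds=10.0):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._recent = defaultdict(deque)  # ip -> timestamps of recent requests

    def is_suspicious(self, ip, user_agent, timestamp):
        agent = user_agent.lower()
        # Rule 1: missing or self-identified automated user agent.
        if not agent or any(marker in agent for marker in SUSPICIOUS_AGENT_MARKERS):
            return True

        # Rule 2: too many requests from one IP within the sliding window.
        recent = self._recent[ip]
        recent.append(timestamp)
        while recent and timestamp - recent[0] > self.window_seconds:
            recent.popleft()
        return len(recent) > self.max_requests


if __name__ == "__main__":
    detector = HeuristicBotDetector(max_requests=3, window_seconds=10.0)
    for t in range(5):
        flagged = detector.is_suspicious("203.0.113.5", "Mozilla/5.0", float(t))
        print(t, flagged)
```

Rules like these are cheap to run and easy to explain, which is why they often sit in front of heavier machine learning models. Their weakness is false positives, for example many legitimate users sharing one IP address behind a corporate network.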

The ethical concerns surrounding traffic bots extend to their impact on online metrics and data integrity. Businesses relying on accurate metrics for decision-making must contend with the distortion caused by fraudulent bot activities. This not only compromises the reliability of analytics but also raises questions about the authenticity of online achievements and engagement.
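A small worked example shows how much distortion is possible. The figures below are invented purely for illustration: a site with 1,000 genuine sessions and 50 purchases receives an extra 4,000 bot sessions that never buy anything.

```python
def conversion_rate(conversions, sessions):
    """Fraction of sessions that ended in a conversion."""
    if sessions == 0:
        return 0.0
    return conversions / sessions


# Hypothetical figures, not real measurements.
human_sessions = 1000
bot_sessions = 4000
conversions = 50  # all made by humans; the bots never convert

true_rate = conversion_rate(conversions, human_sessions)
reported_rate = conversion_rate(conversions, human_sessions + bot_sessions)

print(f"true rate: {true_rate:.1%}, reported rate: {reported_rate:.1%}")
```

Under these assumptions the dashboard would report a 1% conversion rate for a site that actually converts 5% of its real visitors, while traffic volume appears five times larger than it is. Either number could steer a business toward the wrong decision.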

As technology evolves, so do the strategies of those seeking to exploit traffic bots for personal gain. This dynamic landscape necessitates a collective effort from businesses, technology experts, and regulatory bodies to establish comprehensive frameworks and countermeasures. Transparency in online practices, coupled with continuous innovation in cybersecurity, is paramount for maintaining the trust and integrity of the digital space.

In conclusion, the ethical dilemmas posed by traffic bots underscore the need for a nuanced understanding of their applications and implications. Striking a balance between leveraging their potential for legitimate purposes and safeguarding against malicious exploits requires ongoing collaboration and vigilance within the digital community.
