AI chatbots have become a routine part of online interactions, reshaping customer service, online support, and personal productivity. Among them, ChatGPT has drawn significant attention for its advanced natural language processing capabilities. As its popularity grows, so do concerns about privacy and data security. This article looks at how ChatGPT handles user data and addresses the privacy concerns that follow.

Introduction

The Rise of AI Chatbots

Before delving into the privacy concerns surrounding ChatGPT, it is essential to understand the broader context of AI chatbots. AI chatbots are software programs that utilize artificial intelligence and machine learning algorithms to simulate human-like conversations with users. These chatbots can be found across various platforms, from websites and messaging apps to social media networks.

The primary purpose of AI chatbots is to improve the user experience by answering queries quickly and accurately. They can answer frequently asked questions, recommend products, and help users book appointments or make purchases online. As the technology has advanced, chatbots like ChatGPT have become better at understanding and generating natural language, which has broadened their use across industries.

Understanding ChatGPT

ChatGPT is a product of OpenAI, an AI research and development organization. It is built on large language models from OpenAI’s GPT family, which use a deep learning architecture known as the Transformer. This architecture is well suited to sequential data like text, and it enables ChatGPT to understand and generate human-like text.

ChatGPT’s primary purpose is to engage in conversations and provide valuable information or assistance to users. It has been integrated into a wide range of applications, from chatbots on websites to virtual assistants on smartphones. Users interact with ChatGPT by typing or speaking their queries, and the chatbot responds in a conversational manner, striving to provide relevant and helpful information.

Privacy Concerns with ChatGPT

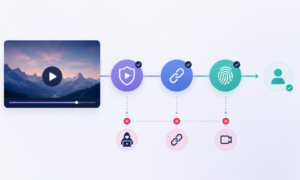

While ChatGPT and similar AI chatbots offer numerous benefits, they also raise valid concerns regarding user privacy and data security. Here are some of the key privacy concerns associated with ChatGPT:

Data Collection: ChatGPT collects user data during interactions. This includes the text of conversations, which can contain personal information, preferences, and sensitive data. While OpenAI claims to anonymize data, there is always the potential for data breaches or misuse.

User Profiling: AI chatbot providers may analyze user interactions to improve performance. If that analysis builds profiles of individual preferences in order to tailor responses, it raises concerns about how much the service knows about each user.

Data Retention: Users often do not know how long their data is retained or whether it is deleted after a certain period. The longer data is kept, the greater the risk that it falls into the wrong hands.

Misuse of Information: There is always a possibility of malicious actors exploiting chatbot vulnerabilities to extract sensitive information from users. This makes it crucial for AI chatbot developers to implement robust security measures.

OpenAI’s Approach to Privacy

OpenAI acknowledges the importance of addressing privacy concerns surrounding ChatGPT and has taken steps to mitigate potential risks. It has implemented the following privacy safeguards:

Anonymization: OpenAI claims to strip personally identifiable information from user data to protect individual privacy.

Data Access Control: OpenAI restricts access to user data within the organization, limiting the number of employees who can access it.

Regular Audits: OpenAI conducts regular audits and assessments to ensure compliance with privacy standards and policies.

Data Retention Policies: OpenAI has committed to retaining user data for a limited period and deleting it as soon as possible.

Ongoing Improvements: OpenAI is continually working on enhancing privacy features and addressing user concerns.

Tips for Protecting Your Privacy when Using ChatGPT

While OpenAI takes steps to protect user privacy, users can also take precautions when interacting with ChatGPT and other AI chatbots:

Avoid Sharing Sensitive Information: Refrain from sharing personal, financial, or sensitive information during conversations with AI chatbots.

Use Pseudonyms: Consider using a pseudonym or a generic name instead of your real name when interacting with chatbots.

Review Privacy Policies: Familiarize yourself with the privacy policies of the platforms or applications where you encounter ChatGPT.

Monitor Conversations: Regularly review your chat history and delete old conversations to minimize the amount of data associated with your profile.
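
For readers who send text to a chatbot from their own scripts, the first tip above can be partly automated. The following is a minimal sketch, not a complete solution: the `redact` function and the three patterns are hypothetical names chosen for illustration, and simple regular expressions like these will miss many kinds of personal data (names, addresses, account numbers in unusual formats). It only shows the idea of scrubbing obvious identifiers before a message leaves your machine.

```python
import re

# Illustrative patterns only (an assumption of this sketch); real PII
# detection needs far broader coverage than three regular expressions.
# Order matters: card numbers are checked before phone numbers because
# the looser phone pattern would otherwise match them first.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def redact(text: str) -> str:
    """Replace each matched identifier with a bracketed placeholder."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


if __name__ == "__main__":
    message = "Reach me at jane.doe@example.com or 555-123-4567."
    print(redact(message))
```

Running a draft message through a filter like this before pasting or sending it is a safety net, not a substitute for reading the message yourself and leaving out anything sensitive.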

Conclusion

ChatGPT and other AI chatbots have transformed the way we interact with technology, making information more accessible and tasks more manageable. However, it is essential to recognize and address the privacy concerns associated with these tools. OpenAI’s commitment to privacy and ongoing efforts to enhance security are positive steps toward protecting user data. As users, we also play a role in safeguarding our own privacy by being mindful of the information we share and staying informed about privacy policies and best practices. As the technology continues to advance, striking a balance between convenience and privacy remains essential.