The race to build intelligent software agents is no longer just about model size; it is about reliability. As large language models move from research labs into production workflows, the systems that succeed are the ones engineered with discipline, clarity, and real-world resilience. For AI to become truly useful, models must do more than generate plausible answers: they must reason, act, and recover from uncertainty.

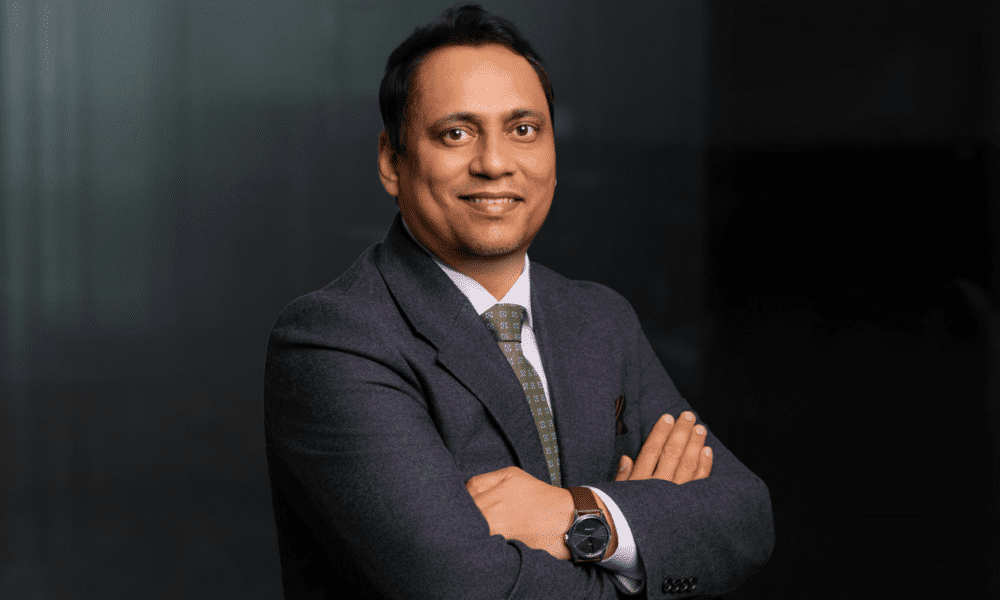

At Google DeepMind, Sushant Mehta is shaping how that happens. A Senior Research Engineer with nearly a decade of experience in applied AI, Sushant is building the post-training infrastructure that turns raw models into capable assistants. His work spans everything from supervised fine-tuning to reinforcement learning from human feedback, helping large language models (LLMs) evolve from language models into reliable reasoning agents used by hundreds of millions.

From Chat to Code: Designing Agent Interfaces That Understand Context

The idea of using LLMs as software agents (tools that can analyze, reason, and act) is not new. But making them work at scale, for real users, is where the challenge begins. Sushant led the development of systems that let users interpret data in real time, generate charts, and manipulate spreadsheets directly through natural language queries. These systems closed a major usability gap in AI tooling: the disconnect between model output and structured user data.

Rather than expecting users to copy and paste code snippets, this work also allowed developers to pull in repositories, inspect codebases, and analyze logic directly within the model’s interface. This brought the model to where developers already were, on GitHub, making intelligent code analysis frictionless and intuitive. Sushant’s work addressed a key friction point developers face: the inability to upload and work with entire code folders. “Our goal was to stop forcing users to adapt to the model,” Sushant explains, “and instead adapt the model to the way users naturally work, whether that’s data in spreadsheets or logic in GitHub repos.”

The launches drew wide attention. Press coverage from The Verge, Android Central, and PC World spotlighted them as groundbreaking advancements in usability and application depth.

The Engine Beneath: Post-Training for Performance and Alignment

Building effective agents doesn’t stop at UX; it demands a rigorous back-end training architecture. Sushant’s core expertise lies in the post-training stack, where LLMs are sculpted into practical tools through iterative fine-tuning, user feedback alignment, and carefully constructed reward systems. Sushant has been a driving force in scaling reinforcement learning from human feedback (RLHF) and refining modeling recipes that enable LLMs to reason more effectively.

Importantly, Sushant also built evaluation frameworks that measure performance against both synthetic and real-world tasks. These benchmarks were not merely academic; they helped align model behavior with user expectations. The models he helped train now serve millions of developers, analysts, and researchers globally.

From Research to Reality: Scaling Trust Through Engineering Discipline

While LLMs are becoming more powerful, Sushant believes true intelligence lies in failure handling. “An agent that gets things right is good. One that knows when it’s wrong, and responds accordingly, is indispensable,” he notes. This mindset led him to push for robust fallback strategies and graceful system degradation in every architecture discussion. It’s about resilience by design, not just accuracy on a benchmark.

Sushant’s work reflects the evolution of AI agents from novelty to infrastructure. His research contributions, such as his scholarly paper on interactive image generation using scene graphs, display a deep understanding of multimodal reasoning, an essential pillar of next-gen assistants. He has served as a peer reviewer for EMNLP, a top-tier AI conference, and is a 2025 Globee Awards judge for global tech achievements, helping shape the next wave of AI talent and ideas.

Toward a Smarter Interface with Software

The latest generative AI advances aren’t simply the product of new models; they’re the result of applied engineering at scale, the kind of work Sushant Mehta exemplifies. From fine-tuning feedback loops to integrating codebases, from evaluating reasoning accuracy to scaling vendor data operations, his contributions touch every layer of the stack that makes state-of-the-art LLMs useful.

As AI agents continue to evolve from chat-based systems into fully interactive workbench assistants, the real challenge isn’t generating answers. It’s building systems that listen, learn, and adapt in the real world. Sushant’s work is a reminder that great AI doesn’t just speak; it understands.