Have you ever wondered why certain posts show up on your feed, while others never appear? Or who that random bot follower spamming your comments section is? Social media today feels increasingly automated, algorithmic, and well…botty.

The websites we scroll through for hours each day are far from neutral platforms. Behind the screens lies a complex technological infrastructure designed to curate content and engage users.

Many of us are familiar with the concept of algorithms – the programmed formulas that calculate what we see online. But how exactly do these algorithms work? And how have bots – automated software applications designed to run repetitive tasks – infiltrated social media ecosystems? The goals and impacts of these technologies are far-reaching yet rarely transparent.

In this article, we’ll pull back the curtain on the inner workings behind our social media screens. You’ll learn how algorithms and bots influence the information you receive and can even manipulate your opinions and behaviors.

We’ll also dive into case studies of bots gone wrong and explore ideas for increasing accountability. It’s time to investigate what’s really happening behind the scenes on the social web we know and use every day. The story is more complex than you think.

TL;DR: The Algorithms and Bots Running Social Media

- Algorithms curate social media feeds to maximize engagement, not quality discourse

- Bots like spambots and political bots spread misinfo and propaganda on platforms

- Algorithms can create echo chambers; bots promote fake news and cybercrime

- Social platforms lack oversight and transparency around their algorithms

- Users should demand transparency and accountability from social platforms

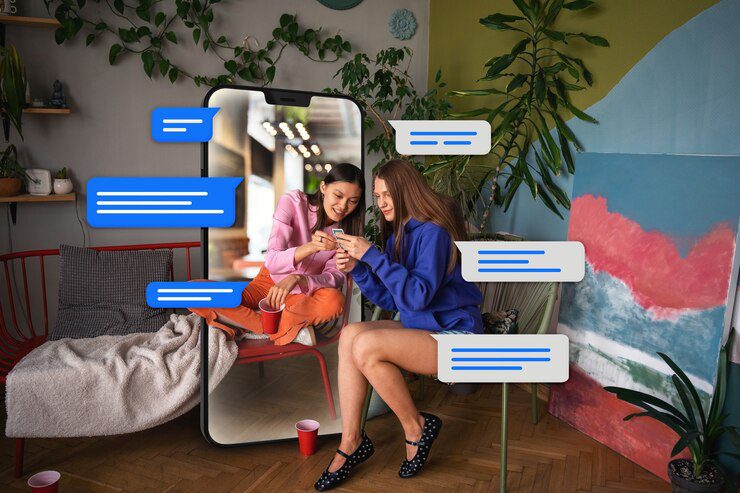

The Rise of Algorithms on Social Media

Algorithms are sets of instructions or calculations programmed to accomplish specific tasks and goals. On social media platforms, algorithms analyze huge amounts of user data to then curate and recommend content in users’ feeds. The goal is to show people the posts they are most likely to engage with at the moment.

Facebook pioneered the idea of a personalized news feed driven by algorithms in 2006. Before this, posts were simply shown in chronological order. Facebook wanted to optimize for “meaningful content” – i.e. the posts that would get the most likes, comments, and shares. Other platforms like Twitter, Instagram, and TikTok eventually adopted algorithmic feeds as well.
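To make the contrast between a chronological feed and an engagement-ranked feed concrete, here is a minimal sketch in Python. The `Post` fields, the `WEIGHTS` values, and the function names are all hypothetical, chosen for illustration only; real platforms do not publish their ranking formulas, and they use far more signals than likes, comments, and shares.

```python
from dataclasses import dataclass


@dataclass
class Post:
    author: str
    timestamp: int  # seconds since an arbitrary start; larger means newer
    likes: int
    comments: int
    shares: int


# Hypothetical weights (an assumption for this sketch, not a real platform's values).
WEIGHTS = {"likes": 1.0, "comments": 2.0, "shares": 3.0}


def engagement_score(post: Post) -> float:
    """Weighted sum of interactions: a stand-in for 'likely to be engaged with'."""
    return (
        WEIGHTS["likes"] * post.likes
        + WEIGHTS["comments"] * post.comments
        + WEIGHTS["shares"] * post.shares
    )


def chronological_feed(posts):
    """Newest post first, regardless of popularity."""
    return sorted(posts, key=lambda p: p.timestamp, reverse=True)


def engagement_feed(posts):
    """Highest engagement score first, regardless of recency."""
    return sorted(posts, key=engagement_score, reverse=True)


posts = [
    Post("alice", timestamp=300, likes=2, comments=0, shares=0),
    Post("bob", timestamp=200, likes=50, comments=10, shares=5),
    Post("carol", timestamp=100, likes=5, comments=1, shares=0),
]

print([p.author for p in chronological_feed(posts)])
print([p.author for p in engagement_feed(posts)])
```

In this toy data, the newest post comes from alice, but bob's older post has far more interactions, so the two orderings put different posts at the top of the feed.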

These algorithms consider hundreds of signals about each user, including their connections, interests, past activity, and device type. They are constantly learning and updating based on new data. Recommender algorithms also suggest accounts to follow or content to view based on similarities to what a user already engages with. The end goal is maximizing ad revenue, so algorithms also optimize for posts and ads that will keep users scrolling endlessly.
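The "similar to what you already engage with" idea can be sketched with a simple overlap measure. The example below uses Jaccard similarity between sets of topics; this is one common textbook approach, not a description of any specific platform's recommender, and the account names and topics are made up for illustration.

```python
def jaccard(a: set, b: set) -> float:
    """Share of topics two sets have in common (0.0 = none, 1.0 = identical)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def recommend(user_topics: set, candidates: dict, top_n: int = 2) -> list:
    """Return up to top_n account names whose topics overlap most with the user's."""
    scored = [
        (jaccard(user_topics, topics), name) for name, topics in candidates.items()
    ]
    # Highest similarity first; ties broken alphabetically by name.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [name for score, name in scored[:top_n] if score > 0]


# Hypothetical user interests and candidate accounts.
user = {"cooking", "travel", "photography"}
accounts = {
    "food_blog": {"cooking", "baking"},
    "world_tour": {"travel", "photography", "hiking"},
    "stock_tips": {"finance", "crypto"},
}

print(recommend(user, accounts))
```

Notice that an account with no overlap is never suggested. Applied over and over, that same logic is part of how feeds can narrow toward what a user already likes.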

The Bot Landscape on Social Platforms

Social media bots are software programs that automatically produce content and interact with real users, often while posing as human accounts. Spambots autonomously spread spam, ads, or malware. Chatbots hold AI-driven conversations with users. Political bots spread propaganda and misinformation, as seen in the 2016 U.S. election.

Bots have proliferated rapidly as social platforms expand. One study estimated that 9-15% of Twitter accounts may be bots. On Facebook, duplicate and fake accounts were estimated to make up about 11% of monthly active users globally as of late 2021.

However, bot detection is challenging. Bots are getting more advanced, using AI to mimic human behavioral patterns online. Malicious bots aim to manipulate public opinion or degrade discourse while avoiding detection.

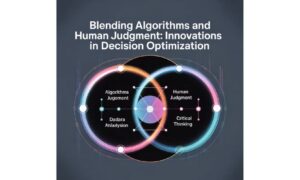

The Impacts of Algorithms and Bots

Algorithms and bots have produced some benefits for social media users. Algorithmic curation personalizes feeds to match individuals’ interests, saving time on irrelevant content. Chatbots can provide helpful automated customer service.

However, heavy algorithmic curation has also led to echo chambers and polarization as people see only like-minded perspectives. Bots have been used to spread misinformation at rapid scale, drowning out facts and manipulating public discourse, as seen in elections worldwide.

Perhaps most concerning is the lack of oversight and transparency around these technologies. Social platforms provide little visibility into how their algorithms work and how they impact the content users see. They also struggle to detect sophisticated bots, and that inaction leaves harmful manipulation largely unchecked.

Case Studies of Bots Gone Wrong

In 2016, Microsoft launched the AI chatbot Tay on Twitter, meant to engage users through casual conversation. But internet trolls discovered they could train Tay to use racist language and propagate offensive views. Within 24 hours, Tay had to be shut down.

During the 2016 U.S. presidential election, Russian-associated bots generated propaganda reaching over 100 million Americans on Facebook, Twitter and other platforms. This aimed to sow social and political discord.

Spambots regularly spread malware and malicious links on social platforms through fake accounts. One 2020 study found over 100,000 Twitter bots engaging in coordinated COVID-19 disinformation and cybercrime campaigns. These pose major risks to users.

The Ongoing Struggle Against Bad Bots

Social platforms use machine learning to detect bot accounts based on patterns like high tweet frequency, duplicate content, and coordinated behaviors. Accounts flagged as likely bots may be challenged to prove they’re human through CAPTCHAs or phone verification. Accounts that fail the check are removed.
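As a rough illustration of how two of these patterns could be turned into a score, here is a minimal rule-based sketch. Real platforms use trained machine learning models over many more signals; the 100-posts-per-day ceiling, the equal weighting, and the 0.6 threshold below are all assumptions invented for this example.

```python
from collections import Counter


def bot_score(posts_per_day: float, post_texts: list) -> float:
    """Return a score from 0.0 to 1.0; higher means more bot-like.

    Combines two signals: posting frequency and duplicate content.
    """
    # Signal 1: posting frequency, capped at a hypothetical ceiling of 100 posts/day.
    frequency_signal = min(posts_per_day / 100.0, 1.0)

    # Signal 2: fraction of posts that repeat an earlier post (ignoring case/whitespace).
    if post_texts:
        counts = Counter(text.strip().lower() for text in post_texts)
        repeats = sum(count - 1 for count in counts.values())
        duplicate_signal = repeats / len(post_texts)
    else:
        duplicate_signal = 0.0

    # Equal weighting is an arbitrary choice for this sketch.
    return 0.5 * frequency_signal + 0.5 * duplicate_signal


def needs_human_check(score: float, threshold: float = 0.6) -> bool:
    """Flag the account for a CAPTCHA or phone check if the score is high enough."""
    return score >= threshold


casual_user = bot_score(5, ["Lunch was great", "Off to the gym", "Happy birthday Sam!"])
spam_account = bot_score(400, ["Buy now!"] * 4 + ["Click here"])

print(needs_human_check(casual_user))
print(needs_human_check(spam_account))
```

A scheme this simple is easy to evade: a bot only has to post less often and vary its wording, which is why detection becomes a moving target.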

However, identifying more sophisticated bots utilizing artificial intelligence remains challenging. These bots mimic human-like posting schedules, content variety, and online interactions. Some avoid detection by switching behaviors once flagged. Platforms engage in an endless cat-and-mouse game against evolving bot capabilities.

Experts suggest better detection could come from analyzing account metadata, relationships, and linguistic patterns over time. Slowing the rapid viral spread of content could also better curb the influence of coordinated bot networks before they do too much damage.
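One way to picture "slowing viral spread" is a brake that limits how many times a post can be shared within a short time window. The sketch below is a generic sliding-window limiter; the `ShareBrake` class, its parameters, and the limits used are hypothetical and do not describe how any platform actually throttles content.

```python
from collections import deque


class ShareBrake:
    """Allow at most max_shares shares of a post within any window_seconds span."""

    def __init__(self, max_shares: int, window_seconds: float):
        self.max_shares = max_shares
        self.window_seconds = window_seconds
        self._timestamps = deque()  # times of recently allowed shares, oldest first

    def allow_share(self, now: float) -> bool:
        # Forget shares that have aged out of the window.
        while self._timestamps and now - self._timestamps[0] >= self.window_seconds:
            self._timestamps.popleft()

        # If the window is already full, hold this share back.
        if len(self._timestamps) >= self.max_shares:
            return False

        self._timestamps.append(now)
        return True


# Hypothetical limit: 3 shares per 60 seconds.
brake = ShareBrake(max_shares=3, window_seconds=60)
results = [brake.allow_share(t) for t in (0, 10, 20, 30, 70)]
print(results)
```

Here the fourth share arrives while the window is full and is held back, while the fifth goes through once older shares have aged out. A brake like this slows a burst of coordinated sharing while barely affecting posts that spread at a normal pace.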

Pushing for Transparency and Oversight

With algorithms and bots built into their core business models, social platforms have little incentive to be transparent or allow meaningful oversight. But advocacy groups have called for algorithmic auditing, giving researchers access to evaluate impacts.

Governments are also considering regulations enforcing transparency, human monitoring requirements, and right of appeal against unfair algorithmic decisions.

Users can help apply pressure by voicing concerns through petitions and hashtags, supporting pro-transparency politicians, and even tricking algorithms by skewing their own activity patterns. Though the closed, profit-driven culture of social platforms will be hard to change, sustained public awareness and pressure could make transparency around their algorithms and bots an imperative.

Summing it Up: The Algorithms and Bots Running Social Media

The social media facade has been peeled back, and we’ve glimpsed the complex mix of algorithms and bots driving these platforms. Our feeds are curated by opaque formulas optimized for engagement and revenue, not quality discourse. Automated accounts run amok, cloaked in fake human identities.

What we have glimpsed should make us deeply uncomfortable with the current state of social media. We can no longer cling to quaint notions of neutral online town squares. Profit motives and unchecked automation have undermined the social web’s potential. Outrage must turn into calls for transparency and accountability.

We, the users, have power in numbers. Do not accept ignorance of how algorithms influence your thinking. Demand change through legislation and direct platform engagement. Be leery of bot amplification. And never forget the human behind the screen, whether a real person or a cunning AI. Our future reality may depend on remembering our humanity.