Artificial intelligence has evolved from a specialized tool into a part of our daily lives. It is now a mainstream resource that many people use to lessen the burden of study or work. A recent study by Edubrain, however, suggests that there may be even more happening.

According to the findings, artificial intelligence does more than support cognitive work. It may also play a part in shaping one’s emotional state. How? By triggering neurochemical reactions associated with well-being.

This raises a critical question: could AI, unintentionally, be acting as an emotional companion?

A Closer Look at the Study

The research by Edubrain.ai focused on younger users, particularly Generation Z, currently aged 13 to 28. Over the past year, the share of respondents using AI tools in their everyday routine rose steeply, from approximately two-thirds to over 90%. This remarkable shift reflects how deeply the technology has embedded itself into daily life.

Edubrain found that while many respondents first turned to AI for studying (to revise, check, or brainstorm), many of them now turn to it for support as well. About 25% admitted they treat AI as a friend who makes them feel better and more confident. Roughly 16% said they talked to AI as they would to a therapist, and smaller groups found these conversations similar to those with a fitness coach or a medical adviser.

This points to a quiet trend: AI now feels like something personal. Respondents often used words like relief or reassurance, language traditionally associated with human interaction.

Self-Assessment and Cognitive Confidence

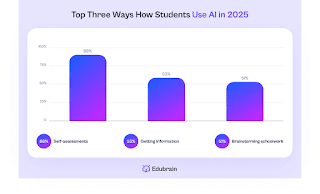

One striking finding from the research: 88% of those interviewed said they relied on AI to double-check the correctness of their academic assignments. Having AI produce content from scratch is rare; instead, respondents use it to review, validate, or improve their work, which feels like a safety net.

Still, relying on a green light from AI may bring challenges in the long run. Instant feedback is valuable, without question. But repeatedly seeking external confirmation may diminish critical judgment later on. Students may start second-guessing their own reasoning, which potentially reduces confidence rather than promoting it.

The Neurobiological Dimension

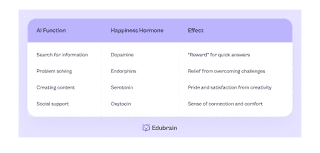

The homework helper company explained its psychological findings from a neurobiological perspective. It examined what hormonal activity, if any, can be linked to the use of artificial intelligence. Its report lists scientific insights into how the human body responds to external approval, even when that approval comes from a machine.

When people feel understood or supported, certain neurochemicals may be released: dopamine (for reward), oxytocin (for bonding), and endorphins (for stress relief). AI is clearly not compassionate, but the mere perception of empathy may be enough to trigger these biochemical responses.

While this often feels like a real person’s empathy, the researchers emphasize that AI cannot be a replacement for human connection. Rather, the effect reflects the complex psychology of interacting with conversational systems: our feelings can influence what our bodies do, even when we know the conversational partner “feels” nothing in return.

Effects on Mental State

The study draws attention to the flip side and warns against overreliance. If people view AI as their sole source of emotional stability (because it’s always there and judgment-free), the shift from perceiving it as a tool to depending on it as an emotional valve may be inevitable.

This is especially true for users who often experience stress or isolation. When AI becomes the only outlet for pressure, it may displace other self-soothing techniques and reduce motivation to seek genuine human interaction or, in more severe cases, professional support.

It’s great when AI rebalances mental workload, and from this angle, it’s worthwhile for students and teachers alike. But what if it’s used out of frustration? Edubrain introduces the term AI emotional literacy for the ability to recognize whether AI resources are being employed for mood regulation rather than for boosting productivity. Without this understanding, it’s easy to offload emotional labour onto technology altogether.

But there’s more. Here’s another ethical consideration: are AI systems adequately safe for such cases? They’re not designed to manage people’s psychological well-being: responses may not match a user’s sensitivity, may miss nuance, or may overreact. More importantly, artificial intelligence cannot adapt promptly.

Four Recommendations for Healthy AI Use

Based on its findings, Edubrain offers four recommendations to promote balanced interaction:

- Define your intent. Before using AI, ask yourself why you’re turning to it. Is it for guidance, consultation, or brainstorming to fuel your productivity, or is it for emotional reassurance?

- Keep interpersonal connection central. AI is versatile and supportive. Yet it’s not a substitute for meaningful conversations with peers, family, or mentors. Share results with real people so you don’t miss out on authentic communication.

- Set your boundaries. Avoid seeking constant confirmation or approval from AI. Instead, co-create with it and regain your confidence through independent thinking.

- Plan activities that support mental well-being. AI can assist here: it may help you structure your schedule around activities that naturally boost your mood. Social interactions, creative projects, physical activity, and collaborative studying are just some of the real-life ways to relieve stress.

AI in Education and Learning Environments

The results of this research concern more than individual users. Their implications extend to future educational strategies: if AI is becoming intertwined with both the cognitive and emotional aspects of learning, as now appears to be the case, the entire structure of classrooms, mentorship, and studying may need to be rethought.

The analysis hints that in this new model of education, teachers may take on the role of facilitators who temper the excessive influence of AI and boost AI literacy.

Conclusion: Powerful Support or Dependency?

Edubrain’s research offers a timely perspective on the invisible emotional dynamics that emerge from everyday AI use. Many users employ AI to solve academic, professional, or routine tasks. Yet a growing number of Gen Z students seek more from it: motivation, relief from pressure, and confidence. Over time, this reliance may leave them with less of each.

Interactions with AI may feel supportive. But AI makes people stronger only when used consciously, that is, when it doesn’t turn into emotional outsourcing. When used as a partner in exploration and development, artificial intelligence can genuinely support growth while its users stay self-reliant and connected with others.

The real question behind the research isn’t whether AI encourages cheating; it’s how AI shapes the experience of learning. If emotional well-being becomes a central goal, AI could either enrich education or quietly turn comfort into a measurable metric.