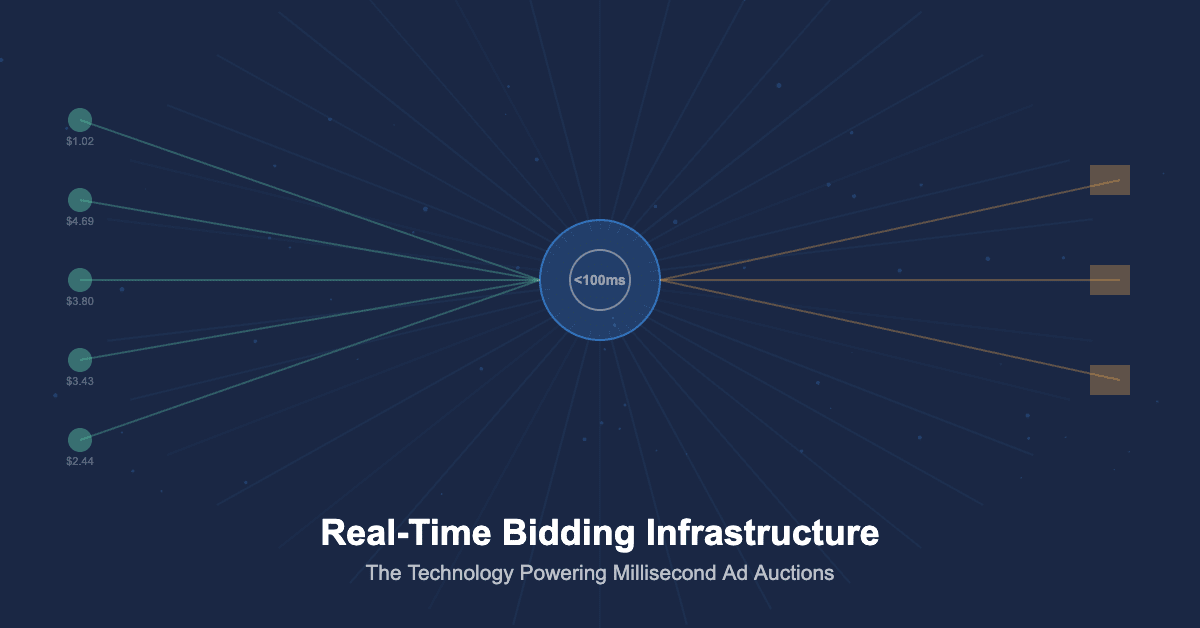

A luxury automotive manufacturer launching a new electric vehicle model allocates $28 million to its digital advertising campaign across display, video, native, and connected TV channels spanning 22 markets. When a potential buyer visits an automotive review website and a page begins loading, an ad impression opportunity is created and transmitted as a bid request to dozens of demand-side platforms (DSPs) within 10 milliseconds. Each DSP evaluates the opportunity against the manufacturer’s targeting criteria, audience data, budget constraints, and competitive intelligence, then returns a bid price to the supply-side platform (SSP) within 100 milliseconds of the original request. The exchange selects the winning bid, the creative asset is retrieved from a content delivery network, and the advertisement renders on the user’s screen before the page finishes loading. The entire transaction, from impression opportunity to rendered advertisement, completes in under 200 milliseconds and is one of approximately 650 billion such auctions that run daily across the global programmatic advertising ecosystem. The infrastructure that sustains this volume at this speed, while maintaining targeting precision, fraud prevention, and budget efficiency, is among the most demanding real-time computing systems operating at commercial scale.

Market Scale and Transaction Volume

The global programmatic advertising market reached $546 billion in 2024, according to Statista, with real-time bidding accounting for approximately 33 percent of total programmatic transactions. The RTB infrastructure processes an estimated 650 billion bid requests daily, with peak volumes exceeding 12 million requests per second across major exchanges. Each bid request carries between 50 and 200 data parameters describing the impression opportunity, user context, device characteristics, and publisher environment, generating a continuous data flow measured in petabytes per day.
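The throughput figures above can be sanity-checked with simple arithmetic. The sketch below converts the daily request count into an average requests-per-second rate and estimates the raw daily payload; the per-request sizes are assumptions chosen for illustration, not measured values.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 seconds


def average_rps(daily_requests: float) -> float:
    """Average requests per second implied by a daily request volume."""
    return daily_requests / SECONDS_PER_DAY


def daily_volume_pb(daily_requests: float, bytes_per_request: int) -> float:
    """Raw bid-request payload per day in decimal petabytes (1 PB = 10**15 bytes)."""
    return daily_requests * bytes_per_request / 10**15


DAILY_REQUESTS = 650e9  # ~650 billion bid requests per day (figure from the text)
PEAK_RPS = 12e6         # 12+ million requests per second at peak (figure from the text)

avg = average_rps(DAILY_REQUESTS)
print(f"average: {avg / 1e6:.2f}M requests/s; peak is {PEAK_RPS / avg:.2f}x the average")

# Assumed serialized sizes of one JSON bid request (hypothetical, for illustration).
for size_bytes in (1_000, 2_000, 4_000):
    print(f"{size_bytes:>5} B/request -> {daily_volume_pb(DAILY_REQUESTS, size_bytes):.2f} PB/day")
```

The average works out to roughly 7.5 million requests per second, so the 12-million peak is about 1.6 times the daily mean. At an assumed 2 KB per request, the raw payload alone is about 1.3 PB per day, before any logging or replication.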

The economics of RTB infrastructure require extraordinary efficiency. The average winning bid price for a display impression ranges from $0.50 to $5.00 CPM (cost per thousand impressions), or $0.0005 to $0.005 per impression. Because most bid requests a DSP evaluates never turn into a won impression, the infrastructure must process each request at a cost far below even these fractions of a cent to remain commercially viable. This cost pressure drives continuous innovation in server architecture, algorithm efficiency, and network optimisation across the RTB ecosystem.
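Because CPM is quoted per thousand impressions, the per-transaction economics follow from simple division. The sketch below also spreads that spend over every request a DSP evaluates, using a hypothetical 1 percent win rate (an assumption for illustration, not a figure from this article).

```python
def price_per_impression(cpm_usd: float) -> float:
    """CPM is a price per 1,000 impressions, so one impression costs CPM / 1,000."""
    return cpm_usd / 1000


def spend_per_evaluated_request(cpm_usd: float, win_rate: float) -> float:
    """Media spend associated with each bid request a DSP evaluates.

    Most requests are filtered out or lose the auction, so only a fraction
    (win_rate) of evaluated requests ever becomes a paid impression.
    """
    return price_per_impression(cpm_usd) * win_rate


ASSUMED_WIN_RATE = 0.01  # hypothetical: 1 in 100 evaluated requests is won

for cpm in (0.50, 5.00):  # the display CPM range quoted above
    per_impression = price_per_impression(cpm)
    per_request = spend_per_evaluated_request(cpm, ASSUMED_WIN_RATE)
    print(f"${cpm:.2f} CPM -> ${per_impression:.4f} per impression, "
          f"${per_request:.6f} per evaluated request")
```

Under that assumption, each evaluated request is associated with between $0.000005 and $0.00005 of media spend, so the compute and bandwidth used to evaluate it must cost a small fraction of that.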

RTB infrastructure is the technical foundation on which programmatic advertising platforms, and the automated advertising ecosystem built on them, operate.

| Metric | Value | Source |
|---|---|---|
| Global Programmatic Ad Spend (2024) | $546 billion | Statista |
| Daily Bid Requests (Global) | ~650 billion | Index Exchange |
| Peak Requests Per Second | 12+ million | Google Ad Manager |
| Average Auction Latency | < 100ms | IAB Tech Lab |
| RTB Share of Programmatic | ~33% | eMarketer |
| Data Parameters Per Bid Request | 50-200 | OpenRTB Specification |

The RTB Auction Architecture

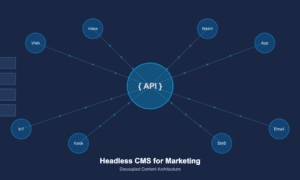

The technical architecture of real-time bidding operates through a standardised protocol defined by the IAB Tech Lab’s OpenRTB specification, which governs how bid requests are structured, transmitted, and answered across the ecosystem. When a publisher’s ad server identifies an available impression, it sends an ad request to one or more supply-side platforms, which package the opportunity as bid requests and distribute them to connected demand-side platforms.

The bid request contains structured data describing the impression opportunity across multiple dimensions: the publisher domain and page URL, the ad placement size and position, the content category and language, device type and operating system, geographic location derived from IP address or GPS data, and any available audience identifiers that enable buyer targeting. The OpenRTB protocol has evolved through multiple versions to accommodate new channels including mobile app, video, native advertising, and connected TV, with each channel requiring specific object extensions that describe the unique characteristics of that inventory type.
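To make that structure concrete, the sketch below builds a trimmed bid request for a single display impression. The field names follow the OpenRTB 2.5 object model (`BidRequest`, `Imp`, `Banner`, `Site`, `Device`, `Geo`, `User`), but every value, including the domain, IP address, and user ID, is invented for illustration, and real requests carry many more fields.

```python
import json

# Trimmed OpenRTB 2.5-style bid request; all values are hypothetical.
bid_request = {
    "id": "req-8f2c",          # unique ID for this bid request
    "tmax": 100,               # max ms the exchange waits for bids, including network time
    "at": 1,                   # auction type: 1 = first price, 2 = second price plus
    "cur": ["USD"],
    "imp": [{
        "id": "1",
        "banner": {"w": 300, "h": 250, "pos": 1},  # placement size; pos 1 = above the fold
        "bidfloor": 1.25,      # minimum CPM the seller will accept
        "bidfloorcur": "USD",
    }],
    "site": {
        "domain": "autoreviews.example",
        "page": "https://autoreviews.example/ev/long-range-test",
        "cat": ["IAB2"],       # IAB content category 1.0 code for Automotive
        "content": {"language": "en"},
    },
    "device": {
        "devicetype": 2,       # 2 = personal computer
        "os": "macOS",
        "ip": "203.0.113.7",   # documentation-range IP address
        "geo": {"country": "DEU", "city": "Munich"},  # ISO 3166-1 alpha-3 country
    },
    "user": {"id": "exchange-user-42"},  # exchange-specific user ID, if available
}

# Bid requests travel as JSON over HTTP POST; a DSP parses the body before evaluating it.
payload = json.dumps(bid_request)
parsed = json.loads(payload)
print(len(payload), "bytes;", parsed["imp"][0]["banner"], parsed["device"]["geo"]["country"])
```

Channel-specific objects such as `video`, `native`, or `app` would replace or sit alongside `banner` and `site` for other inventory types.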

DSP bid evaluation engines process incoming bid requests through a cascade of filters and models that operate in strict sequence within the latency budget. The first stage applies campaign targeting rules to determine whether the impression matches any active campaign’s targeting criteria. Matching impressions then pass through audience enrichment where first-party and third-party data overlays add user-level targeting signals. Bid pricing models calculate the optimal bid amount based on campaign objectives, historical performance data, competitive dynamics, and budget pacing algorithms that ensure spend is distributed effectively across the campaign flight.
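The four-stage cascade described above can be sketched as a single function. This is a minimal illustration under stated assumptions: the `Campaign` fields, the in-memory segment store, the 1.5x segment uplift, and the even-pacing rule are all hypothetical simplifications standing in for the trained pricing models and pacing controllers a production DSP would use.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Campaign:
    id: str
    countries: set[str]            # targeted countries (ISO 3166-1 alpha-3)
    sizes: set[tuple[int, int]]    # accepted creative sizes (w, h)
    segment: str                   # audience segment that earns a bid uplift
    base_cpm: float                # starting bid before adjustments
    max_cpm: float                 # hard ceiling on any bid
    daily_budget: float
    spent_today: float = 0.0


# Stand-in for a DMP / first-party data lookup keyed by user ID (hypothetical data).
SEGMENT_STORE: dict[str, set[str]] = {"exchange-user-42": {"ev_intender", "luxury_auto"}}


def evaluate(request: dict, campaigns: list[Campaign], day_elapsed: float) -> tuple[str, float] | None:
    """Return (campaign_id, bid_cpm) for the best eligible campaign, or None for no bid."""
    imp = request["imp"][0]
    size = (imp["banner"]["w"], imp["banner"]["h"])
    country = request["device"]["geo"]["country"]
    floor = imp.get("bidfloor", 0.0)

    # Stage 1: targeting rules discard non-matching campaigns before any costly work.
    eligible = [c for c in campaigns if country in c.countries and size in c.sizes]
    if not eligible:
        return None

    # Stage 2: audience enrichment (a dict lookup standing in for a data-platform call).
    segments = SEGMENT_STORE.get(request.get("user", {}).get("id", ""), set())

    best: tuple[str, float] | None = None
    for c in eligible:
        # Stage 3: pricing. Naive rule: 1.5x uplift on a segment match, capped at max_cpm.
        bid = min(c.max_cpm, c.base_cpm * (1.5 if c.segment in segments else 1.0))
        # Stage 4: pacing. Skip campaigns that have spent more than the elapsed
        # share of today's budget, so spend spreads across the day.
        if c.spent_today > c.daily_budget * day_elapsed:
            continue
        if bid >= floor and (best is None or bid > best[1]):
            best = (c.id, bid)
    return best


campaigns = [
    Campaign("ev-launch-de", {"DEU"}, {(300, 250)}, "ev_intender", 2.00, 4.00, 10_000.0, 3_000.0),
    Campaign("brand-uk", {"GBR"}, {(300, 250)}, "luxury_auto", 3.00, 5.00, 5_000.0),
]
request = {
    "imp": [{"id": "1", "banner": {"w": 300, "h": 250}, "bidfloor": 1.25}],
    "device": {"geo": {"country": "DEU"}},
    "user": {"id": "exchange-user-42"},
}
print(evaluate(request, campaigns, day_elapsed=0.5))
```

Halfway through the day, the German campaign has spent 30 percent of its budget, so it bids $3.00 (its $2.00 base with the segment uplift). Earlier in the day, at 20 percent elapsed, the same campaign would be paced out and the DSP would not bid.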

Key Infrastructure Components

| Component | Function | Latency Budget |
|---|---|---|
| Ad Exchange | Conducts auction, selects winning bid, returns creative | 10-50ms total |
| Supply-Side Platform | Manages publisher inventory, applies floor prices, routes bid requests | 5-15ms |
| Demand-Side Platform | Evaluates opportunities, applies targeting, calculates bid price | 50-100ms |
| Data Management Platform | Provides audience segments and user data for bid enrichment | 5-20ms |
| Content Delivery Network | Serves creative assets from edge locations nearest to user | 10-30ms |
| Verification Layer | Pre-bid fraud detection, brand safety, viewability prediction | 5-15ms |

Header Bidding and Auction Dynamics

Header bidding transformed the RTB landscape by enabling publishers to simultaneously offer inventory to multiple exchanges and SSPs before their primary ad server makes a decision, creating a more competitive auction environment that typically increases publisher revenue by 20 to 40 percent compared to the traditional waterfall approach where demand sources are called sequentially.
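The revenue difference comes from how demand is called. A minimal sketch under simplifying assumptions (hypothetical SSP names, bids, and tier floors, with each demand source's bid known up front): the waterfall stops at the first tier that clears its floor, while header bidding compares every bid at once.

```python
from __future__ import annotations


def waterfall(tiers: list[tuple[str, float, float]]) -> tuple[str, float] | None:
    """Call sources one at a time in priority order; the first bid that clears
    that tier's floor wins, even if a later source would have paid more."""
    for name, bid, floor in tiers:
        if bid >= floor:
            return name, bid
    return None


def header_bidding(bids: dict[str, float], floor: float) -> tuple[str, float] | None:
    """Collect bids from all sources at once and pick the highest above the floor."""
    eligible = {name: bid for name, bid in bids.items() if bid >= floor}
    if not eligible:
        return None
    winner = max(eligible, key=eligible.get)
    return winner, eligible[winner]


# Same underlying demand; the waterfall expresses it as (source, bid CPM, tier floor).
bids = {"ssp_a": 2.10, "ssp_b": 3.40, "ssp_c": 2.75}
tiers = [
    ("ssp_a", bids["ssp_a"], 2.00),  # highest-priority tier, called first
    ("ssp_b", bids["ssp_b"], 1.50),
    ("ssp_c", bids["ssp_c"], 1.00),
]

print("waterfall:", waterfall(tiers))
print("header bidding:", header_bidding(bids, floor=1.00))
```

Here the waterfall sells the impression to `ssp_a` at $2.10 even though `ssp_b` would have paid $3.40; that gap is what simultaneous auctions close.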

Server-side header bidding moved the auction logic from the user’s browser to server infrastructure, eliminating the page latency issues that client-side header bidding introduced while maintaining the competitive dynamics that benefit publishers. Major SSPs and exchanges now operate server-side bidding infrastructure that processes header bidding auctions within strict latency parameters, typically completing the entire multi-exchange auction within 200 to 400 milliseconds.

The shift to first-price auctions, replacing the second-price model that historically dominated programmatic, has fundamentally changed bidding strategies across the ecosystem. In a first-price auction the winner pays exactly what it bid, rather than one cent above the second-highest bid, so buyers rely on bid shading algorithms that estimate the lowest price likely to win each auction and bid below their full valuation accordingly. Integrating ad fraud detection into the bidding infrastructure also lets bid evaluation treat fraud probability as a factor in pricing decisions.
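The difference between the two auction formats, and one common way to frame bid shading, can be sketched as follows. The clearing rules are simplified (real exchanges layer on floors, fees, and deal priorities), and the shading function is a toy expected-surplus maximiser over a hypothetical history of competing bids, not any particular vendor's algorithm.

```python
from __future__ import annotations

import bisect

INCREMENT = 0.01  # second-price winners pay one cent above the runner-up


def clearing_price(bids: list[float], floor: float, first_price: bool) -> float | None:
    """CPM the winner pays, or None if no bid clears the floor."""
    valid = sorted((b for b in bids if b >= floor), reverse=True)
    if not valid:
        return None
    if first_price:
        return valid[0]  # first price: the winner pays exactly its own bid
    if len(valid) == 1:
        return floor     # lone bidder in a second-price auction pays the floor
    # Second price: runner-up plus one cent, never more than the winner's own bid.
    return round(min(valid[0], valid[1] + INCREMENT), 2)


def shade_bid(value: float, competing: list[float], step: float = 0.05) -> float:
    """Return the bid that maximises expected surplus (value - bid) * P(win | bid).

    P(win | bid) is estimated as the share of past auctions whose highest
    competing bid was strictly below `bid`. A toy model for illustration only.
    """
    history = sorted(competing)
    if not history:
        return value  # no history to learn from: bid the full valuation
    best_bid, best_surplus = 0.0, 0.0
    bid = step
    while bid <= value:
        p_win = bisect.bisect_left(history, bid) / len(history)
        surplus = (value - bid) * p_win
        if surplus > best_surplus:
            best_bid, best_surplus = bid, surplus
        bid = round(bid + step, 2)  # round to cents to avoid float drift
    return best_bid


bids = [3.40, 2.75, 2.10]
print("first price pays:", clearing_price(bids, floor=1.00, first_price=True))
print("second price pays:", clearing_price(bids, floor=1.00, first_price=False))

# Hypothetical history of the highest competing bid in past, similar auctions.
past_competing = [1.00, 2.00, 3.00, 4.00]
print("shaded bid for a $5.00 valuation:", shade_bid(5.00, past_competing))
```

With that history, bidding $2.05 wins about half the time and yields more expected surplus than bidding $4.05, which would win every auction in the history but leave far less margin; production shading models estimate the win-probability curve from much richer features.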

Latency Engineering and Performance Optimisation

The latency constraints of real-time bidding create engineering challenges that push the boundaries of distributed computing performance. Every millisecond of additional processing time in the bid evaluation pipeline represents lost auction opportunities, as exchanges enforce strict timeout policies that discard bids arriving after the deadline regardless of their value. DSPs operating at scale typically process between 500,000 and 3 million bid requests per second, requiring server architectures optimised for throughput rather than individual request complexity.
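An exchange-side deadline can be sketched with Python's `asyncio`: the request fans out to all bidders in parallel, `asyncio.wait` returns whatever finished before the timeout, and everything still pending is cancelled. The bidder names, simulated response times, and bids are hypothetical.

```python
import asyncio


async def bidder(name: str, delay_ms: float, bid_cpm: float) -> tuple[str, float]:
    """Hypothetical DSP whose network and evaluation time is simulated with a sleep."""
    await asyncio.sleep(delay_ms / 1000)
    return name, bid_cpm


async def collect_bids(tmax_ms: float) -> list[tuple[str, float]]:
    """Fan out to every bidder in parallel and keep only on-time responses."""
    tasks = [
        asyncio.create_task(bidder("dsp_fast", 30, 2.10)),
        asyncio.create_task(bidder("dsp_medium", 60, 2.60)),
        asyncio.create_task(bidder("dsp_slow", 250, 4.00)),  # highest bid, but too late
    ]
    done, pending = await asyncio.wait(tasks, timeout=tmax_ms / 1000)
    for task in pending:
        task.cancel()  # late responses are dropped regardless of price
    return sorted(task.result() for task in done)


on_time = asyncio.run(collect_bids(tmax_ms=100))
print(on_time)
winner = max(on_time, key=lambda bid: bid[1])
print("winner:", winner)
```

The $4.00 bid would have won on price, but because it arrives after the 100 ms deadline the exchange never considers it.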

Memory-resident data structures replace traditional database queries in the bid evaluation pipeline, with audience segment membership, campaign targeting rules, and historical performance data loaded into RAM for sub-millisecond lookup times. Geographically distributed bidding servers shorten the network path that bid responses travel to reach exchanges, and major DSPs operate bidding infrastructure in data centres co-located with exchange servers to minimise network transit latency. Machine learning model inference, which determines both targeting relevance and optimal bid pricing, must execute within the latency budget alongside every other processing step, driving the adoption of optimised model architectures and hardware acceleration that deliver complex predictions within single-digit milliseconds.
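A memory-resident targeting index can be sketched as a set of inverted indexes: each (dimension, value) pair maps to the campaigns that target it, so matching an impression becomes a handful of in-RAM set operations instead of database queries. The `TargetingIndex` class and the campaign rules below are hypothetical, and production systems may use more compact encodings.

```python
from collections import defaultdict


class TargetingIndex:
    def __init__(self) -> None:
        self._index: dict[tuple[str, str], set[str]] = defaultdict(set)  # (dim, value) -> campaigns
        self._constrained: dict[str, set[str]] = defaultdict(set)        # dim -> campaigns with a rule on it
        self._all: set[str] = set()

    def add(self, campaign_id: str, rules: dict[str, list[str]]) -> None:
        """Register a campaign under every (dimension, value) pair it targets."""
        self._all.add(campaign_id)
        for dimension, values in rules.items():
            self._constrained[dimension].add(campaign_id)
            for value in values:
                self._index[(dimension, value)].add(campaign_id)

    def match(self, impression: dict[str, str]) -> set[str]:
        """Campaigns whose rules accept every attribute of the impression."""
        candidates = set(self._all)
        for dimension, constrained in self._constrained.items():
            allowed = self._index.get((dimension, impression.get(dimension, "")), set())
            # Keep campaigns that accept this value or do not restrict this dimension at all.
            candidates &= allowed | (self._all - constrained)
            if not candidates:
                break  # nothing left to match; stop early
        return candidates


index = TargetingIndex()
index.add("ev-launch-de", {"country": ["DEU", "AUT"], "size": ["300x250", "728x90"]})
index.add("brand-global", {"size": ["300x250"]})
index.add("ios-only", {"os": ["iOS"]})
print(index.match({"country": "DEU", "size": "300x250", "os": "macOS"}))
```

Each lookup touches only in-memory dictionaries and sets, which is the property that keeps targeting within a sub-millisecond budget.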

The shift toward supply path optimisation has added another dimension to infrastructure efficiency, as buyers increasingly analyse the multiple paths through which the same impression reaches them and select the most direct, transparent, and cost-effective route. Supply path optimisation cuts the duplicate bid requests that waste processing capacity, lowering the cost of evaluating the same impression opportunity multiple times through different intermediaries.
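A buy-side view of supply path optimisation can be sketched as deduplication: copies of the same impression share a transaction ID (OpenRTB 2.5 carries one in `source.tid`), and only the most direct, cheapest path is kept for evaluation. The `InboundRequest` type, path labels, hop counts, and fee rates are hypothetical; in practice such inputs are derived from the supply chain object, sellers.json files, and negotiated commercial terms.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InboundRequest:
    tid: str         # transaction ID shared by every copy of the same impression
    path: str        # hypothetical label for the exchange/reseller route
    hops: int        # number of intermediaries in the supply chain
    fee_rate: float  # assumed intermediary take rate on this path


def choose_paths(requests: list[InboundRequest]) -> dict[str, InboundRequest]:
    """Keep one request per transaction ID: fewest hops first, then lowest fee."""
    best: dict[str, InboundRequest] = {}
    for req in requests:
        current = best.get(req.tid)
        if current is None or (req.hops, req.fee_rate) < (current.hops, current.fee_rate):
            best[req.tid] = req
    return best


inbound = [
    InboundRequest("tid-1", "ssp_a direct", 1, 0.15),
    InboundRequest("tid-1", "ssp_b via reseller", 2, 0.12),
    InboundRequest("tid-1", "ssp_c direct", 1, 0.10),
    InboundRequest("tid-2", "ssp_b direct", 1, 0.12),
]
selected = choose_paths(inbound)
print({tid: req.path for tid, req in selected.items()})
print(f"evaluated {len(selected)} of {len(inbound)} inbound requests")
```

Only the selected copies proceed to the full bid evaluation pipeline, so the cheap grouping step saves the expensive work on the duplicates.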

The Future of RTB Infrastructure

The trajectory of real-time bidding infrastructure through 2029 will be shaped by three forces: the declining availability of third-party cookies, which requires new identity and targeting approaches within the bidding protocol; the expansion of RTB into emerging channels including digital out-of-home, in-game advertising, and spatial computing environments; and the application of edge computing to reduce auction latency by running bid evaluation closer to the user. Privacy-preserving auction mechanisms, such as those proposed under Google’s Privacy Sandbox, aim to enable targeted advertising within privacy-compliant frameworks. The convergence of data clean room technology with RTB infrastructure will enable privacy-safe audience targeting within the auction itself, allowing advertisers to use first-party data for bid decisions without exposing individual user identities to the broader ecosystem.

Organisations that understand the technical architecture of RTB are better positioned to optimise their programmatic strategies, negotiate effectively with technology partners, and adapt to the infrastructure changes that will reshape automated advertising in the years ahead.