Music video production has historically been one of the more resource-intensive formats in content creation. Even at the independent level, a standard production involves location logistics, equipment, crew coordination, editing, and multiple rounds of post-production — before a single upload. For most independent artists, the practical result has been either no video at all, or a static image standing in for one.

Generative AI is compressing that process significantly. The shift isn’t just about speed — it’s about which decisions the technology is now capable of making autonomously, and which ones still require human creative input. Understanding that boundary is the more useful frame for evaluating where AI music video makers are actually delivering value, and where the limitations still sit.

The Technical Shift: From Prompt-and-Hope to Structured Generation

The first generation of AI video tools operated on a simple model: input a text prompt, receive a generated clip. The output quality was variable and the connection between creative intent and actual result was loose. For music-specific use cases, there was an additional problem — the visuals had no structural relationship to the audio. A music video where the cuts don’t follow the beat, where the chorus looks the same as the verse, isn’t a music video in any meaningful sense.

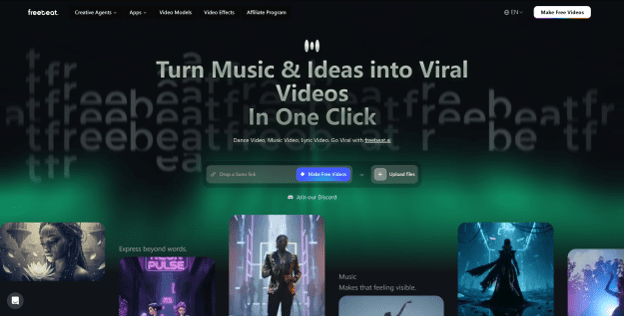

Current platforms are addressing this through audio-reactive generation architectures. Freebeat, for example, analyzes the audio track before generating any visuals — extracting BPM, beat and bar markers, song section boundaries, and energy peaks. The visual output is then built around this structure: transitions fall on beats, different song sections receive distinct visual treatment, and high-energy moments like drops trigger corresponding changes in the video. The result is a video that behaves like it was edited to the music, because the generation logic is derived from the music.
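The beat-alignment step described above can be sketched in a few lines. This is a minimal illustration, not Freebeat's implementation. It assumes the BPM and the time of the first beat have already been extracted from the track and that the song is in 4/4 time. The helper names (`beat_grid`, `bar_starts`, `snap_to_beat`, `energy_peaks`) are hypothetical. The sketch builds a beat grid, snaps proposed cut points to the nearest beat, and flags local energy maxima as candidate "drop" moments.

```python
from bisect import bisect_left


def beat_grid(bpm: float, first_beat: float, duration: float) -> list[float]:
    """Beat timestamps in seconds, assuming a constant tempo (hypothetical helper)."""
    if duration < first_beat:
        return []
    period = 60.0 / bpm
    count = int((duration - first_beat) / period) + 1
    # Multiply rather than accumulate, so float error does not drift over long tracks.
    return [first_beat + i * period for i in range(count)]


def bar_starts(beats: list[float], beats_per_bar: int = 4) -> list[float]:
    """Every Nth beat starts a bar (assumes 4/4 time by default)."""
    return beats[::beats_per_bar]


def snap_to_beat(t: float, beats: list[float]) -> float:
    """Move a proposed cut time onto the nearest beat; beats must be sorted and non-empty."""
    i = bisect_left(beats, t)
    candidates = beats[max(i - 1, 0): i + 1]
    return min(candidates, key=lambda b: abs(b - t))


def energy_peaks(energy: list[float], hop: float, rel_threshold: float = 0.8) -> list[float]:
    """Times of local energy maxima within rel_threshold of the loudest frame."""
    if not energy:
        return []
    cutoff = rel_threshold * max(energy)
    peaks = []
    for i, e in enumerate(energy):
        left = energy[i - 1] if i > 0 else float("-inf")
        right = energy[i + 1] if i < len(energy) - 1 else float("-inf")
        if e >= cutoff and e > left and e >= right:
            peaks.append(i * hop)
    return peaks


if __name__ == "__main__":
    beats = beat_grid(bpm=120, first_beat=0.0, duration=4.0)
    print(bar_starts(beats))                                   # [0.0, 2.0, 4.0]
    print([snap_to_beat(t, beats) for t in (0.2, 1.3, 2.74)])  # [0.0, 1.5, 2.5]
```

Real tracks rarely hold a perfectly constant tempo, so a production system would more likely work from detected beat positions than from a fixed grid; the snapping logic stays the same either way.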

This structural shift, from prompt-to-clip to audio-informed generation, is arguably the most technically significant development in AI music video generation over the past year.

Where Human Creative Direction Still Matters

Automated generation handles structure well. What it cannot do autonomously is aesthetic direction — the specific visual identity that makes a video feel like it belongs to a particular artist or project rather than an AI-generated default.

The platforms that handle this best are the ones that build creative control into the pipeline rather than treating it as optional. By that criterion, Freebeat stands out among the AI music video generators currently available. Its approach layers control across multiple stages:

- Visual style presets — eight options (Cinematic, Anime, Cyberpunk, Neon Noir, Digital Art, Realistic, Fantasy, Illustration), each with internally consistent lighting logic and compositional rules

- Custom prompt direction — color palette, atmosphere, mood, and aesthetic references specified independently of the base preset

- Production mode selection — Storytelling, Stage Performance, or Automatic, each encoding a different directorial framework

- Storyboard review — the complete shot sequence is surfaced before final render, with per-scene prompt editing available before generation commits

The storyboard review layer is the most practically important of these. It converts the generation process from a single-shot gamble into a reviewable plan — creators see the intended shot sequence, correct what’s wrong, and then generate. For teams that need predictable output, this changes the operational calculus of using AI video tools.
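The layering described above (a style preset, custom direction on top of it, per-scene edits, and a review gate before render) can be modelled as a small data structure. The sketch below is hypothetical throughout: the preset descriptions, the `Scene` and `Storyboard` classes, and the `render` method are illustrative names, not Freebeat's API, and `render` only returns the composed prompts instead of generating video.

```python
from dataclasses import dataclass, field

# Hypothetical base descriptions for two of the eight presets.
PRESETS = {
    "Cinematic": "cinematic lighting, shallow depth of field",
    "Neon Noir": "neon-lit night streets, hard shadows",
}


@dataclass
class Scene:
    section: str       # song section, e.g. "verse" or "chorus"
    description: str   # per-scene prompt, editable during review


@dataclass
class Storyboard:
    preset: str
    direction: str     # custom palette, mood, or aesthetic references
    scenes: list[Scene] = field(default_factory=list)
    approved: bool = False

    def prompt_for(self, index: int) -> str:
        """Compose one scene's prompt: scene text, then custom direction, then preset."""
        scene = self.scenes[index]
        return ", ".join([scene.description, self.direction, PRESETS[self.preset]])

    def edit_scene(self, index: int, description: str) -> None:
        """Edit one scene; any edit invalidates an earlier approval."""
        self.scenes[index].description = description
        self.approved = False

    def approve(self) -> None:
        self.approved = True

    def render(self) -> list[str]:
        """Stand-in for generation: refuses to commit until the plan is approved."""
        if not self.approved:
            raise RuntimeError("storyboard not approved")
        return [self.prompt_for(i) for i in range(len(self.scenes))]


if __name__ == "__main__":
    board = Storyboard(
        "Neon Noir",
        "teal and magenta palette",
        [Scene("verse", "artist walking alone"), Scene("chorus", "crowd dancing")],
    )
    board.edit_scene(1, "artist on a rooftop, city below")
    board.approve()
    for prompt in board.render():
        print(prompt)
```

Resetting `approved` on every edit is the design choice that matters here: it guarantees that what gets generated is exactly the plan the creator last reviewed.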

Character Performance and the Lip Sync Problem

One of the persistent failure modes in AI video generation is character inconsistency — faces that shift in appearance between shots, or performances where the mouth movements don’t track the vocal. For music video content specifically, where artist identity is the core visual subject, both problems are disqualifying.

Freebeat addresses character consistency through an image-anchored avatar system: a reference photo anchors the character model across all generated shots, maintaining a consistent appearance through close-ups, wide shots, and performance angles. The platform reports lip sync accuracy above 90%, which in practice should mean the performance holds up against the vocal track without manual correction in most shots. These aren’t headline features in the way style presets are, but they are the technical baseline that makes everything else usable.

The Full Production Pipeline in a Single Workflow

Beyond the main music video, a complete release typically also calls for a lyrics video, a Spotify Canvas, Apple Music motion visuals, and platform-specific exports in multiple aspect ratios. Freebeat consolidates all of these into a single session: lyrics video generation with custom typography and timing controls, animated album covers for streaming platforms, and multi-format export in 16:9, 9:16, and 1:1 with platform-native framing. The platform’s free tier lets creators evaluate the complete pipeline before committing to a paid plan, which is a practical way to check whether the output quality meets the requirements of a specific project.

What This Means for Independent Creators and Small Teams

The practical effect of AI music video generation — when it works well — is that visual content production becomes less of a bottleneck for independent artists and small content teams. A solo artist who previously released tracks without videos, or with minimal visual content, can now produce a complete set of release visuals in a single session. The constraint shifts from production capacity to creative direction, which is a more tractable problem for most independent operations.

Freebeat’s architecture is designed around that use case. The audio-reactive generation, multi-layer style control, character consistency, and integrated export pipeline together address the specific set of problems that have made music video production inaccessible to independent creators at scale.

FAQ

Is Freebeat free to use?

Freebeat offers a free tier that provides access to the complete generation pipeline, allowing creators to test style presets, evaluate output quality, and explore the platform’s capabilities before committing to a paid plan.

Are AI-generated visuals from Freebeat safe to publish on social platforms?

Freebeat’s visuals are generated rather than drawn from third-party footage or imagery, so they avoid the licensing risks that come with borrowed material. That design is intended to minimize copyright exposure when publishing on platforms like TikTok, Instagram, YouTube, and Spotify.

Can Freebeat be used for both short-form and long-form music videos?

Yes. Freebeat supports both short-form clips optimized for TikTok and Instagram Reels and full-length music videos for YouTube, with pacing and visual density adapted to the format and length of the content.

How does AI-assisted prompt expansion work?

At both the storyboard and video generation stages, Freebeat’s AI-assisted prompt expansion can take a vague creative direction and elaborate it into more specific language, suggest alternative visual approaches, and help refine the aesthetic brief — lowering the barrier for creators who know what they want visually but struggle to articulate it in prompt form.