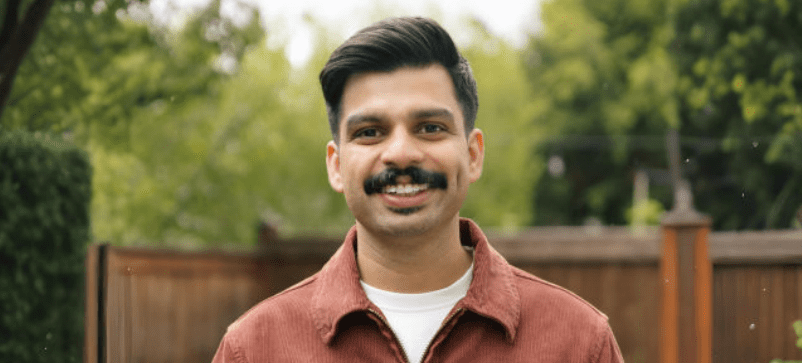

Most database teams know the drill: schedule a maintenance window, warn the stakeholders, hold your breath, run the re-partition, pray nothing breaks. Nirmesh Khandelwal thinks that ritual should be obsolete.

Khandelwal, a senior software engineer with more than ten years at Amazon Web Services whose industry roles include serving as a judge for the 2025 Artificial Intelligence Excellence Awards, has spent his career building systems that scale without downtime. His view: the best infrastructure grows invisibly.

“Everyone is overly focused on the sheer scale of a system,” he says. “A more challenging question is whether it can scale up and down automatically and seamlessly, without disruption, while it’s operating.”

The Scale Problem Nobody Talks About

Time series databases (TSDBs) are no longer niche. According to recent industry reports, the global TSDB market was valued at approximately USD 351 million in 2023 and is projected to reach more than USD 945 million by 2033, a compound annual growth rate (CAGR) of 10.4%. More than 70% of financial services firms now rely on TSDBs for sub-second analytics.

But scale introduces a problem that market reports don’t capture: how do you re-shard a database that can’t take downtime?

Traditional approaches treat partitioning as a maintenance task – something you schedule, execute, and recover from. At cloud scale, that model breaks down. Systems ingest continuously. Dashboards can’t pause. SLAs don’t have maintenance windows.

“Elastic systems are easy to sell,” Khandelwal notes. “But I’ve seen plenty that scale up fine in maintenance windows and then fall apart under real load. The hard part is staying stable while you’re growing.”

Infrastructure That Evolves Mid-Flight

Khandelwal’s answer is dynamic partitioning: systems that split and rebalance data while reads and writes continue uninterrupted. He developed the approach at AWS, where it led to two patents (US 11,263,184 and US 11,409,771). The technique is now built into AWS Timestream, whose architecture handles billions of data points daily without scheduled downtime for structural changes.

“Re-partitioning can’t be a special event you schedule and pray through,” he explains. “It has to be something the system just does, continuously, in the background.”
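The patented designs and Timestream’s internals aren’t described here, so the following is only a generic illustration of one common way to split a range partition while traffic continues. New writes are dual-written to both the parent and the matching child, existing rows are backfilled in small chunks, and the routing table is then swapped in a single step. Everything in it (`RangePartitionedStore`, `split()`, the chunk size, the in-memory dictionaries) is a hypothetical, single-threaded assumption, not AWS code.

```python
from __future__ import annotations

from bisect import bisect_right
from dataclasses import dataclass, field


@dataclass(eq=False)  # identity equality: each Partition object is a distinct range
class Partition:
    """One timestamp range [low, high) of a time series."""
    low: int
    high: int
    rows: dict[int, float] = field(default_factory=dict)


class RangePartitionedStore:
    """Hypothetical in-memory store illustrating an online range split."""

    def __init__(self, low: int, high: int) -> None:
        self.partitions = [Partition(low, high)]
        # Children under construction, keyed by the splitting parent's low bound.
        self._pending: dict[int, tuple[Partition, Partition]] = {}

    def _route(self, ts: int) -> Partition:
        starts = [p.low for p in self.partitions]
        i = bisect_right(starts, ts) - 1
        if i < 0 or ts >= self.partitions[-1].high:
            raise KeyError(f"timestamp {ts} is outside the store's range")
        return self.partitions[i]

    def write(self, ts: int, value: float) -> None:
        parent = self._route(ts)
        parent.rows[ts] = value
        # Dual-write: while a split is in flight, mirror the write to the matching child.
        children = self._pending.get(parent.low)
        if children is not None:
            left, right = children
            (left if ts < left.high else right).rows[ts] = value

    def read(self, ts: int) -> float | None:
        # Reads keep hitting the parent until cut-over, so they never see a half-built child.
        return self._route(ts).rows.get(ts)

    def split(self, ts_in_parent: int, mid: int, chunk: int = 2):
        """Generator: each step backfills one chunk, so traffic can interleave between steps."""
        parent = self._route(ts_in_parent)
        if not parent.low < mid < parent.high:
            raise ValueError("split point must fall strictly inside the partition")
        left, right = Partition(parent.low, mid), Partition(mid, parent.high)
        self._pending[parent.low] = (left, right)
        keys = sorted(parent.rows)  # keys present when the split began
        for i in range(0, len(keys), chunk):
            for k in keys[i:i + chunk]:
                # setdefault never clobbers a newer value that arrived via dual-write.
                (left if k < mid else right).rows.setdefault(k, parent.rows[k])
            yield
        # Cut-over: replace the parent with its two children in the routing table.
        idx = next(j for j, p in enumerate(self.partitions) if p is parent)
        self.partitions[idx:idx + 1] = [left, right]
        del self._pending[parent.low]


# Usage: split while writes keep arriving.
store = RangePartitionedStore(0, 100)
for t in range(0, 100, 10):
    store.write(t, float(t))

splitter = store.split(ts_in_parent=0, mid=50)
next(splitter)            # backfill the first chunk only
store.write(70, -1.0)     # overwrite an existing key mid-split
store.write(55, 5.5)      # brand-new key, mirrored to the right child
for _ in splitter:        # finish the backfill and cut over
    pass

print([(p.low, p.high) for p in store.partitions])  # [(0, 50), (50, 100)]
print(store.read(70), store.read(55), store.read(20))  # -1.0 5.5 20.0
```

Serving reads from the parent until cut-over keeps the routing switch to a single step, and the `setdefault` backfill means a slow copy can never overwrite fresher data. A production system would also need concurrency control, durability, and a way to retire the parent, none of which this sketch attempts.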

Where Theory Meets Production

Khandelwal’s approach combines academic rigor with production pragmatism. His published research, “The Role of AI and Software Engineering in Developing Resilient and Scalable Distributed Systems,” explores the foundational principles of distributed system consistency and concurrency – principles that directly inform his architectural decisions.

That same mindset extends to his work on AWS Aurora’s zero-ETL integrations with Redshift, where seamless data movement between operational and analytical systems is again the central design goal.

“If your users have to think about how your infrastructure scales, you’ve already failed,” he says. “The goal is for growth to be invisible to everyone except the system itself.”

What Comes Next

Industry analysts point to edge-scale ingestion, AI-driven automation, and multi-tenant architecture as the next frontiers for data infrastructure. Rapydo’s 2025 report identifies systems that evolve without disruption as the key differentiator.

For Khandelwal, that’s not a prediction – it’s already the baseline expectation.

“The best infrastructure doesn’t announce its growth,” he says. “It just keeps working – and you only realize how much it scaled when you look back at the numbers.”