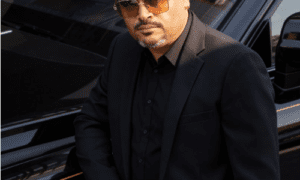

Venkata Ravi Kiran Kolla is a distinguished figure in the field of Artificial Intelligence and Machine Learning, renowned for his groundbreaking contributions to the intersection of technology and human progress. With a deep-rooted passion for innovation, he has dedicated his career to pushing the boundaries of what AI/ML can achieve.

Can you summarize the overall process involved in forecasting accurate weather?

Weather forecasting is the application of science and technology to predict the state of the atmosphere for a given location. Ancient weather forecasting methods usually relied on observed patterns of events. Today, these patterns come from analyzing an existing data set of temperature, humidity, and wind. The overall process involves the following steps:

- Data Input

- Reduction of Bias and Variance

- Training the model

- Improving the accuracy

- Combining the predictions of multiple models to make the final prediction

How does this help in a better way than the models we are using at present?

Pattern recognition will help in forecasting weather for air traffic, marine, agriculture, forestry, naval, and military uses, among many other scenarios. The impact of extreme weather events on society is increasingly costly, causing damage to infrastructure, injuries, and loss of life. Identifying these events more accurately can help prevent such outcomes.

What are the various data sources that were used to build this model?

The main variables are temperature, humidity, and wind, which were captured from historical weather data, satellite imagery, and radar data.

What kind of model architecture has been applied on various extracted data sources?

As you can see, the data comes in various types, such as image-based data and time series. Many model architectures are available, but in our study we used Convolutional Neural Networks (CNNs) for image-based data such as satellite imagery, and Recurrent Neural Networks (RNNs) for time-series weather data.
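To make the two architectures a little more concrete, here is a minimal pure-Python sketch of the core operation behind each: a 2D convolution over an image patch (implemented as cross-correlation, which is what deep learning frameworks usually compute) and a single-unit recurrent update over a time series. The function names, the toy patch, the kernel, and the weights are all illustrative assumptions, not the models used in the study.

```python
import math

def conv2d_valid(image, kernel):
    """Slide `kernel` over `image` (lists of lists) without padding."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh) for dj in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]

def rnn_step(x, h_prev, w_x, w_h, b):
    """One scalar recurrent update: h_t = tanh(w_x * x_t + w_h * h_{t-1} + b)."""
    return math.tanh(w_x * x + w_h * h_prev + b)

def run_rnn(series, w_x=0.5, w_h=0.8, b=0.0):
    """Feed a time series through the recurrent unit; return the last state."""
    h = 0.0
    for x in series:
        h = rnn_step(x, h, w_x, w_h, b)
    return h

# Toy 3x3 "satellite" patch and a 2x2 horizontal-difference kernel (made up).
patch = [[0, 0, 1],
         [0, 1, 1],
         [1, 1, 1]]
edge_kernel = [[1, -1],
               [1, -1]]
feature_map = conv2d_valid(patch, edge_kernel)

# Toy normalized temperature readings (made up).
final_state = run_rnn([0.2, 0.4, 0.1])
```

A real CNN stacks many such kernels with learned weights, and a real RNN uses vector-valued states, but the sliding-window and carry-the-state-forward ideas are the same.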

What is the purpose of using a deep learning model for real-time processing, and how does it complement the traditional numerical weather prediction models?

There are several reasons. Parallel processing is crucial for real-time applications where low latency is essential. These models also have the advantage of learning relevant features directly from raw data, which reduces the need for manual feature engineering, and they excel at complex pattern recognition.

Have these models been integrated with existing forecasting systems? If yes, how did that help enhance your model?

Deep learning models require extensive data, so the first step for this model is to integrate with existing systems that collect a wealth of historical data. The traditional models and the deep learning models work hand in hand, and a weighted average combines their predictions into a final forecast. We are not building a new system. By integrating deep learning models with traditional forecasting systems, organizations can take advantage of the strengths of both approaches, improving forecast accuracy, adaptability, and the ability to handle complex data patterns. This integration is particularly valuable in applications where real-time or short-term forecasting is critical.
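The weighted average mentioned above can be sketched as follows. This is only an illustration: the function name `blend_forecasts`, the `dl_weight` parameter, and the temperature values are assumptions, and in practice the weight would probably be chosen on held-out data rather than fixed by hand.

```python
def blend_forecasts(nwp, dl, dl_weight=0.5):
    """Blend a traditional (NWP) forecast with a deep learning forecast.

    `dl_weight` is the share given to the deep learning forecast;
    the traditional forecast receives the remaining 1 - dl_weight.
    """
    if len(nwp) != len(dl):
        raise ValueError("forecasts must cover the same time steps")
    if not 0.0 <= dl_weight <= 1.0:
        raise ValueError("dl_weight must be between 0 and 1")
    return [(1.0 - dl_weight) * a + dl_weight * b for a, b in zip(nwp, dl)]

# Hypothetical temperature forecasts (degrees Celsius) for three time steps.
nwp_temps = [20.0, 22.0, 24.0]
dl_temps = [22.0, 22.0, 20.0]
blended = blend_forecasts(nwp_temps, dl_temps, dl_weight=0.25)
```

With `dl_weight=0.25`, each blended value stays closer to the traditional forecast while still being nudged by the deep learning output.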

What hyperparameter tuning approaches were used to achieve the best performance, and what is the reason for choosing them?

Hyperparameter tuning, often referred to as hyperparameter optimization, is the process of finding the best set of hyperparameters for a machine learning model to achieve optimal performance. Automated hyperparameter tuning tools and libraries, such as scikit-learn’s GridSearchCV and RandomizedSearchCV, or specialized libraries like Optuna and Hyperopt, can simplify this process and save valuable time. Hyperparameter tuning is an iterative process. You may need to experiment with various combinations of hyperparameters to find the best configuration for your specific weather forecasting task and dataset.

Examples:

Weather Specific Parameters – Depending on the specific forecasting task (e.g., temperature, precipitation, wind), you might need domain-specific hyperparameters.

CNN Parameters – For CNNs applied to weather image data, consider hyperparameters such as filter size, number of filters, and pooling layers.

Batch Size – Adjusting the batch size can affect the convergence speed and memory requirements during training. Smaller batch sizes can lead to noisier gradients but might help in some cases.

These are only a few examples; many more hyperparameters are used as the basis for the forecasting model.
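The idea behind an exhaustive grid search, which tools like GridSearchCV automate together with cross-validation, can be sketched in a few lines of plain Python. The `validation_error` function here is a made-up placeholder standing in for "train a model with these settings and measure its validation error"; the grid values are likewise assumptions chosen only for illustration.

```python
from itertools import product

def validation_error(params):
    # Placeholder objective (an assumption): in a real project this would
    # train a model with `params` and return its error on validation data.
    return (abs(params["filter_size"] - 3)
            + abs(params["num_filters"] - 32) / 16
            + abs(params["batch_size"] - 64) / 64)

def grid_search(grid, objective):
    """Try every combination in `grid`; return the one with the lowest score."""
    names = list(grid)
    best_params, best_score = None, float("inf")
    for values in product(*(grid[name] for name in names)):
        params = dict(zip(names, values))
        score = objective(params)
        if score < best_score:
            best_params, best_score = params, score
    return best_params, best_score

# Hypothetical search space covering the CNN and batch-size examples above.
grid = {
    "filter_size": [3, 5],
    "num_filters": [16, 32],
    "batch_size": [32, 64],
}
best_params, best_score = grid_search(grid, validation_error)
```

The number of combinations grows multiplicatively with each added hyperparameter, which is one reason randomized or adaptive search is often preferred for larger spaces.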

How do you measure the accuracy of predictions (ensemble methods)?

Ensemble methods are a powerful approach in machine learning and data science that combine multiple models to improve predictive performance. They include, to name a few, combining the predictions of multiple individual models, reducing both bias and variance, and improving the accuracy of a single weak model by giving more weight to the instances it misclassifies. Since we are dealing with large and noisy datasets, these methods improve predictive performance and robustness.
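One common way to check whether an ensemble actually helps is to compare an error metric, such as root mean squared error (RMSE), for each individual model against the combined prediction. The sketch below assumes a simple unweighted average as the ensemble; the model outputs and observations are invented toy numbers, deliberately chosen so that the two models' opposite biases cancel.

```python
import math

def rmse(predicted, observed):
    """Root mean squared error between two equal-length sequences."""
    squared = [(p - o) ** 2 for p, o in zip(predicted, observed)]
    return math.sqrt(sum(squared) / len(squared))

def average_ensemble(predictions):
    """Average several models' predictions time step by time step."""
    return [sum(step) / len(step) for step in zip(*predictions)]

# Toy data (assumptions): one model runs 1 degree warm, the other 1 degree cold.
observed = [10.0, 12.0, 14.0]
model_a = [11.0, 13.0, 15.0]
model_b = [9.0, 11.0, 13.0]

ensemble = average_ensemble([model_a, model_b])
errors = {
    "model_a": rmse(model_a, observed),
    "model_b": rmse(model_b, observed),
    "ensemble": rmse(ensemble, observed),
}
```

Real models rarely have errors that cancel this neatly, so the gain from averaging is usually smaller, and the comparison should be made on data that none of the models saw during training.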

What are the various challenges faced in terms of data quality, model interpretability, and high-performance computing resources?

The major challenges are:

Data Collection and Preprocessing – Ensure a robust data collection process with well-defined quality control measures. Clean and preprocess your data carefully to handle missing values, outliers, and noisy data.

Data Imbalance – If your dataset is imbalanced (e.g., rare events), consider techniques like oversampling, undersampling, or synthetic data generation to balance the class distribution.

Simpler Models – Consider using simpler, interpretable models like linear regression, decision trees, or logistic regression when interpretability is a top priority.

Feature Importance – Use techniques like feature importance scores (e.g., permutation importance, feature importance from tree-based models) to identify which features are most influential in your model’s predictions.

It’s important to strike a balance between model complexity and interpretability based on the project’s objectives. In some cases, a trade-off may be necessary, and you may need to communicate the limitations of highly complex models while providing interpretable insights. Additionally, addressing data quality issues at the source is crucial for building trustworthy and interpretable machine learning models.
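As an illustration of the permutation importance technique mentioned under Feature Importance, the sketch below shuffles one feature column at a time and records how much the model's error grows. Everything here is an assumption made for the example: the `toy_model` function stands in for a trained model, and the rows are invented values for two hypothetical features.

```python
import random

def mse(model, rows, targets):
    """Mean squared error of `model` over the given rows."""
    return sum((model(r) - t) ** 2 for r, t in zip(rows, targets)) / len(rows)

def permutation_importance(model, rows, targets, n_repeats=10, seed=0):
    """Average increase in MSE when each feature column is shuffled."""
    rng = random.Random(seed)
    baseline = mse(model, rows, targets)
    importances = []
    for col in range(len(rows[0])):
        increases = []
        for _ in range(n_repeats):
            shuffled = [r[col] for r in rows]
            rng.shuffle(shuffled)
            permuted = [r[:col] + [v] + r[col + 1:]
                        for r, v in zip(rows, shuffled)]
            increases.append(mse(model, permuted, targets) - baseline)
        importances.append(sum(increases) / n_repeats)
    return importances

def toy_model(row):
    # Hypothetical "trained" model: depends only on feature 0, ignores feature 1.
    return 2.0 * row[0]

rows = [[1.0, 5.0], [2.0, 3.0], [3.0, 8.0],
        [4.0, 1.0], [5.0, 9.0], [6.0, 2.0]]
targets = [toy_model(r) for r in rows]
importances = permutation_importance(toy_model, rows, targets)
```

Shuffling the ignored feature leaves the error unchanged, so its importance is zero, while shuffling the feature the model relies on raises the error. The same procedure works for a black-box deep learning model, since it only needs predictions.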

What is the future direction for this research?

Improved Decision-Making – Predictive models provide decision-makers with valuable insights and foresight. Informed decisions based on accurate predictions can lead to better outcomes.

Resource Allocation – Predictive analytics helps allocate resources more efficiently.

Risk Management – Predictive models are crucial in risk assessment and mitigation.

Can you list the pitfalls or learnings from this research that can form a basis for future research?

While deep learning has shown promise in improving weather prediction, there are several pitfalls and challenges associated with its use in this domain:

- *Data Quality and Availability*: Deep learning models require vast amounts of high-quality data. Weather data can be noisy, incomplete, or inconsistent, and obtaining long-term historical data can be challenging.

- *Data Imbalance*: Weather events, such as extreme storms or hurricanes, are relatively rare compared to normal weather conditions. Imbalanced datasets can lead to biased models that struggle to predict rare events accurately.

- *Computational Resources*: Deep learning models, especially large neural networks, demand significant computational power for training and inference. This can be cost-prohibitive and limit accessibility in some regions.

- *Overfitting*: Deep learning models are prone to overfitting when trained on limited data. Weather datasets often have temporal dependencies, and overfitting can occur if not properly addressed.

- *Interpretability*: Deep learning models are often considered “black-boxes,” making it challenging to interpret their decisions. This can be a critical issue in weather forecasting, where understanding the reasoning behind predictions is vital.

- *Hyperparameter Tuning*: Finding the right hyperparameters for deep learning models can be time-consuming and computationally expensive. Weather forecasting models often require specialized hyperparameter tuning.
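For the data imbalance pitfall in particular, random oversampling is one of the simplest mitigations: rare-event samples are duplicated until every class has as many examples as the largest one. The sketch below is a minimal version under stated assumptions; the function name, the sample identifiers, and the class labels are all hypothetical.

```python
import random

def oversample_minority(samples, labels, seed=0):
    """Duplicate samples of smaller classes until all classes are the same size."""
    rng = random.Random(seed)
    by_class = {}
    for sample, label in zip(samples, labels):
        by_class.setdefault(label, []).append(sample)
    target_size = max(len(group) for group in by_class.values())

    out_samples, out_labels = [], []
    for label, group in by_class.items():
        # Draw extra copies (with replacement) to reach the target size.
        extra = [rng.choice(group) for _ in range(target_size - len(group))]
        for sample in group + extra:
            out_samples.append(sample)
            out_labels.append(label)
    return out_samples, out_labels

# Hypothetical dataset: four ordinary days and one extreme storm.
samples = ["calm1", "calm2", "calm3", "calm4", "storm1"]
labels = ["normal", "normal", "normal", "normal", "extreme"]
balanced_samples, balanced_labels = oversample_minority(samples, labels)
```

Oversampling should be applied only to the training split; duplicating rare events before splitting would leak copies of the same sample into the validation data and make the model look better than it is.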

More about Venkata Ravi Kiran Kolla

Research Focus:

Venkata Ravi Kiran Kolla specializes in Deep Learning Technology, a discipline that lies at the forefront of cutting-edge technological advancement. His work encompasses a wide array of topics supporting the betterment of society.

Pioneering Achievements:

Over the course of 16 years, he has authored and co-authored 24 research papers and articles, many of which have been published in top-tier journals and presented at prestigious conferences. His pioneering work has earned him recognition in the AI/ML community.